Recent years have seen 3D imaging grow in importance within the machine vision industry. The shortlist for this year’s Vision Awards at the Stuttgart trade fair is testament to how far the technology behind 3D has advanced, a trend that looks set to continue and open up a number of new use cases.

According to Raymond Boridy, product manager at Teledyne Dalsa, many applications that were previously not possible have been opened up thanks to 3D.

‘The sensors are more sophisticated now,’ he explained. ‘They are more accurate, they have less noise, they are faster and there is also the development of embedded processors, along with field programmable gate arrays (FPGAs). If you combine all of these technologies, you can build a pretty powerful laser profiler or structured light system.’

Similarly, Dr Tobias Henzler, 3D imaging technology specialist at Stemmer Imaging, sees technological advances driving new applications. ‘One reason that 3D has taken a big step forward is the power of computing. In embedded devices it is also increasing and, naturally, with 3D data you have more data that you have to process than with 2D and you have to do more complex calculations to get to the results,’ he said. ‘The second positive point is that sensors have got much faster... [whereas in the past] 3D systems have been quite slow. Those two facts together help more and more applications be solved in 3D.’

Advancing technology

One company working on 3D technology is Photoneo. Its latest 3D camera to head to market uses the company’s patented parallel structured light technology, implemented in a custom CMOS image sensor, and is one of the products on the Vision Awards shortlist.

‘We also make product software,’ explained Branislav Puliš, VP of sales and marketing. ‘It is mainly focused on robotic applications – the main application is bin picking. We also have software where robotic integrators are able to set up bin picking without us. It means that inside the bin picking software is a localisation algorithm, and based on the CAD file, we are able to localise parts in the [point] cloud. Then there is also the path planner for the robot and all the things that you are able to set up, such as the gripping point to point. Everything is inside the software.’

Stephan Kieneke, product manager for 3D sensors at Automation Technology, has seen a wider range of applications for 3D emerge, even compared to just two years ago.

‘When I started in our company,’ he explained, ‘I didn’t believe there could be so many applications for laser triangulation; the typical main use is inline inspection for assemblies.’

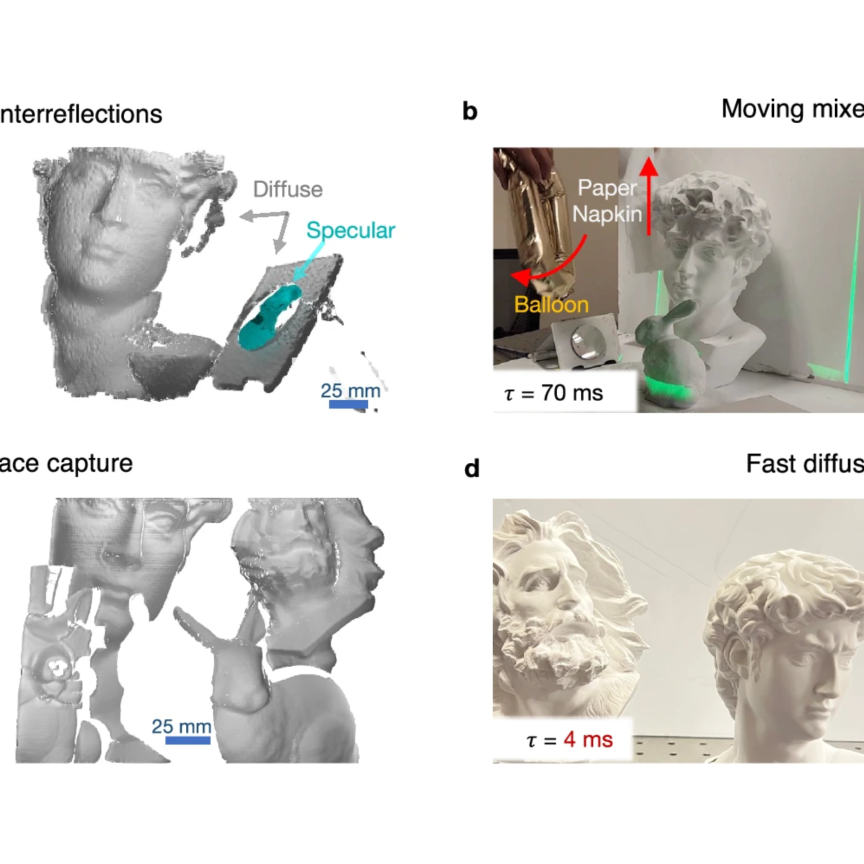

More applications are being opened up thanks to advances in 3D vision

Automation Technology has supplied triangulation sensors for measuring the thickness of smartphone batteries. According to Kieneke, smartphone manufacturers are trying to fit larger batteries in smaller housings, and 3D information can indicate whether the battery is functioning properly. He said: ‘With new 3D sensors, you get higher resolution, so you can easily detect really small defects in batteries, but also in the housing, to see if the electronic boards fit. This is something new and also a boost for laser triangulation sensors. We have sold a number of these sensors over the last few years, as have our competitors in the Asian market, where you have the main companies that produce the smartphones.’

Teledyne Dalsa’s Boridy says the benefits of 3D imaging are varied. ‘It is mainly used in the inspection of parts, and those parts can be related to industries from food to metal to semiconductors,’ he explained. ‘It is also useful for quality assurance and validations, and very useful for measurements that 2D cannot give us. Often, you need to verify parts or tolerances and you need to inspect if there are parts missing.

‘For example, if you’re inspecting a BGA chip and want to know if the balls are all aligned at the same level or if there are any missing, 2D can do that to a certain degree, but it cannot give the height if the balls are all at the same height. 3D is able to give you that, so it complements 2D.’
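
The ball height check Boridy describes can be illustrated with a minimal sketch. It assumes the 3D sensor has already produced one height value per ball; the function name, the dictionary layout and both thresholds are hypothetical, chosen only for illustration, and are not taken from any vendor’s software.

```python
from statistics import median


def check_bga_heights(heights_um, tolerance_um=25.0, missing_below_um=50.0):
    """Classify each ball as 'ok', 'missing' or 'height out of tolerance'.

    heights_um maps a ball label (e.g. 'A1') to its measured height in
    micrometres. Both thresholds are illustrative assumptions.
    """
    # Balls below the 'missing' threshold are excluded from the reference.
    present = [h for h in heights_um.values() if h >= missing_below_um]
    if not present:
        raise ValueError("no balls detected")

    # The median is used as the reference height so that a few bad balls
    # do not shift it.
    reference = median(present)

    report = {}
    for ball, height in heights_um.items():
        if height < missing_below_um:
            report[ball] = "missing"
        elif abs(height - reference) > tolerance_um:
            report[ball] = "height out of tolerance"
        else:
            report[ball] = "ok"
    return report


if __name__ == "__main__":
    sample = {"A1": 300.0, "A2": 305.0, "A3": 10.0, "A4": 360.0}
    for ball, status in check_bga_heights(sample).items():
        print(ball, status)
```

A 2D image could show that ball A3 is absent, but only the height data reveals that A4 sits higher than its neighbours.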

Limitations in 2D

Henzler concurs. ‘You need a 3D system if you want to measure height or deformations of freeform parts with freeform surfaces,’ he said. ‘There is no way to set up a 2D system that readily detects all the defects of such a part, especially if it’s not just a scratch but a variation of the thickness of the material somewhere on the part – you cannot detect this with 2D.

‘Another point is that if you have very low contrast, for instance, when inspecting rubber tyres, it’s very difficult to inspect this with 2D cameras. It’s much easier if you just take the height to create the contrast in the image; this is a typical application where 3D is needed. A third one is related to robotics, to pick and place applications. There is an intense development of new camera and software technologies to help solve these applications in an easier way. This helps to automate the industrial production processes. To bring Industry 4.0 to life you need a 3D camera to help robots see the objects they have to pick. This is where I see the biggest markets.’
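
The idea of using height to create contrast, as in the tyre example, can be sketched in a few lines. This is an assumption-laden illustration rather than any supplier’s method: it takes a height map as rows of values in millimetres (a hypothetical layout) and stretches it linearly to 8-bit grey levels, so that raised features appear bright regardless of the surface colour.

```python
def height_map_to_grey(height_mm):
    """Scale a 2D height map (rows of floats, in mm) to grey levels 0-255.

    The lowest point maps to 0 (black) and the highest to 255 (white).
    """
    flat = [h for row in height_mm for h in row]
    lowest, highest = min(flat), max(flat)
    span = highest - lowest

    # A perfectly flat surface carries no height contrast at all.
    if span == 0:
        return [[0 for _ in row] for row in height_mm]

    return [
        [round(255 * (h - lowest) / span) for h in row]
        for row in height_mm
    ]


if __name__ == "__main__":
    # Black lettering raised 1 mm on a black sidewall: invisible to a 2D
    # camera, but obvious once height is rendered as brightness.
    sidewall = [
        [2.0, 2.0, 2.0, 2.0],
        [2.0, 3.0, 3.0, 2.0],
        [2.0, 2.0, 2.0, 2.0],
    ]
    for row in height_map_to_grey(sidewall):
        print(row)
```

Once the height map is in this form, standard 2D image processing tools can be applied to it.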

For Puliš, 3D saves time as well. ‘Area scanners can scan the whole pallet at once,’ he explained. ‘So, in 400 to 500 milliseconds you are able to scan the whole area. We use laser projection, which allows immunity to ambient light and is quite fast. Basically, when working with 2D, you need special light, and you really need to calibrate the whole system, which is something that is quite complicated. 3D devices are pre-calibrated and each scanner is pre-calibrated for its volume.

‘When something happens in the application, you don’t need to re-calibrate the whole system, so you can save time in these cases. In 3D you have one more dimension, which means that for some applications, you really can save time in application development.’

Height measurement

Referring to what he says are the four main technological drivers of 3D imaging – laser triangulation, structured light, stereoscopy and time of flight – Boridy believes each has its own strengths and weaknesses, and therefore suitability for differing applications.

‘For example, if you want to do robotic bin picking, you want to source small parts in a big bag, so you use time of flight. Time of flight doesn’t have the resolution and accuracy of others but it can go faster [with] rough 3D measurements. If you want to do very precise measurements with high resolution, either use laser triangulation or structured light.’
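
One way to see why time of flight gives rougher measurements is the underlying arithmetic. The sketch below assumes the simplest, direct form of time of flight, where distance is half the round-trip time multiplied by the speed of light; many commercial cameras measure phase shift instead, so the figures are illustrative only and the function name is hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s):
    """Distance to the target for a given round-trip time of a light pulse.

    The pulse travels to the object and back, hence the division by two.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    # A 10 ns round trip corresponds to roughly 1.5 m.
    print(f"{tof_distance_m(10e-9):.3f} m")

    # The same formula converts timing uncertainty into depth uncertainty:
    # 100 ps of timing error is roughly 15 mm of depth error.
    print(f"{tof_distance_m(100e-12) * 1000:.1f} mm")
```

Because even picosecond-scale timing errors translate into millimetres of depth, the approach suits coarse, fast measurements better than fine metrology.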

Automation Technology’s C2 series of 3D cameras features a GigE Vision interface.

‘In the years before, there was no demand for laser triangulation,’ added Kieneke. ‘Then 3D printing was used for prototyping – even in our companies we have used 3D printers in the last couple of years for designing housings for the sensors. In the past they were produced in Asia – the designer made drafts and we could wait a couple of weeks before even realising if there were mistakes. Now, with 3D printing, it [can] still take days to finish but it is much less than the six to eight weeks.

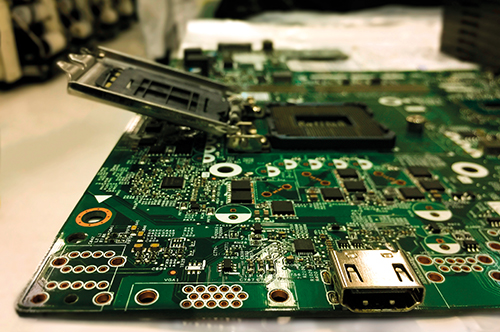

‘Then there is the trend for PCB inspection. We have a customer who is building automated optical inspection systems and who previously used only traditional 2D imaging. Now it’s getting more popular to have 3D cameras or 3D imaging for PCB inspection. A customer told us at the beginning [the results were] “only ok”, but one of their competitors used 3D imaging in their marketing, and it turned out that the end customers had a requirement for it. Now the customer says “we really only want to have 3D for PCB inspection.” They are selling a lot of sensors and a lot of machines.’

Future developments

With the technology behind 3D imaging becoming more sophisticated in recent years, has it now reached its pinnacle? Henzler believes not.

‘There are two paths that need to be followed to make 3D systems become more user friendly,’ he said. ‘One is to increase the resolution or field of view. There will be developments in the next few years for high resolution, high fields of view, faster sensors that really can image quickly and precisely. The second part is to make the software and configuration more user-friendly, so that the system integrator or final customer can easily and quickly develop the application or put it into place, to reduce the time to market. I’d like this to be a short-term development; I think it needs to be maybe in the next two years.

‘Often 3D software algorithms are present just in a programming library, and one has to program everything around it. The task is to make that much easier for the system integrator and to be able to configure and calibrate the system and its parameters quickly and put it into place. Especially on the software side, this is an important task because 3D is different from 2D, not just because it’s a dimension more; there are completely different algorithms used to process these images.

‘We are already seeing some products where ease of use takes centre stage. A good example is the LMI Gocator, which focuses on an easy user interface and is constantly evolving, making difficult applications easy. For the challenge of high-speed and higher resolution needs, we see strong developments from our partner Automation Technology.’

Puliš expects 3D technology to continue evolving. ‘I think there are still some improvements. This technology has some limitations, when you take into account the amount of spend to light and so on. There are some possibilities but for now, these devices are still quite unique for some applications where robots need to pick something. I think that now there is more demand for this kind of robotic application because there are some problems with labour – there are not enough people.

‘We must look for new ways of automation, something that is really pushing the systems that are communicating directly with the robot. This is the way that 3D is moving. This mainly applies to automotive and suppliers to automotive, but in general, [for] every assembly process or logistics, there are not enough people.’

Room for improvement

Likewise, Boridy thinks that there is still room for development: ‘3D is going to have more coverage in the future because the technology is growing and getting better and better. More applications will start to use 3D on a lot of applications and complement on others. AI will be part of this future, so will control 3D to do things that were impossible a couple of years ago. The embedded systems are getting better and faster, as well as smaller, lower cost, so all these technologies will enable companies to buy pretty sophisticated 3D systems for a reasonable cost. Something that was difficult to have a couple of years ago.

‘Not only the cost, but the performance was not as good as today. We are gaining in both directions. For example, if you wanted to verify car parts like doors or welding, 10 years ago, 2D was the only tool that gave the dimensions of the hole, the placement of the hole and the type of welding etc. Today, with 3D, because we can go through all the metric measurements like X, Y, Z rotation, 2D can be completely forgotten. I’m not saying that 2D is going to disappear as a repertoire, what I’m saying is that 3D is going to increase dramatically in the future.’

There is a new trend for PCB inspection using 3D

‘There are a lot of electronic trends,’ said Kieneke. ‘The processors get more powerful with less heat dissipation and less power consumption, because 3D imaging means you need computational power.

‘If you want to put this in a small form factor it gets very hot, so with new architectures and processors, they have less power consumption but are less powerful compared with smartphone processors. This is a trend for embedded vision. They use Arm processors, which have very good power consumption, so there will be a lot more companies entering the market.

‘What’s also very interesting is that really big global companies, like Intel, are interested in the imaging vision market. In the past it was only the consumer market but now they are really entering. 3D imaging was also expensive, the cameras were expensive. Now, big companies offer good price-performance ratios, and a RealSense camera is less than $200.

‘But it is also more new opportunities for new markets where the price and cost are very sensitive. And then it was mostly a compromise of quality and cost. Canon also has an imaging and machine vision solution. Normally 3D is still niche, but companies like Canon and Intel are entering the market and there are a lot of Asian companies entering the market for 3D imaging. It shows there is the need and definitely a trend.’

The end of 2D?

Henzler reasons that 2D will not disappear altogether. ‘I don’t think that this will happen,’ he said. ‘A 2D system is much easier to set up, and customers do not expect a 2D system to be metrically calibrated so if you talk about 3D, the initial assumption of the customer is that they get metrically calibrated data. It might not be necessary and you could make use of a 3D system, even if it is not calibrated.

‘Plenty of applications can be solved with 2D imaging. It is not a case that one replaces the other, it is something complementary. Some applications can be solved with 2D, or some are more difficult and can be solved with 3D. This is what I see. I don’t know what the future will bring, but I would be astonished if in 10 years’ time there is not a 2D system any longer on the market.’

When it comes to 3D, for Boridy, the race has already begun. ‘Now it is a question of who can develop the most and best products in a timely manner,’ he explained. ‘Time to market is something to consider and cost is always important – these are the two drivers.

‘Because the technology is available to everybody now, a lot of companies can do quality work and quality machines, but some companies will succeed better than others because they will be faster for the time to market and the cost will be lower. The customer will surely benefit and humanity in general will benefit.’