Researchers from North Carolina State University and the University of Delaware have developed an algorithm that can reconstruct hyperspectral images using less data.

The algorithm, together with new hardware, makes it possible to acquire hyperspectral images in less time and to store those images using less memory.

The work was published in the IEEE Journal of Selected Topics in Signal Processing.

Hyperspectral imaging holds promise for use in fields ranging from security and defence to environmental monitoring and agriculture. There is also a market in industrial inspection, for instance in food and pharmaceutical production.

Hyperspectral imaging creates images across dozens or hundreds of wavelengths, giving a chemical fingerprint of the objects in a scene. But whereas an RGB image with millions of pixels across three wavelengths might be one megabyte in size, a hyperspectral image file could be at least an order of magnitude larger. This can create problems for storing and transmitting data.
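The size gap follows from simple arithmetic, which the rough sketch below illustrates. The function name `raw_size_bytes`, the 1000 x 1000 pixel frame, the 100-band count and the one-byte-per-sample depth are all illustrative assumptions, not figures from the researchers. The sketch counts raw, uncompressed samples only, so the ratio is more meaningful than the absolute numbers.

```python
def raw_size_bytes(width, height, bands, bytes_per_sample=1):
    """Raw (uncompressed) size of an image with one sample per pixel per band."""
    return width * height * bands * bytes_per_sample


# Assumed frame size and band counts, chosen only for illustration.
rgb = raw_size_bytes(1000, 1000, bands=3)
hyper = raw_size_bytes(1000, 1000, bands=100)

# With the spatial resolution held fixed, size scales with the number of bands.
ratio = hyper / rgb
print(f"RGB: {rgb} bytes, hyperspectral: {hyper} bytes, ratio: {ratio:.1f}x")
```

Under these assumptions, a cube with 30 bands is already ten times the size of the three-band image, and hundreds of bands push the gap well past an order of magnitude.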

In addition, capturing hyperspectral images across dozens of wavelengths can be time-consuming. ‘It can take minutes,’ said Dror Baron, an assistant professor of electrical and computer engineering at NC State and one of the senior authors of a paper describing the new algorithm.

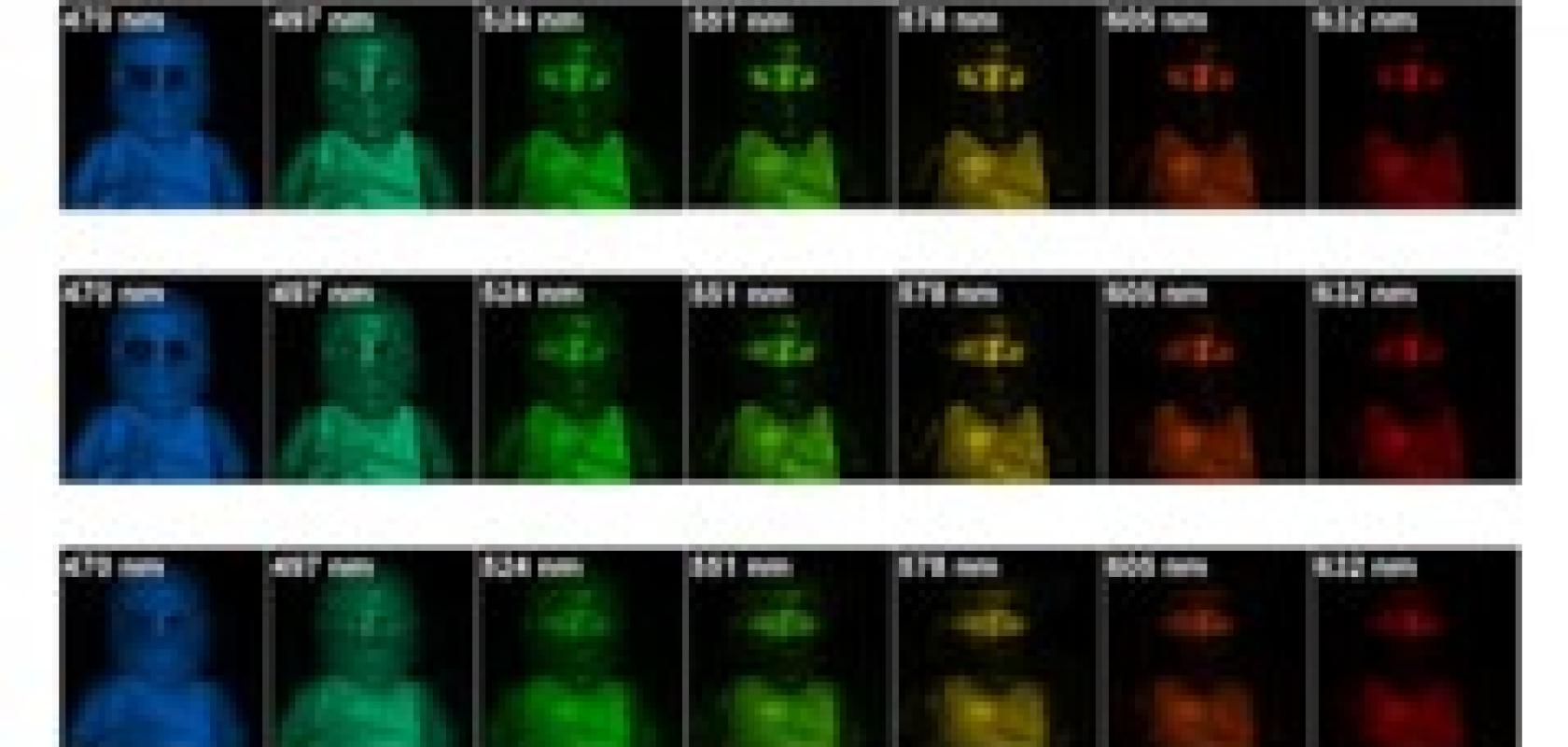

In recent years, researchers have developed new hyperspectral imaging hardware that can acquire the necessary images more quickly and store the images using significantly less memory. The hardware takes advantage of ‘compressive measurements’, which mix spatial and wavelength data in a format that can be used later to reconstruct the complete hyperspectral image.
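As a loose illustration of the idea, the sketch below treats each compressive measurement as a single number formed by a weighted sum over every pixel and every wavelength of a small data cube. It uses generic random plus-or-minus-one weights, which is an assumption made for simplicity; the real hardware's mixing pattern is not described here and will differ. All function names and sizes are hypothetical.

```python
import random


def make_cube(height, width, bands, seed=0):
    """Build a toy hyperspectral cube: cube[row][col][band] in [0, 1)."""
    rng = random.Random(seed)
    return [[[rng.random() for _ in range(bands)]
             for _ in range(width)]
            for _ in range(height)]


def flatten(cube):
    """Unroll the cube into one long list of samples."""
    return [value for row in cube for pixel in row for value in pixel]


def compressive_measurements(cube, num_measurements, seed=1):
    """Return num_measurements values, each mixing all spatial and
    wavelength samples with random +/-1 weights (an assumed stand-in
    for whatever mixing the actual hardware performs)."""
    samples = flatten(cube)
    rng = random.Random(seed)
    measurements = []
    for _ in range(num_measurements):
        weights = [rng.choice((-1.0, 1.0)) for _ in samples]
        measurements.append(sum(w * s for w, s in zip(weights, samples)))
    return measurements


cube = make_cube(height=4, width=4, bands=8)  # 128 samples in total
y = compressive_measurements(cube, num_measurements=32)
print(len(flatten(cube)), "samples stored as", len(y), "measurements")
```

Here 128 samples are reduced to 32 stored numbers. Recovering the full cube from those numbers is the job of a reconstruction algorithm, which this sketch does not attempt.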

But in order for the new hardware to work effectively, an algorithm is needed that can reconstruct the image accurately and quickly. ‘We were able to reconstruct image quality in 100 seconds of computation that other algorithms couldn't match in 450 seconds,’ Baron said. ‘And we're confident that we can bring that computational time down even further.’

The higher quality of the image reconstruction means that fewer measurements need to be acquired and processed by the hardware, speeding up the imaging time. And fewer measurements mean less data that needs to be stored and transmitted.

‘Our next step is to run the algorithm in a real-world system to gain insights into how the algorithm functions and identify potential room for improvement,’ Baron said. ‘We're also considering how we could modify both the algorithm and the hardware to better complement each other.’

Further information:

Compressive Hyperspectral Imaging via Approximate Message Passing