The field of neuromorphic vision, where electronic cameras mimic the biological eye, has been around for some 30 years.

Neuromorphic cameras (also called event cameras) mimic the function of the retina, the part of the eye that contains light-sensitive cells. This is a fundamental change from conventional cameras, and it is why the industrial and research applications of event cameras are also different.

Conventional cameras are built for capturing images and visually reproducing them.

They capture the whole field of view at predefined intervals, regardless of how the scene is changing. These frame-based cameras work excellently for their purpose, but they are not optimised for sensing or machine vision. They capture a great deal of information but, from a sensing perspective, much of it is redundant because it does not change from one frame to the next.

Event cameras suppress this redundancy and have fundamental benefits in terms of efficiency, speed, and dynamic range. Event-based vision sensors can achieve a speed versus power consumption trade-off that is better by up to three orders of magnitude. Because they acquire information differently from a conventional camera, they are also well suited to applications in machine vision and AI.

Event camera systems can quickly and efficiently monitor particle size and movement

“Essentially, what we’re bringing to the table is a new approach to sensing information, very different to conventional cameras that have been around for many years,” says Luca Verre, CEO of Prophesee, a market leader in the field.

Whereas most commercial cameras are essentially optimised to produce attractive images, the automotive, industrial, and Internet of Things (IoT) sectors, and even some consumer products, often have different performance requirements. If you are monitoring change, for instance, as much as 90% of the scene is redundant information because it does not change. Event cameras bypass this because each pixel responds only when the light it receives rises or falls by a certain relative amount, which produces a so-called “change event”.

In modern neuromorphic cameras, each pixel of the sensor works independently (asynchronously) and records continuously, so there is no downtime, even at microsecond timescales. And because pixels report only changes, the sensor does not output redundant data. This is one of the key aspects driving the field forward.
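The change-event principle described above can be illustrated with a minimal Python sketch. It is a simplified, frame-driven simulation rather than a model of any particular sensor: real event pixels work asynchronously in hardware, and the `Event` type, the `generate_events` function, and the 0.2 log-intensity threshold are all assumptions made for illustration.

```python
import math
from typing import List, NamedTuple, Sequence


class Event(NamedTuple):
    t: float       # timestamp in seconds
    x: int         # pixel index (a 1-D sensor keeps the sketch short)
    polarity: int  # +1 = brighter, -1 = darker


def generate_events(samples: Sequence[Sequence[float]],
                    timestamps: Sequence[float],
                    threshold: float = 0.2) -> List[Event]:
    """Simulate change events from sampled intensities.

    samples[k][x] is the (strictly positive) linear intensity of pixel x
    at timestamps[k]. The threshold is a hypothetical contrast step,
    expressed as a change in log intensity.
    """
    # Each pixel keeps its own reference: the log intensity at its last event.
    reference = [math.log(v) for v in samples[0]]
    events: List[Event] = []
    for t, row in zip(timestamps[1:], samples[1:]):
        for x, value in enumerate(row):
            delta = math.log(value) - reference[x]
            # Emit one event per threshold step; unchanged pixels emit nothing.
            while abs(delta) >= threshold:
                polarity = 1 if delta > 0 else -1
                events.append(Event(t, x, polarity))
                reference[x] += polarity * threshold
                delta = math.log(value) - reference[x]
    return events


# Three pixels sampled three times: pixel 0 never changes,
# pixel 1 brightens once, pixel 2 darkens once.
samples = [[100, 100, 100], [100, 150, 100], [100, 150, 60]]
events = generate_events(samples, [0.0, 0.001, 0.002])
```

In this toy scene the static pixel produces no output at all, which is the redundancy suppression the article describes: the output volume depends on how much the scene changes, not on the number of pixels.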

Innovation in neuromorphic vision

Vision sensors typically gather a lot of data, but increasingly there is a drive to use edge processing for these sensors. For many machine vision applications, edge computation has become a bottleneck. But for event cameras, it is the opposite.

“More and more, sensor cameras are used for some local processing, some edge processing, and this is where we believe we have a technology and an approach that can bring value to this application,” says Verre.

“We are enabling fully fledged edge computing by the fact that our sensors produce very low data volumes. So, you can afford to have a cost-reasonable, low-power system on a chip at the edge, because you can simply generate a few event data that this processor can easily interface with and process locally.

“Instead of feeding this processor with tons of frames that overload them and hinder their capability to process data in real-time, our event camera can enable them to do real-time across a scene. We believe that event cameras are finally unlocking this edge processing.”

Making sensors smaller and cheaper is also a key innovation because it opens up a range of potential applications, such as in IoT sensing or smartphones. For this, Prophesee partnered with Sony, mixing its expertise in event cameras with Sony’s infrastructure and experience in vision sensors to develop a smaller, more efficient, and cheaper event camera evaluation kit. Verre thinks the pricing of event cameras is at a point where they can be realistically introduced into smartphones.

Another area companies are eyeing is fusion kits – the basic idea is to mix the capability of a neuromorphic camera with another vision sensor, such as lidar or a conventional camera, into a single system.

“From both the spatial information of a frame-based camera and from the information of an event-based camera, you can actually open the door to many other applications,” says Verre. “Definitely, there is potential in sensor fusion… by combining event-based sensors with some lidar technologies, for instance, in navigation, localisation, and mapping.”

Neuromorphic computing progress

However, while neuromorphic cameras mimic the human eye, the processing chips they work with are far from mimicking the human brain. Most neuromorphic computing, including work on event camera computing, is carried out using deep learning algorithms that run on CPUs or GPUs, which are not optimised for neuromorphic processing. This is where new chips such as Intel’s Loihi 2 (a neuromorphic research chip), together with Lava (an open-source software framework), come in.

“Our second-generation chip greatly improves the speed, programmability, and capacity of neuromorphic processing, broadening its usages in power and latency-constrained intelligent computing applications,” says Mike Davies, Director of Intel’s Neuromorphic Computing Lab.

BrainChip, a neuromorphic computing IP vendor, also partnered with Prophesee to deliver event-based vision systems with integrated low-power technology coupled with high AI performance.

It is not only industry that is accelerating the field of neuromorphic chips for vision – there is also an emerging but already active academic field. Neuromorphic systems have enormous potential, yet they are rarely used in a non-academic context. In particular, there are no industrial deployments of these bio-inspired technologies. Nevertheless, event-based solutions are already far superior to conventional algorithms in terms of latency and energy efficiency.

Working with the first iteration of the Loihi chip in 2019, Alpha Renner et al (‘Event-based attention and tracking on neuromorphic hardware’) developed the first set-up that interfaces an event-based camera with the spiking neuromorphic system Loihi, creating a purely event-driven sensing and processing system. The system selects a single object out of a number of moving objects and tracks it in the visual field, even when movement stops and the event stream is interrupted.

In 2021, Viale et al demonstrated the first spiking neural network (SNN) on a chip used for a neuromorphic vision-based controller solving a high-speed UAV control task. Ongoing research is looking at ways to use neuromorphic neural networks to integrate chips and event cameras for autonomous cars. Since many of these applications use the Loihi chip, newer generations, such as Loihi 2, should speed development. Other neuromorphic chips are also emerging, allowing algorithms to be trained quickly even with a small dataset. Specialised SNN algorithms operating on neuromorphic chips can further help edge processing and general computing in event vision.

“The development of event-based cameras, inspired by the retina, enables the exploitation of an additional physical constraint – time. Due to their asynchronous course of operation, considering the precise occurrence of spikes, spiking neural networks take advantage of this constraint,” write Lea Steffen and colleagues (‘Neuromorphic Stereo Vision: A Survey of Bio-Inspired Sensors and Algorithms’).
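To make the role of spike timing concrete, here is a minimal sketch of a single leaky integrate-and-fire neuron, a common building block of spiking neural networks. It is a textbook-style illustration only, not the model used on Loihi or in the cited papers; the function name `lif_response` and the weight, time constant, and threshold values are hypothetical.

```python
import math
from typing import Iterable, List


def lif_response(spike_times: Iterable[float],
                 weight: float = 0.6,
                 tau: float = 0.010,
                 threshold: float = 1.0) -> List[float]:
    """Return the times at which a leaky integrate-and-fire neuron fires.

    The neuron is updated only when an input spike arrives (event-driven).
    Between spikes its membrane potential decays exponentially with time
    constant tau (seconds). All parameter values are illustrative.
    """
    potential = 0.0
    last_t = None
    output: List[float] = []
    for t in sorted(spike_times):
        if last_t is not None:
            # Leak: the longer the gap since the last input, the more is lost.
            potential *= math.exp(-(t - last_t) / tau)
        potential += weight          # integrate the incoming spike
        if potential >= threshold:   # fire and reset
            output.append(t)
            potential = 0.0
        last_t = t
    return output


# Two input spikes 1 ms apart are enough to make the neuron fire...
close_pair = lif_response([0.000, 0.001])
# ...but the same two spikes 50 ms apart are not.
distant_pair = lif_response([0.000, 0.050])
```

The same number of input spikes produces a different result depending only on when they arrive, which is the sense in which time becomes usable information. The neuron also does no work between inputs, which matches the sparse output of an event camera.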

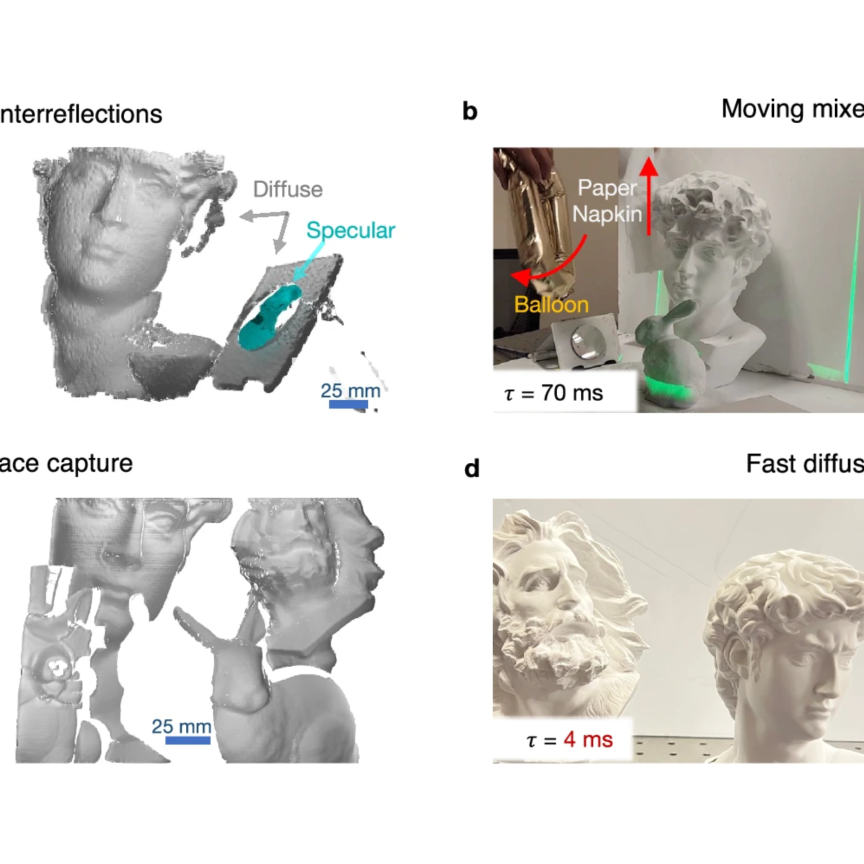

Lighting is another aspect the field of neuromorphic vision is increasingly looking at. An advantage of event cameras compared with frame-based cameras is their ability to deal with a range of extreme light conditions – whether high or low. But event cameras can now use light itself in a different way.

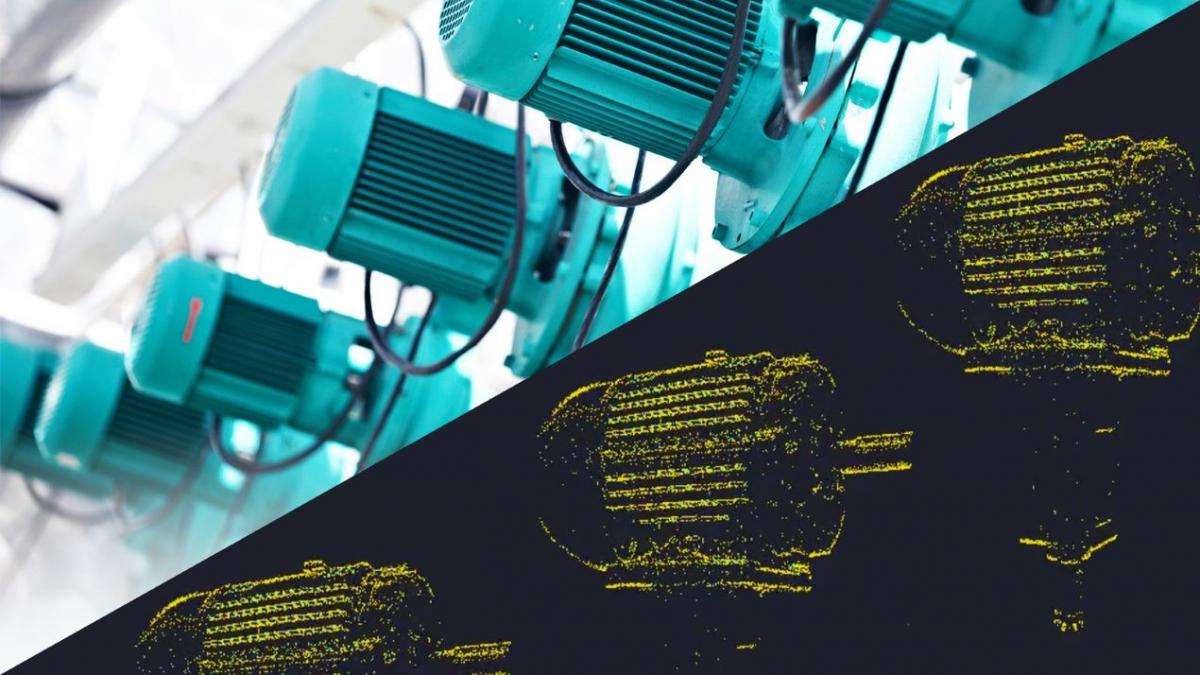

Prophesee and CIS have started work on the industry’s first evaluation kit for implementing 3D sensing based on structured light. The kit combines event-based vision with a projected light pattern to generate an accurate 3D Point Cloud.

“You can then use this principle to project the light pattern in the scene and, because you know the geometry of the setting, you can compute the disparity map and then estimate the 3D and depth information,” says Verre. “We can reach this 3D Point Cloud at a refresh rate of 1kHz or above. So, any application of 3D tourism, such as 3D measurements or 3D navigation that requires high speed and time precision, really benefits from this technology. There are no comparable 3D approaches available today that can reach this time resolution.”
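The step from a disparity map to depth that Verre describes can be sketched with the standard triangulation relation for a rectified projector and camera pair, Z = f · B / d, followed by pinhole back-projection. This is a generic sketch and not the method of the Prophesee and CIS kit; the function names and the focal length, baseline, and principal point values are assumptions chosen for illustration.

```python
from typing import Optional, Tuple


def depth_from_disparity(disparity_px: float,
                         focal_length_px: float,
                         baseline_m: float) -> Optional[float]:
    """Depth in metres from disparity in pixels: Z = f * B / d.

    Returns None when the disparity is not positive (no valid match).
    """
    if disparity_px <= 0:
        return None
    return focal_length_px * baseline_m / disparity_px


def back_project(u: float, v: float, depth_m: float,
                 focal_length_px: float,
                 cx: float, cy: float) -> Tuple[float, float, float]:
    """Turn pixel (u, v) with known depth into a 3D point (pinhole model)."""
    x = (u - cx) * depth_m / focal_length_px
    y = (v - cy) * depth_m / focal_length_px
    return (x, y, depth_m)


# Hypothetical set-up: 800 px focal length, 12.5 cm projector-camera
# baseline, principal point at the centre of a 640 x 480 sensor.
F_PX, BASELINE_M, CX, CY = 800.0, 0.125, 320.0, 240.0

depth = depth_from_disparity(40.0, F_PX, BASELINE_M)
point = back_project(420.0, 240.0, depth, F_PX, CX, CY)
```

Because each event carries its own timestamp, a calculation like this could in principle be run per event rather than per frame, which is consistent with the high refresh rates quoted above.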

Industrial applications of event vision

Given the inherent advantages of neuromorphic vision, as well as progress in peripherals (such as neuromorphic chips and lighting systems) and algorithms, we can expect the deployment of neuromorphic vision systems to continue, especially as systems become increasingly cost-effective.

Event vision can trace particles or monitor vibrations with low latency, low energy consumption, and relatively low amounts of data

We have mentioned some of the applications of event cameras here at IMVE before, from helping restore people’s vision to tracking and managing space debris. But in the near future perhaps the biggest impact will be at an industrial level.

From tracing particles or quality control to monitoring vibrations, all with low latency, low energy consumption, and relatively low amounts of data that favour edge computing, event vision is promising to become a mainstay in many industrial processes. Lowering costs through scaling production and better sensor design is opening even more doors.

Smartphones are one field where event cameras may make an unexpected entrance, but Verre says this is just the tip of the iceberg. He is looking forward to a paradigm shift and is most excited about all the applications that will soon pop up for event cameras – some of which we probably cannot yet envision.

“I see these technologies and new tech sensing modalities as a new paradigm that will create a new standard in the market. And in serving many, many applications, so we will see more event-based cameras all around us. This is so exciting.”

Lead image credit: Vector Tradition/Shutterstock.com