The Rosetta Philae lander successfully touched down on the comet 67P/Churyumov-Gerasimenko on 12 November 2014 in the climax of a 10-year journey. Even on its descent the Rosetta mission had begun sending back iconic images of the comet, the probe, and the orbiter from which it was released.

Ambitious space projects are now a possibility and they are becoming more common. In June 2010, the asteroid explorer Hayabusa completed its seven-year, 200 million-kilometre journey to the asteroid Itokawa, and returned to Earth. The Hayabusa was fitted with an optical sensor designed and manufactured by Hamamatsu Photonics, which was used to observe the surface condition of the asteroid.

Space exploration is delving deeper into the void, but back home on Earth amateur astronomers are providing mass coverage of our skies and often spotting astronomical events that would otherwise be missed by more dedicated professional observatories. The imaging systems used for ambitious projects such as the Rosetta mission must be able to cope with the extreme conditions found in space, while cameras for mass survey systems on the other hand must provide high enough resolution while staying affordable.

E2v manufactured five sensors for the Rosetta mission, out of roughly 20 instruments in total. Paul Jerram, head of engineering sensors at e2v, said: ‘All the visible image sensors are made by e2v, two were made in Chelmsford, and the other three were made in Grenoble.’

To survive the harsh environment of space, systems designers have to consider a number of potentially harmful phenomena. Jerram outlined the main issues: ‘There are all sorts of radiation in space, from gamma rays to protons to x-rays, and also a type of radiation known as heavy ions. There is quite a lot of radiation that can cause concern so you have to ensure that whatever you have designed is radiation hard and this requires a lot of testing. You have to think a bit about shock and vibration – particularly during the launch, as this is quite a violent period. However, most image sensors are mechanically robust enough to deal with this.’

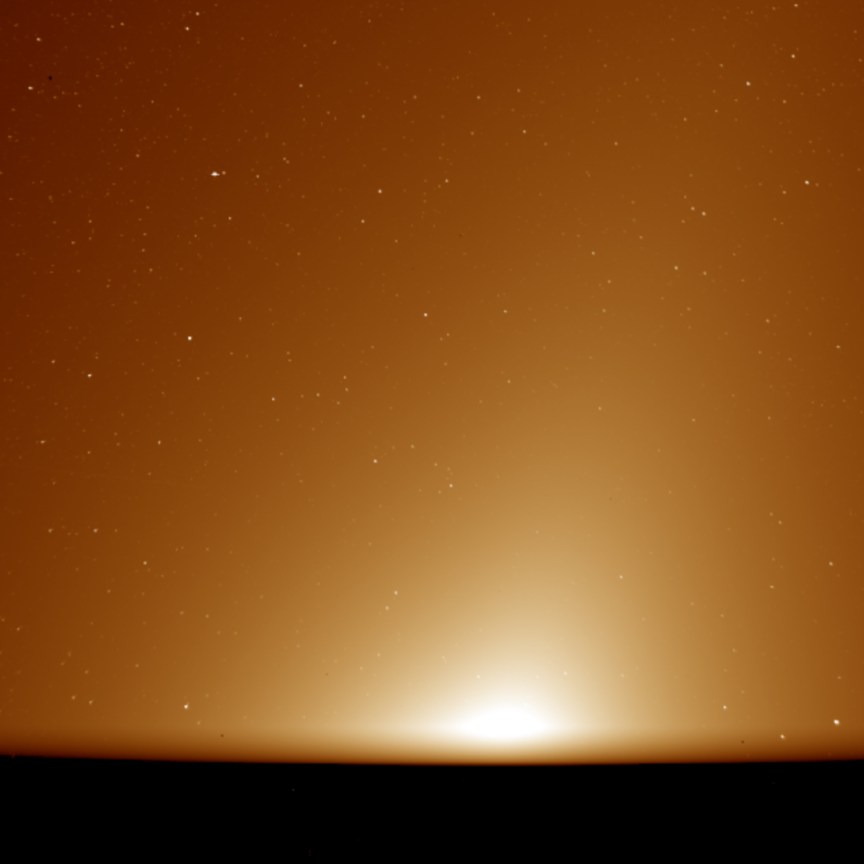

Jerram explained that temperature extremes also need to be considered. Rosetta is beyond the orbit of Jupiter and it’s very cold. The sensor has to be able to survive these low temperatures – deep space missions tend to be at -100°C for extended periods.

One other problem is ice potentially building up on the sensor or other instruments. Jerram explained that, even though space is a vacuum and ice shouldn’t be an issue, it can happen because of the big differences in temperature, even from one side of an instrument to the other. He elaborated: ‘Some things that sit in the sunlight can get quite hot and give out gases, whereas those parts out of the sunlight get very cold. If there is any residual water vapour it can condense on the cold parts. So actually, rather perversely, ice build-up can be an issue.’

To overcome this, the materials used have to be strictly regulated and specifications are in place to decide how much outgassing is allowed from a component. As the sensors e2v sends out are made of silicon, this is not too much of an issue. However, Jerram said that adhesives used to stick the sensor to its packaging can be a particular problem and that specific glues are chosen for their low outgassing properties.

Every kilo sent into space increases the cost of the mission. On Philae, the sensors and the packaging sent were quite small, so the overall effect on the system wasn’t too noticeable. For Gaia, another ESA project e2v worked on, the situation was different: the focal plane measured one metre by half a metre and held 106 sensors. Here, the packaging is very important, and a specialised material called silicon carbide was used, which is lightweight and has good thermal conductivity.

He said: ‘If you are flying any sort of imager into space it has to have a high resolution, but it costs you a fortune to put a lens on the system and it can’t be very big. You have to make extremely sure that every single little particle of light you gather is detected at the output. We effectively have to have 100 per cent detection efficiency and a wide spectral band. They are more optical instruments than just imagers.’

In order to satisfy the required criteria, the budget for these space projects is of a different order to more terrestrial applications. Jerram observed: ‘In total, our business here has around 300 people that mostly do space missions; we are approaching 200 missions in total, and each mission is typically in the order of millions [of pounds].’

However, it is not just the budget that is bigger. The time span that these projects work on is far greater than most industrial applications. Jerram said: ‘Rosetta is an extreme example; a journey time of 10 years before it starts being used is very unusual. For something like the Hubble telescope or the solar observation systems that we provide, they tend to get launched and within three months they are up and running.’

However, Jerram said that for a typical programme, even for a relatively small mission, it can take around nine years to go from system design to launch, and as the complexity of the system goes up the time increases.

‘Once you have started you stay on the same path, even if there are technological advancements you don’t really change the initial design,’ he said. ‘By the time it is sent up, the technology might have advanced. However, the customers are, and have to be, very conservative regarding the technology. They will only fly something that you have proven to a very high degree and you have to have done a lot of testing to do this. It takes a long time to introduce new technologies.’

Spotting a supernova

Not all astronomy applications are as expensive as the Rosetta mission, and many are carried out much closer to home. Ohio State University researcher Kris Stanek leads a team that has created an alarm system for supernovae and other bright stellar events by comparing multiple images of as much of the sky as possible. His team’s All-Sky Automated Survey for Supernovae (ASAS-SN) project is now responsible for spotting half of the bright supernovae recorded since it began operation.

Stanek said: ‘We want to be able to observe the entire sky all of the time. Spotting supernovae is the main driving force but basically, we don’t pick spots to observe in the sky.’ The team initially collects data from an area and creates a reference frame. By repeatedly rescanning each area and comparing the images, the team can detect any bright stellar events that occur. ‘We digitally subtract the two images and this will flag up any changes in the scene. Is there new light where there wasn’t before? Is there less light? This is what we are looking for.’
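The subtraction step can be illustrated with a minimal sketch. It assumes the two frames are already aligned and matched in brightness, which a real pipeline would have to do first. The function name `flag_changes`, the threshold and the toy pixel values are all hypothetical and are not taken from the ASAS-SN software.

```python
def flag_changes(reference, new, threshold):
    """Return (row, col, delta) for pixels whose brightness changed by more than threshold.

    Assumes both frames are aligned, equally sized 2D lists of pixel counts.
    """
    changes = []
    for r, (ref_row, new_row) in enumerate(zip(reference, new)):
        for c, (ref_px, new_px) in enumerate(zip(ref_row, new_row)):
            delta = new_px - ref_px  # positive: new light; negative: less light
            if abs(delta) > threshold:
                changes.append((r, c, delta))
    return changes


# Toy 3x3 frames (hypothetical counts): one pixel brightens, one fades.
reference = [[10, 11, 10], [12, 50, 11], [10, 10, 40]]
new = [[11, 10, 10], [12, 95, 12], [10, 9, 15]]

changes = flag_changes(reference, new, threshold=20)
print(changes)  # [(1, 1, 45), (2, 2, -25)]
```

Small fluctuations of a count or two fall below the threshold and are ignored, so only the pixel that brightened and the pixel that faded are reported.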

The team has taken more than 400,000 images to this point; 300 pictures are taken per camera per night and each unit can cover 2,500 square degrees per night. The aim in the future is to use 16 units to cover 40,000 square degrees, which translates as the entire sky.
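As a rough check on these figures, the short calculation below compares the planned nightly coverage with the area of the whole sky. It is a back-of-the-envelope sketch that assumes no overlap between units and that every unit reaches its full nightly coverage; the variable names are illustrative.

```python
import math

# Full sky in square degrees: 4*pi steradians times (180/pi)^2 square degrees per steradian.
FULL_SKY_SQ_DEG = 4 * math.pi * (180 / math.pi) ** 2  # about 41,253

units_planned = 16            # from the article
sq_deg_per_unit_night = 2500  # from the article

nightly_coverage = units_planned * sq_deg_per_unit_night
fraction_of_sky = nightly_coverage / FULL_SKY_SQ_DEG

print(f"{nightly_coverage} sq deg per night, {fraction_of_sky:.0%} of the full sky")
```

Under those assumptions, 16 units would cover 40,000 square degrees a night, or about 97 per cent of the roughly 41,253 square degrees that make up the full sky.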

The team takes a 90-second exposure, moves the telescope and then takes another 90-second exposure. To keep up this cadence, the cameras need a fast readout, which takes less than two seconds, and a high quantum efficiency of around 80 per cent. The sensor also has to be cooled, according to Stanek, to give low dark counts and reliable imaging. Stanek uses cameras from Finger Lakes Instrumentation, distributed by Multipix Imaging.
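The value of a fast readout shows up in a simple time budget. The sketch below uses the article’s figures of 90-second exposures, a readout of at most two seconds and 300 images per camera per night; it ignores the time spent moving the telescope, which the article does not quantify, so the result is only indicative.

```python
EXPOSURE_S = 90         # from the article
READOUT_S = 2           # upper bound from the article ("less than two seconds")
IMAGES_PER_NIGHT = 300  # per camera, from the article

cycle_s = EXPOSURE_S + READOUT_S                 # one exposure plus its readout
night_hours = IMAGES_PER_NIGHT * cycle_s / 3600  # observing time needed per night
shutter_open_fraction = EXPOSURE_S / cycle_s     # share of that time spent collecting light

print(f"{night_hours:.1f} hours per night, shutter open {shutter_open_fraction:.0%} of the time")
```

With these numbers, 300 images take about 7.7 hours, and the shutter is open for about 98 per cent of that time. A slower readout would eat directly into the number of images that fit into a night.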

The software used for the project filters the two million images to look for activity in a certain area. It can reduce the number of images to a few hundred, which the team examines, and of these maybe a handful are actual events.
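The article does not describe the filtering rules themselves, so the sketch below is purely illustrative: the `Candidate` fields, the thresholds and the sample values are all hypothetical. It shows the general idea of a few simple rules cutting a long list of flagged changes down to a shortlist for human review.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    field_id: str              # hypothetical label for the patch of sky
    significance: float        # brightness change in units of the noise level
    epochs_detected: int       # how many separate exposures show the change
    near_known_variable: bool  # True if a catalogued variable star sits at this position


def passes_rules(c, min_significance=5.0, min_epochs=2):
    """Apply deliberately simple, hypothetical rules; a person reviews whatever passes."""
    if c.significance < min_significance:
        return False  # too faint to distinguish from noise
    if c.epochs_detected < min_epochs:
        return False  # a change seen in only one frame may be an artefact
    if c.near_known_variable:
        return False  # already catalogued, so not a new event
    return True


candidates = [
    Candidate("F001", 3.2, 1, False),
    Candidate("F002", 12.5, 3, False),
    Candidate("F003", 8.0, 2, True),
    Candidate("F004", 6.1, 1, False),
    Candidate("F005", 7.4, 2, False),
]

shortlist = [c for c in candidates if passes_rules(c)]
print([c.field_id for c in shortlist])  # ['F002', 'F005']
```

Keeping the rules few and loose means more candidates reach the team, at the cost of more manual checking.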

Stanek commented: ‘At the moment it is still quite human labour-intensive, but as we take more data we can introduce new filters and rules for the software to follow. We are starting to apply machine learning which means eventually we will only have to look at the most probable cases.

‘However, at least in the beginning, we do not want to be too clever. By introducing too many rules too early on, we may end up missing something. Some of the events we have observed are very subtle; we needed to learn how to go through the process before we can tell the computers to do it successfully.’

As a result, in the last six months the team has found half of the observed bright supernovae. Stanek stated: ‘Since we don’t have a list of areas to observe, we are seeing supernovae in galaxies that not many other people have planned to look at.’

There have been some unexpected finds for the ASAS-SN team. Last year the team observed an M dwarf – a very common type of low-mass star – produce a flare in which the star became 4,000 times brighter. ‘This was the biggest flare ever seen and it happened within the first few months of our operation,’ Stanek said.

In January, the team observed what is known as a tidal disruption event, in which a galaxy’s central black hole tears apart and swallows a star. ‘A handful of these have been seen before, but we managed to capture one just a few months into our operation. It was also the closest one yet. This was very encouraging because it showed that observing the entire sky is already producing more than we expected.

‘When you look at our images, the public might be a little disappointed; what you see is a bunch of pixels and one gets a bit brighter. Our system notices the change and then lets us know that we should take a closer look at the region. After the system alerts us to an area, we use much bigger telescopes to investigate. So this is a very valuable thing; it’s essentially a recon mission.’

The team also observed a very bright, 11th-magnitude supernova. Amateur astronomers could focus in on the event and make their own observations because it was so easy to see. ‘We are the feeder to a great number of people, telling them where to look,’ Stanek added.

Meteorites hitting the moon

The use of amateur astronomers to help collect data is common, and projects such as ASAS-SN put as much information into the public domain as possible. Thierry Legault is one such amateur. He has had asteroids named after him, his images have been published in national and international newspapers, and he has received the Marius Jacquemetton award for photographic works from the Société Astronomique de France.

He explained the relationship between amateurs and professionals: ‘Amateurs offer time and numbers. Professional means are much more powerful, but they are very few and can’t satisfy all astrophysicists’ demands or needs.’

A good example is lunar impact surveys, observations of meteorites hitting the surface of the moon. Telescopes for viewing these don’t need to be large, but the events are rare, so surveying the moon continuously is necessary to record the number of impacts taking place.

Legault has taken a variety of different images, including the first and only amateur photo of a spacewalk. He explained: ‘I captured it with the same technique as planetary imaging – in video, at a focal length about seven metres – but with a specially modified motorised mount and software that is able to automatically track satellites at high speed. It’s almost unique in the world and needed two years of development and testing.’

He explained that techniques are very different between long-exposure for deep-sky and multi-frame video acquisition for the planets, ‘as different as photography of lions in Africa and sharks in the Pacific’. They differ in telescopes, focal lengths, cameras and also processing techniques.

To capture the images, Legault uses several different cameras: Canon and Sony DSLR for nightscapes, lunar quarters, and deep-sky images; astronomical monochrome video cameras for the planets, the Sun and lunar close-ups; and a monochrome CCD camera with filter wheel for deep-sky objects.

Legault said: ‘Amateurs use safe solar filters, some of them are cheap and others are much more expensive, ranging from €1,000 to several thousand euros, but show the solar prominences and flares.’

One of Legault’s most recognisable images is of the Atlantis shuttle and the International Space Station passing across the Sun. Legault said: ‘Capturing the image in front of the Sun is easier than at night; you have to calculate the place and time of visibility, point the camera at the Sun and wait for the passage. This kind of event lasts less than one second, so with a DSLR you need to be very reactive and have a very accurate clock. I used a radio-synchronised watch for the ISS pass, but now I use a specially made GPS control box that triggers the camera automatically at a chosen time, because GPS modules are able to output an extremely precise time signal.’

Both Stanek and Legault said that they needed good cameras that were affordable. Stanek said: ‘It’s very hard to get funding for science in the USA at the moment. We were given the initial funding from the National Science Foundation to start the project, but we just can’t get any more money. This is very frustrating as it seems that the system is working great.’

However, the team at Ohio is now collecting data on half of the observed supernovae, which Stanek hopes will help with the funding. He added: ‘One of these events we detected has been picked up by amateur astronomers, who in fact have bigger telescopes than we do. They have now been observing it for four months and there are 23,000 observations of a cataclysmic variable, and again, we just told them there is something bright in the sky and they take over from there.’