The global retail trade has reportedly experienced a turbulent time over the past few years, if you believe the mainstream news outlets’ regular stories on the number of high street shops closing.

The rise of internet shopping is often cited as the reason for the demise of bricks-and-mortar retailers. However, with the help of machine vision, smarter physical stores are now enhancing the consumer experience in much the same way as their digital counterparts.

Flir Systems has worked with large grocers and retailers in Western Europe, the UK and North America on front-end service management systems, explained Adam Agress, global business development director at the firm’s integrated imaging solutions division. Retailers are trying to understand employee interaction, marketing analysis and the effectiveness of product placement, among other factors, Agress said.

One area in which machine vision has proven particularly useful for customer service is cutting waiting times at the checkout. Flir’s solution is a stereo camera: the company acquired Brickstream’s 2D and 3D stereovision people-tracking hardware when it bought Point Grey in 2016. The cameras report how many people are standing in a queue, how long they have been waiting and whether a member of staff is present at the till.
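The three queue metrics described above can be illustrated with a short sketch. It assumes a hypothetical tracker output: one record per person in the checkout zone, holding the time that person joined the queue and a flag saying whether they were classified as staff. The `Track` type and `queue_metrics` function are illustrative names only, not part of Flir's or Brickstream's software.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Track:
    """One person currently tracked at a checkout lane (hypothetical format)."""
    track_id: int
    entered_queue_s: float  # timestamp in seconds when the person joined the queue
    is_staff: bool          # assumed to come from an employee-filtering classifier


def queue_metrics(tracks: list[Track], now_s: float) -> dict:
    """Summarise one lane at time now_s: queue length, waiting times, staff presence."""
    customers = [t for t in tracks if not t.is_staff]
    waits = [now_s - t.entered_queue_s for t in customers]
    return {
        "queue_length": len(customers),
        "mean_wait_s": mean(waits) if waits else 0.0,
        "max_wait_s": max(waits, default=0.0),
        "staff_present": any(t.is_staff for t in tracks),
    }
```

For example, two customers who joined at 100 s and 130 s plus one staff member, evaluated at 160 s, give a queue length of 2, a mean wait of 45 s, a longest wait of 60 s and `staff_present` set to `True`. Staff are excluded from the queue count so that an employee standing at the till does not inflate demand.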

These metrics can be calculated in real time to show how ‘healthy’ the front end of the shop is. Predictive analysis based on the store’s own historic trends is also possible, indicating whether more customers are likely to enter the shop and make purchases at certain times of the day.
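A minimal sketch of both ideas follows, under stated assumptions. The "health" rule uses illustrative thresholds (at most three customers per open till, and no wait longer than two minutes) that are hypothetical, not figures from Flir. The forecast is the simplest possible trend model, averaging past customer counts for each weekday-and-hour slot; real predictive analysis may well use something more sophisticated.

```python
from collections import defaultdict


def front_end_health(queue_length: int, open_tills: int, max_wait_s: float,
                     max_per_till: float = 3.0, target_wait_s: float = 120.0) -> str:
    """Label the front end 'healthy' or 'under pressure' (thresholds are illustrative)."""
    if open_tills == 0:
        # Nobody is serving: any waiting customer means the front end is under pressure
        return "under pressure" if queue_length > 0 else "healthy"
    if queue_length / open_tills > max_per_till or max_wait_s > target_wait_s:
        return "under pressure"
    return "healthy"


def hourly_forecast(history: list[tuple[int, int, int]]) -> dict[tuple[int, int], float]:
    """Average the customers counted in each (weekday, hour) slot over past weeks.

    history holds (weekday 0-6 with Monday = 0, hour 0-23, customers counted in that hour).
    """
    slots: dict[tuple[int, int], list[int]] = defaultdict(list)
    for weekday, hour, count in history:
        slots[(weekday, hour)].append(count)
    return {slot: sum(counts) / len(counts) for slot, counts in slots.items()}
```

With two past Saturdays at 5pm showing 120 and 140 customers, `hourly_forecast` predicts 130 for the next Saturday at 5pm, which a store could compare against the tills it plans to open.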

Machine vision systems can help when designing the layout of a store. Credit: Flir

Over time, the shop can identify trends, and these can feed into the store’s own workforce management system, a separate piece of software that allocates the number of labour hours needed at the front end.

‘These two systems,’ said Agress – the retailer’s workforce management system along with the machine vision system – ‘work in tandem to gather the actual demand, which is what we’re doing. The information can then be pumped into the workforce management system, so that it becomes smarter, more intelligent and more customised to that particular store.’
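How a workforce management system turns measured demand into labour hours is not described here. As one hedged illustration, a common rule of thumb multiplies the arrival rate by the average service time to get the average number of busy tills (the offered load), then divides by a target utilisation. The function names, the 80 per cent target and the "always keep one till open" rule are all assumptions made for this sketch.

```python
import math


def tills_needed(arrivals_per_hour: float, mean_service_s: float,
                 target_utilisation: float = 0.8) -> int:
    """Rule-of-thumb staffing: offered load divided by a target utilisation."""
    offered_load = arrivals_per_hour * mean_service_s / 3600.0  # average number of busy tills
    # Assumption: at least one till stays open whenever the store is open
    return max(1, math.ceil(offered_load / target_utilisation))


def labour_hours(hourly_demand: dict[int, float], mean_service_s: float) -> int:
    """Sum the till-hours needed over a day, given measured or forecast arrivals per hour."""
    return sum(tills_needed(arrivals, mean_service_s) for arrivals in hourly_demand.values())
```

With a 90-second average service time, 120 arrivals in an hour need four tills and 30 arrivals need one, so those two hours together call for five till-hours. Feeding camera-measured arrivals into this kind of calculation, rather than transaction counts alone, is what makes the schedule specific to a store.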

Self-service

The system can also be applied to self-service checkouts.

‘How our solution works with those types of platforms,’ Agress said, ‘is very similar. There is information you can get directly from the self-checkout terminal itself, so it will know how many items it has scanned and what the average service times are for people standing in front of it. What it doesn’t know is demand. It doesn’t know whether all four self-checkouts are in use for an hour straight; it doesn’t know if there were 10 people or two people waiting in line to use them. As a result, our solution can measure the actual demand for those self-checkouts, so how many people are standing in a pack waiting for the next one to open up. That information can be fed back to the retail operations team to help them figure out if they have the right number of self-checkouts available.’
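The gap Agress describes, where the terminals know they are busy but not how many people are waiting, can be shown by combining the two data sources. This sketch assumes a hypothetical stream of periodic samples, each recording how many terminals report being in use and how many people the overhead camera counts in the waiting area. The `Sample` type and the metric names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One periodic observation of the self-checkout area (hypothetical format)."""
    terminals_in_use: int  # reported by the self-checkout terminals themselves
    people_waiting: int    # counted by the overhead people-tracking camera


def self_checkout_demand(samples: list[Sample], n_terminals: int) -> dict:
    """Combine terminal status with camera counts to expose demand the terminals cannot see."""
    if not samples:
        return {"all_busy_fraction": 0.0, "mean_waiting": 0.0, "peak_waiting": 0}
    all_busy = [s for s in samples if s.terminals_in_use >= n_terminals]
    return {
        # Share of samples in which every terminal was occupied
        "all_busy_fraction": len(all_busy) / len(samples),
        # Average size of the waiting group across all samples
        "mean_waiting": sum(s.people_waiting for s in samples) / len(samples),
        "peak_waiting": max(s.people_waiting for s in samples),
    }
```

In the test data, three of four samples show all four terminals in use, which terminal logs alone would report identically; only the camera counts reveal that the waiting group ranged from nobody to ten people.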

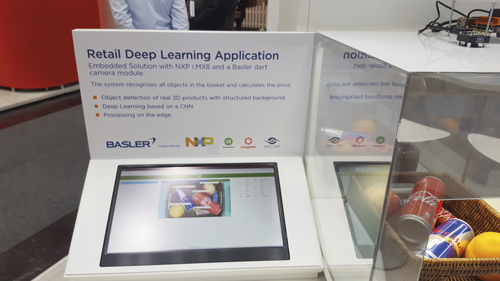

Self-service checkouts can present their own set of problems for retailers, according to Gerrit Fischer, head of product market management at Basler. At this year’s Embedded World trade show in Nuremberg, Basler, in partnership with NXP Semiconductors, demonstrated an automated retail checkout terminal equipped with AI software that identifies and classifies the products in a shopping basket and displays their prices.

‘Among systems that will help to make the shopping experience easier are self-checkout systems,’ said Fischer. ‘Today those usually use 2D barcode scanners to detect and record products on conveyor belts. More recent systems use traditional object classification methods – with features such as colour or type – to ensure precise identification of features. These methods are not robust when in uncontrolled environments, where different light and geometry conditions occur.’

Fischer said this can be counteracted by adding AI to the system. ‘With the latest AI technology, it is possible to detect products without barcodes... and scale up the product portfolio easily on-the-fly,’ he said. ‘The challenge when building such a system is to combine the best of AI and lean embedded vision systems.’ Basler designed a proof-of-concept to show the benefits and possibilities of this technology and demonstrated it at Embedded World.

The Brickstream people counting sensor has an employee filtering solution. Credit: Flir

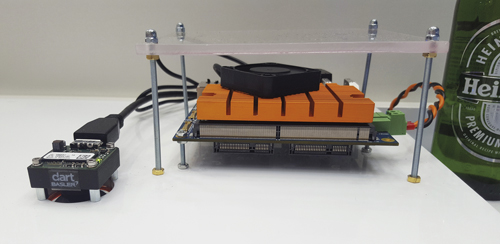

With the new technology, shoppers select what to put in their baskets, and a trained neural network then detects the products from a video stream, in a similar way to facial recognition. Finally, the total price is displayed. The system is based on Basler’s embedded vision kit, which consists of its Dart Bcon for MIPI camera module with On Semiconductor’s AR1335 sensor; NXP’s i.MX 8QuadMax system-on-chip processor; an i.MX 8QuadMax-based processing board; and a Smarc 2.0 carrier board.
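The step from per-frame detections to a displayed total can be sketched as follows. Basler and Irida Labs have not published how their system aggregates detections, so everything here is an assumption: the detector is taken to emit `(label, confidence)` pairs per frame, the price list and labels are hypothetical, and because the same item appears in many consecutive frames, the sketch keeps the largest count of each product seen in any single frame instead of summing across frames.

```python
from collections import Counter

# Hypothetical price list in pence, keyed by the class labels the network was trained on
PRICES = {"apple": 35, "banana": 20, "milk_1l": 95}


def basket_from_frames(frames: list[list[tuple[str, float]]],
                       min_conf: float = 0.6) -> Counter:
    """Merge per-frame (label, confidence) detections into a single basket.

    Detections below min_conf are ignored. For each label, the basket keeps the
    largest count seen in any one frame, so an item visible in many frames is
    charged once.
    """
    basket: Counter = Counter()
    for detections in frames:
        per_frame = Counter(label for label, conf in detections if conf >= min_conf)
        for label, count in per_frame.items():
            basket[label] = max(basket[label], count)
    return basket


def total_price(basket: Counter, prices: dict[str, int] = PRICES) -> int:
    """Sum price x quantity; raises KeyError for a label missing from the price list."""
    return sum(prices[label] * qty for label, qty in basket.items())
```

Three frames showing two apples, a low-confidence then a confident carton of milk, and a banana produce a basket of two apples, one milk and one banana, totalling 185p. Adding a new product under this scheme means retraining the network on a new class and adding one price entry.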

On the software side there are two parts: the system software and the application software. Fischer said: ‘To make such an embedded system run smoothly, different elements of the system software must be connected to create a coherent system.’ Basler drew on its experience in different software fields to connect elements such as board support packages and NXP’s Yocto kernel, and to create a high-performance, lean embedded system.

The application software is based on a custom convolutional neural network (CNN) developed by Irida Labs. ‘The model used is based on the latest deep learning and edge processing techniques,’ said Fischer, ‘to provide fast and robust response in a seamless scenario. The training of the CNN takes place on the host side but the inference takes place on the edge.’

Scaling up

The solution is designed to be scalable, so that new products can be added easily, and its small form factor allows for different integration options. For retailers, Fischer said, the benefits of such a system include lower labour costs and an improved customer experience, with instant checkouts, minimised queues and 100 per cent checkout capacity at all times, even if the shop is open 24 hours a day.

Basler demonstrated an automated retail checkout terminal equipped with AI software at the Embedded World trade fair. Credit: Basler

Another area in which machine vision is beneficial to retailers is in monitoring flow through the store. Agress explained: ‘Look at the type of data that an online e-commerce website that sells retail goods has – it has so much more data about their customer journey than the retailer has.’ Consider shopping with Amazon, which recommends products you might like based on your shopping and browsing history. It analyses how long you spend on a page, which path you take to reach the shopping cart and whether you abandon the cart at checkout.

This is the type of information that would be valuable to a bricks-and-mortar retailer. Agress believes that, to compete, physical retailers need to understand their customers’ paths through the store and their buying experience. Flir uses a stereo-based people-tracking camera to generate this type of data. ‘A lot of times we’ll see customers [retailers] do small area tracking, or in some cases full store tracking. They’re hoping to understand what the flow is, and whether the store layout is correct,’ Agress said.
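One basic building block of customer-journey analysis is how long each shopper spends in each department. This sketch assumes a hypothetical track format of timestamped floor positions `(t, x, y)` in seconds and metres, and a hypothetical floor plan in which each department is an axis-aligned rectangle. It is an illustration, not Flir's processing pipeline.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical floor plan: department -> (x_min, y_min, x_max, y_max) in metres
ZONES = {
    "phones": (0.0, 0.0, 10.0, 8.0),
    "laptops": (10.0, 0.0, 20.0, 8.0),
}


def zone_at(x: float, y: float, zones: dict = ZONES) -> Optional[str]:
    """Return the department containing point (x, y), or None if outside all zones."""
    for name, (x0, y0, x1, y1) in zones.items():
        if x0 <= x < x1 and y0 <= y < y1:
            return name
    return None


def dwell_times(track: list[tuple[float, float, float]], zones: dict = ZONES) -> dict[str, float]:
    """Seconds spent in each department along one shopper's (t, x, y) track.

    Each interval between consecutive points is credited to the zone of its start point.
    """
    totals: dict[str, float] = defaultdict(float)
    for (t0, x0, y0), (t1, _, _) in zip(track, track[1:]):
        zone = zone_at(x0, y0, zones)
        if zone is not None:
            totals[zone] += t1 - t0
    return dict(totals)
```

A shopper tracked for 60 seconds among the phones and then 50 seconds among the laptops before leaving both zones yields `{"phones": 60.0, "laptops": 50.0}`; summed over many shoppers, these figures become per-department browse times.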

Flir partnered with a large electronics retailer with branches in the US and Canada to help it redesign its stores. Agress explained: ‘Every store always had the same layout. They did this for familiarity, so that if you knew where to go in your local store, you could easily find the products in another store.’

Eventually, the retailer wanted to make a change but didn’t know what to change to, so it came up with four different layouts and invested in full-store tracking in four stores: a control store with the standard layout, and three stores each using one of the alternative versions. ‘In conjunction with the type of data we provided, which were our qualitative metrics such as browse time in each department,’ said Agress, ‘this data was then merged with their transactional data to understand what the actual sales were in these stores. Then, looking at flow maps, which show where the hot spots are in the store, they were able to understand where people were gathering, how the flow of traffic moved throughout the store and how the customer journey looks, visually.’
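The two analyses in this trial, hot-spot maps and browse time merged with sales, can each be sketched in a few lines. The grid-cell heat map is a standard way to build such a map from tracked positions. The "sales per browse minute" ratio is a hypothetical metric chosen for illustration; the article does not say which comparison the retailer actually used. Computing it for every department in each store would let the three alternative layouts be compared against the control store.

```python
from collections import Counter


def heatmap(points: list[tuple[float, float]], cell_m: float = 1.0) -> Counter:
    """Count tracked floor positions per grid cell; the busiest cells are the hot spots."""
    return Counter((int(x // cell_m), int(y // cell_m)) for x, y in points)


def sales_per_browse_minute(browse_s: dict[str, float],
                            sales: dict[str, float]) -> dict[str, float]:
    """Join camera browse time with till sales, department by department.

    Departments with no recorded browse time are skipped to avoid dividing by zero.
    """
    return {
        dept: sales.get(dept, 0.0) / (secs / 60.0)
        for dept, secs in browse_s.items()
        if secs > 0
    }
```

For instance, `heatmap(...).most_common(5)` lists the five busiest one-metre cells, and a department with 600 seconds of browsing and 1,200 in sales scores 120 per browse minute.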

Basler's proof-of-concept was designed to show the benefits and possibilities of this new technology. Credit: Basler

The retailer then used this data to decide on the most effective layout to use across its hundreds of stores.

‘I think that’s another good use case of where you’re using machine vision,’ Agress said. ‘You’re using these types of analysis to understand the flow of people through the store, much like Amazon would increase and optimise how to design its website by looking at similar data. So, the IT and operational organisations in bricks-and-mortar retailers can now have similar datasets to the online e-commerce world.’