The recent Mobile World Congress (MWC), the annual showcase for the newest model smartphones and other mobile devices, reinforced the most significant trend in how this massive consumer product sector is evolving.

Driven by the TikTok/Snapchat/Instagram generation – enabled by more AI-enriched capabilities – and shaped by generational shifts in how humans communicate, the camera has become the centrepiece of the modern mobile device.

Eye-catching industrial designs, bigger and brighter displays, and higher-bandwidth 5G connectivity all take a back seat in the relentless quest to capture, manipulate and share visual information more effectively.

Undeniably, the camera is the lead feature in every new flagship model introduction. It is clear that the prophecy that has been percolating for several years has taken hold in a very real way: “the camera is the new keyboard”.

All of which makes the pace and type of camera innovations in mobile devices even more critical. Yet the not-so-talked-about secret of the industry is that we have been relying on concepts that are more than 100 years old to drive improvements in each new generation of device. Yes, there have been steady and impressive advancements in adding multiple cameras and sensors to phones, as well as incrementally higher pixel counts to improve still picture clarity, improvements in low-light performance and HDR, better autofocus features, and computational photography to improve depth of field and colour reproduction. The industry has not stood still in this regard, and today’s newest models are getting closer to rivalling expensive traditional cameras in terms of quality and performance. But nagging concerns about the potential of smaller sensors and a reliance on software correction techniques still keep smartphone cameras from being in the same class as pro models.

Underlying this is the fact that most, if not all, enhancements rely on techniques that Eadweard Muybridge pioneered in the 1880s and that trace their roots back as far as Leonardo da Vinci’s camera obscura. Frame-based photography – a means to capture static images – is inextricably embedded in the camera mindset and continues to serve us well. But it was never meant to address some of the challenges introduced by today’s ultra-mobile use of photography, which demands new levels of capability to efficiently capture motion and operate in challenging lighting conditions. In frame-based image capture, an entire image (i.e. the light intensity at each pixel) is recorded at a pre-assigned interval, the inverse of which is known as the frame rate. While this works well in representing the ‘real world’ when displayed on a screen, recording the entire image at every time increment oversamples all the parts of the image that have not changed.

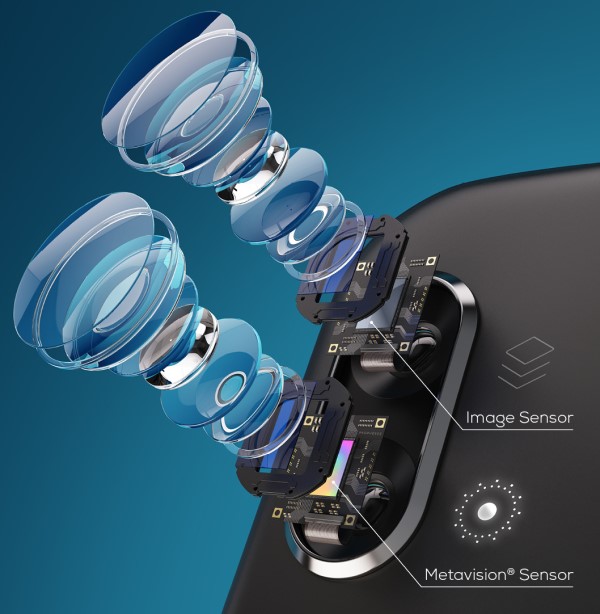

Prophesee’s Metavision sensor has been optimised for use with Qualcomm’s Snapdragon mobile platforms to enhance smartphone photography

In other words, because these traditional camera techniques capture everything going on in a scene all the time, they create far too much unnecessary data – up to 90% or more in many applications. This taxes computing resources and complicates data analysis. On top of that, the open-and-shut exposure cycle of frame-based cameras can produce motion blur and causes them to ‘miss’ things between frames.
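
As a rough illustration of that redundancy, the sketch below counts how many pixel samples are identical from one frame to the next in a toy scene. The function names (`unchanged_fraction`, `moving_dot_frames`) and the synthetic 10×10 scene are assumptions made for this example; they are not measurements from any real sensor.

```python
def unchanged_fraction(frames, tolerance=0):
    """Fraction of pixel samples that did not change between consecutive frames."""
    total = 0
    unchanged = 0
    for prev, curr in zip(frames, frames[1:]):
        for prev_row, curr_row in zip(prev, curr):
            for a, b in zip(prev_row, curr_row):
                total += 1
                if abs(a - b) <= tolerance:
                    unchanged += 1
    return unchanged / total if total else 0.0


def moving_dot_frames(width, height, steps):
    """Hypothetical scene: one bright dot moving along the top row, black elsewhere."""
    frames = []
    for i in range(steps):
        frame = [[0] * width for _ in range(height)]
        frame[0][i % width] = 255
        frames.append(frame)
    return frames


if __name__ == "__main__":
    frames = moving_dot_frames(width=10, height=10, steps=5)
    print(f"{unchanged_fraction(frames):.0%} of re-recorded samples were unchanged")
```

In this toy scene only two of the 100 pixels differ between consecutive frames, so a frame-based capture re-records 98% of its samples without learning anything new. Real scenes will vary widely.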

The fundamental nature of frame-based photography as a way to capture images therefore prevents it from being able to operate effectively in certain scenes and for specific needs in a way that represents how humans actually see the world. Therein lies a key to how the technology can evolve – the human eye samples changes at up to 1,000 times per second, but does not record the primarily static background at such high frequencies.

Bio-inspired event cameras emerge as a viable solution

Over the past several years, event cameras that leverage neuromorphic techniques to operate more like the human eye and brain have gained a strong foothold in machine vision applications in industrial automation, robotics, automotive and other areas – all applications where better performance in dynamic scenes, capturing fast-moving subjects and operating in low-light conditions are critical (Read ‘Why you will be seeing much more from event cameras’ in IMVE Feb/Mar 2023). Now, thanks to improvements in size, power and cost, event-based sensors have emerged as a viable option to complement frame-based methods in more consumer-facing products. These products, which include smartphones as well as wearables such as AR headsets, have a different set of constraints and feature needs.

A quick primer on event-based vision: Instead of capturing individual frames at a fixed rate, event cameras use intelligent pixels to detect changes in brightness at each individual pixel – which we call events – and process these as they occur. This means they can capture motion with a much higher temporal resolution and lower latency – and with less data processed (meaning less power consumption) than traditional cameras. It’s the way our eyes and brains – the most efficient vision sensing systems of them all – work to process scenes.
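
The idea can be sketched with a simplified software model. The code below is an illustrative assumption, not Prophesee's sensor design or API: each pixel remembers a reference log-brightness and emits an event when the brightness has moved away from it by at least a contrast `threshold`. The `Event` fields, the threshold value and the use of sampled snapshots (a real sensor's pixels react asynchronously) are all choices made for this example.

```python
import math
from typing import List, NamedTuple, Sequence


class Event(NamedTuple):
    t: float       # timestamp in seconds
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 = brighter, -1 = darker


def generate_events(
    snapshots: Sequence[Sequence[Sequence[float]]],
    timestamps: Sequence[float],
    threshold: float = 0.2,
) -> List[Event]:
    """Emit an event wherever log-brightness moved by at least `threshold`.

    `snapshots` holds strictly positive brightness values, one 2D grid per timestamp.
    """
    # Each pixel keeps its own reference level, initialised from the first snapshot.
    reference = [[math.log(v) for v in row] for row in snapshots[0]]
    events: List[Event] = []
    for grid, t in zip(snapshots[1:], timestamps[1:]):
        for y, row in enumerate(grid):
            for x, value in enumerate(row):
                diff = math.log(value) - reference[y][x]
                if abs(diff) >= threshold:
                    events.append(Event(t, x, y, 1 if diff > 0 else -1))
                    reference[y][x] = math.log(value)  # reset only this pixel
    return events


if __name__ == "__main__":
    scene = [
        [[100, 100], [100, 100]],
        [[100, 200], [100, 100]],  # pixel (x=1, y=0) gets brighter
        [[100, 100], [100, 100]],  # and then darker again
    ]
    for event in generate_events(scene, [0.000, 0.001, 0.002]):
        print(event)
```

Only the pixel that changed produces output: one brighter event followed by one darker event. The three static pixels generate no data at all, which is the source of the data and power savings described above.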

Event-based vision addresses a fundamental limitation of traditional camera techniques: how light is captured. In a conventional camera, the sensor must be exposed for a period of time to capture light, and the lower the light, the longer that exposure has to be. But the world does not stop when you take a picture, and any motion that occurs during the exposure produces blur that cannot be fully corrected today. Faster frame rates or shutter speeds, meanwhile, actually worsen low-light performance for conventional cameras. Software-based computational photography techniques have helped mitigate this problem, but artefacts still remain, and blurring occurs even in the most high-end cameras.
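
This trade-off can be put into rough numbers. To a first approximation, the length of a blur streak is the subject's apparent speed across the sensor multiplied by the exposure time, while the light collected scales with the exposure time itself. The function names and the example values below (a subject crossing the image at 1,500 pixels per second, exposures of 1/30 s and 1/1000 s) are hypothetical and chosen only for illustration.

```python
def blur_extent_px(speed_px_per_s: float, exposure_s: float) -> float:
    """Approximate length of the blur streak, in pixels."""
    return speed_px_per_s * exposure_s


def relative_light(exposure_s: float, reference_exposure_s: float) -> float:
    """Light collected relative to a reference exposure (same aperture and scene)."""
    return exposure_s / reference_exposure_s


if __name__ == "__main__":
    speed = 1500.0  # hypothetical apparent speed in pixels per second
    slow, fast = 1 / 30, 1 / 1000
    for exposure in (slow, fast):
        print(
            f"exposure {exposure:.4f} s: "
            f"blur about {blur_extent_px(speed, exposure):.1f} px, "
            f"light x{relative_light(exposure, slow):.2f}"
        )
```

Under these assumptions, shortening the exposure from 1/30 s to 1/1000 s shrinks the streak from about 50 pixels to about 1.5 pixels, but the sensor collects only around 3% of the light. That is why a faster shutter alone does not solve the problem in dim scenes.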

The practical result of applying this biological technique is the ability to capture fast-moving objects with much greater clarity and detail. And, importantly, event cameras don’t suffer from motion blur. By combining an event-based sensor with a frame-based system, effective deblurring can be achieved, making photography and video capture more precise and lifelike.
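
How events can help undo blur is easiest to see for a single pixel. The sketch below follows one model from the research literature, sometimes called the event-based double integral; it is shown as an assumption for illustration, and this article does not state which method any shipping product uses. The model treats a blurred pixel as the average of the true brightness over the exposure, with each event scaling the brightness by a fixed contrast step. The function names, the `contrast` value and the sample count are hypothetical.

```python
import math
from typing import List, Tuple

PixelEvent = Tuple[float, int]  # (timestamp in seconds, polarity +1 or -1)


def mean_gain(events: List[PixelEvent], start: float, end: float,
              contrast: float = 0.2, samples: int = 100) -> float:
    """Average brightness gain over the exposure, relative to its start.

    Every event up to time t multiplies the brightness by exp(contrast * polarity).
    """
    total = 0.0
    for k in range(samples):
        t = start + (k + 0.5) * (end - start) / samples
        accumulated = sum(p for (te, p) in events if start <= te <= t)
        total += math.exp(contrast * accumulated)
    return total / samples


def simulate_blurred_pixel(sharp: float, events: List[PixelEvent],
                           start: float, end: float) -> float:
    """Forward model: the frame camera reports the exposure-averaged brightness."""
    return sharp * mean_gain(events, start, end)


def deblur_pixel(blurred: float, events: List[PixelEvent],
                 start: float, end: float) -> float:
    """Estimate the sharp brightness at the start of the exposure."""
    return blurred / mean_gain(events, start, end)


if __name__ == "__main__":
    events = [(0.25, 1), (0.50, 1)]  # the pixel brightens twice during exposure
    blurred = simulate_blurred_pixel(100.0, events, 0.0, 1.0)
    recovered = deblur_pixel(blurred, events, 0.0, 1.0)
    print(f"blurred {blurred:.1f}, recovered {recovered:.1f}")
```

Because the recovery simply inverts the same model used to simulate the blur, it is exact here. On real data, sensor noise and an imperfectly known contrast step would leave residual error.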

Inherently, event cameras are well suited to power-sensitive and bandwidth-constrained technologies such as mobile phones. Because data is captured only when the scene changes, independent of a conventional camera’s frame rate setting, they can provide power savings and make more efficient use of bandwidth, which a mobile phone can use to actively monitor the scene. Event sensors are not meant to be a standalone alternative: they work in tandem with frame-based cameras, and their data can be combined with frame data to deblur images effectively.

MWC 2023 was a breakout moment for this trend, and the consumer market has taken notice. As Robert Triggs at Android Authority says: “Farewell, blurry pet photos, unusable sports action shots, and smudged low-light snaps.” He was referencing the fact that my company announced a relationship with Qualcomm to enable native compatibility of our event-based sensors and software with its widely used Snapdragon platform. This follows our ongoing partnership with Sony, the world’s leading supplier of CMOS sensors to the smartphone market, with whom we have collaborated to make the size and power of event sensors more suitable to mobile applications.

Having two such critical suppliers to the consumer market segment aligned around event-based vision as a pathway to improved mobile photography experiences is a significant step forward. While the immediate impact will first be seen in deblurring and better low-light performance, this new sensing modality opens the door to a wide range of new use cases in mobile devices and wearables.