Google’s driverless cars might be a vision of what we’ll all be driving (or not driving as the case may be) in the future – but, for now, the discerning car owner will have to make do with sensors that merely assist in driving rather than doing it all for them. Google’s cars use a laser range finder, as well as video cameras and radar sensors, to pilot the vehicle. The same laser range finding or time-of-flight (TOF) technology is being developed and tested by many auto manufacturers as one of the potential sensing techniques for driver assistance. Toyota’s Central R&D Labs in Japan, in particular, has developed a prototype TOF sensor with a 100m range that could be used for obstacle avoidance, pedestrian detection, and lane detection.

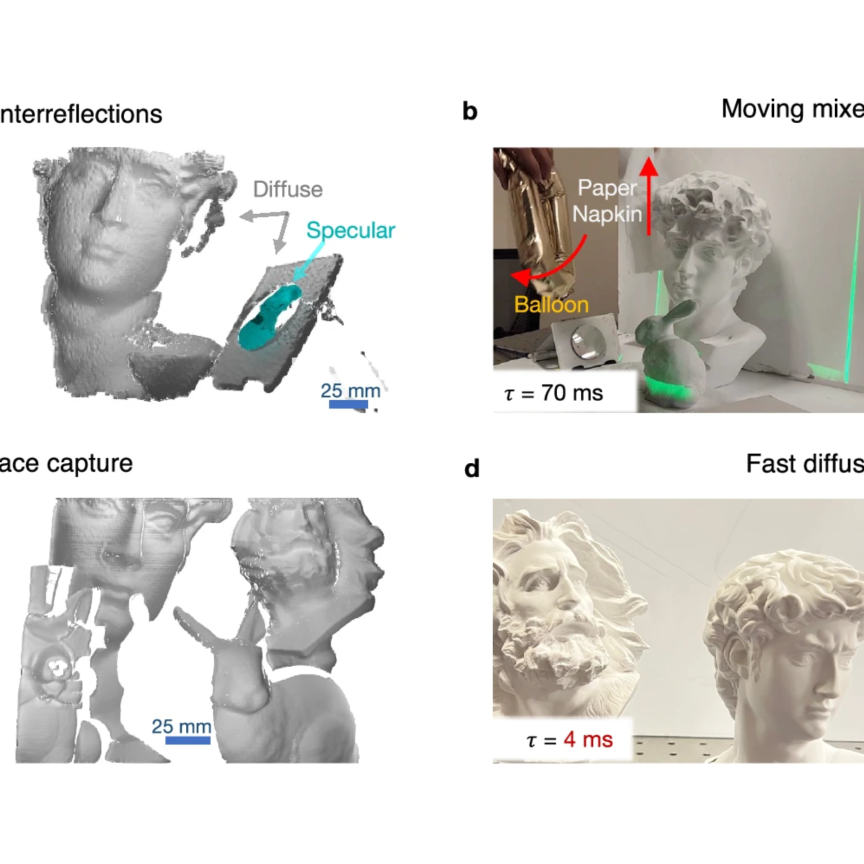

Time-of-flight is a 3D imaging technique that creates a depth map of a scene. It works by illuminating the scene, capturing the light reflected from objects, and measuring the round-trip time, the time-of-flight, which is proportional to the distance. In this way, each pixel provides a measurement of distance.
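Because the light travels to the object and back, the distance is half the round-trip path, that is, the speed of light multiplied by the measured time and divided by two. The minimal Python sketch below shows that conversion and how short the times involved are. It assumes propagation at the vacuum speed of light and ignores the small correction for air.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s (air correction ignored)

def distance_from_round_trip(t_s):
    """One-way distance (m): the light covers the path twice, out and back."""
    return C * t_s / 2.0

def round_trip_from_distance(d_m):
    """Round-trip time (s) for a target d_m metres away."""
    return 2.0 * d_m / C

# A target 10 m away returns light after roughly 66.7 ns.
print(round_trip_from_distance(10.0))
# Resolving 1 cm of depth means resolving roughly 66.7 ps of round-trip time.
print(round_trip_from_distance(0.01))
```

The second figure shows why centimetre-level precision, the scale Logan describes below, calls for timing measurements in the tens of picoseconds.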

These sensors won’t find their way into cars for another few years – Jochen Penne, director of business development at Pmd Technologies, suggests five years due to the length of the innovation cycle in the automotive sector – but there are TOF cameras already in use in industry, albeit to a limited extent compared to other 3D techniques like laser triangulation.

Pmd Technologies is a fabless IC company offering TOF depth-sensing technology. It addresses three main markets with its sensors: automotive, industrial, and consumer. It has been active in the industrial sector for the last seven years, and its partner Ifm Electronic supplies a 3D camera based on its sensor.

Within industry, there is a definite set of applications where time-of-flight is suitable, which basically covers locating large objects quickly. Calculating the dimensions of pallets, parcels and boxes in logistics is one area where the technology can be used, and there is scope for its use in robotic bin picking.

‘Time-of-flight is not a technique for ultra-high precision 3D imaging,’ notes Ritchie Logan, VP of business development at Scottish TOF company Odos Imaging, adding that applications requiring sub-millimetre precision over short distances are better solved using triangulation or structured light techniques. ‘The natural scale for time-of-flight imaging is over the range of one to 20 metres, with a precision of around 1cm,’ he says.

Odos Imaging’s TOF system has a resolution of 1.3 megapixels, which makes it well suited to industrial vision. Mark Williamson, director of corporate development at Stemmer Imaging, comments that one of the issues with TOF historically has been low resolution, with most cameras running at sub-VGA resolutions (200 x 300 pixels).

‘High resolution is important in 3D imaging, not just for the ability to see fine detail, but also because the effective 3D precision depends on both the image sensor resolution and the precision of the distance measurement,’ says Logan. ‘High resolution at the sensor corresponds to high-precision 3D.’
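Logan’s point can be illustrated with simple pinhole geometry: each pixel sees a patch of the scene whose width grows with distance and shrinks as the number of pixels across the field of view rises. The sketch below assumes an ideal pinhole camera with uniform pixel spacing. The 60° field of view and 5 m distance are illustrative; 160 pixels across matches the 160 x 120 sensors mentioned in this article, while 1,280 across is an assumed layout for a roughly 1.3-megapixel (1280 x 1024) sensor, not a published Odos specification.

```python
import math

def pixel_footprint(distance_m, fov_deg, pixels_across):
    """Width (m) of the scene patch seen by one pixel, for an ideal pinhole
    camera whose horizontal field of view fov_deg spans pixels_across pixels."""
    scene_width = 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)
    return scene_width / pixels_across

# Hypothetical comparison at 5 m with a 60 degree field of view.
for pixels in (160, 1280):
    mm = pixel_footprint(5.0, 60.0, pixels) * 1000.0
    print(f"{pixels:5d} pixels across -> {mm:.1f} mm per pixel")
```

At 5 m the 160-pixel sensor sees a patch about 36 mm wide per pixel, against roughly 4.5 mm for 1,280 pixels. Even with identical range precision, the lower-resolution sensor therefore samples edges and small features eight times more coarsely across the scene.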

Each pixel on Pmd Technologies’ time-of-flight sensor has circuitry to suppress background illumination, to make it more robust to sunlight. Credit: Pmd Technologies

Odos’ sensor has a relatively simple pixel design, which means the company can scale it to higher resolutions while performing most of the processing in the digital domain. The simple pixel design brings a second benefit: each pixel can capture either distance or intensity. In effect, the system can provide both data types, acting as a conventional camera during one frame before capturing 3D data during the next, says Logan.

Operating outdoors

One of the criticisms levelled at time-of-flight imaging is that it is overly affected by ambient light. In a presentation at the Image Sensors conference (IS2013) in March, Jim Lewis, CEO of Swiss TOF company Mesa Imaging, set out what he considered to be some of the myths surrounding what time-of-flight technology can achieve, one of which concerned its ability to operate outdoors. He said the technology needed improvement for outdoor applications, where direct sunlight, longer operating ranges, variable scenes, and bad weather all make acquiring accurate 3D data difficult.

The challenge for time-of-flight technology, according to Logan, is the requirement to illuminate the entire scene. ‘In essence, the system is capturing a 3D snapshot of a scene in a short space of time with a single pulse of light, which is difficult,’ he says. ‘More light out effectively means a better reflection from the object, which in turn gives a more precise distance measurement.’

One way to increase the amount of light into the scene is to narrow the field of view, says Logan. ‘Illuminating over a wide field of view of, say, 60° and trying to do that over 20 metres would either require very high levels of illumination or suffer from reduced precision. Narrowing the field of view to 25° allows you to measure over distances approaching 20 metres; it gives you a much better chance of getting light back to the sensor.’
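The effect of narrowing the field of view can be estimated with simple geometry: the emitted power is spread over an area that grows with the square of the distance and with the square of the tangent of the half-angle. The sketch below assumes the light is spread uniformly over a square field and ignores atmospheric losses, target reflectivity and the receiving optics; the 1 W source power is an arbitrary placeholder.

```python
import math

def target_irradiance(power_w, distance_m, fov_deg):
    """Irradiance (W/m^2) on a surface distance_m away when power_w is spread
    uniformly over a square field of view fov_deg wide on each side."""
    side = 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)
    return power_w / side ** 2

# Same source, same 20 m distance, two fields of view.
wide = target_irradiance(1.0, 20.0, 60.0)
narrow = target_irradiance(1.0, 20.0, 25.0)
print(f"gain from narrowing 60 -> 25 degrees: {narrow / wide:.1f}x")
```

For the 60° and 25° fields Logan mentions, the irradiance on the target at any given distance rises by a factor of roughly 6.8; equivalently, the same irradiance is reached about 2.6 times further away.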

Odos Imaging currently uses laser diodes to illuminate the scene rather than LEDs, although Logan says there have been encouraging developments in LED technology which can be applied to time-of-flight systems. The short laser pulses mean Odos’ camera shutter is only open for a brief period and therefore the system is less affected by ambient light. Logan states that the system can operate even in bright sunlight.
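The article does not detail how Odos’ pulsed system turns its short exposures into distance. One common textbook approach, shown here as an assumption rather than as Odos’ method, uses two back-to-back gates the same length as the laser pulse: the later the echo arrives, the more of it falls into the second gate. A third exposure with the laser off measures ambient light so that it can be subtracted. The photoelectron rates and the 50 ns pulse length are hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s

def simulate_gates(distance_m, pulse_s, signal_rate, ambient_rate):
    """Idealised charge in two back-to-back gates of length pulse_s, plus a
    laser-off background gate. Rates are hypothetical photoelectrons/second."""
    delay = 2.0 * distance_m / C
    if not 0.0 <= delay <= pulse_s:
        raise ValueError("target lies outside the gated range")
    ambient = ambient_rate * pulse_s                # same in every gate of this length
    s1 = signal_rate * (pulse_s - delay) + ambient  # early part of the echo
    s2 = signal_rate * delay + ambient              # late part of the echo
    background = ambient                            # laser-off gate
    return s1, s2, background

def gated_distance(s1, s2, background, pulse_s):
    """Distance from the share of echo charge that landed in the second gate."""
    early = s1 - background
    late = s2 - background
    return (C * pulse_s / 2.0) * late / (early + late)

pulse = 50e-9  # 50 ns pulse: maximum range c * pulse / 2, about 7.5 m
for ambient_rate in (0.0, 5e9):
    s1, s2, bg = simulate_gates(4.2, pulse, signal_rate=2e9, ambient_rate=ambient_rate)
    print(f"ambient={ambient_rate:.0e}  distance={gated_distance(s1, s2, bg, pulse):.3f} m")
```

Because the ambient charge scales with gate length (`ambient_rate * pulse_s`), gates tens of nanoseconds long collect far less sunlight than a conventional exposure. Subtraction removes the average ambient contribution but not its photon shot noise, which is why keeping the shutter open only briefly still matters.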

The accuracy of the system also depends on how well the object reflects the emitted light, and some objects reflect less well than others: human hair, for instance, is very specular and reflects little light back to the sensor.

Pmd’s sensor technology incorporates what it calls suppression of background illumination (SBI) circuitry to make it more robust to sunlight. Its TOF camera technology works by emitting infrared light from an LED or laser, intensity-modulated at frequencies of around 30MHz or more. The pixels receive both the intensity-modulated IR light and ambient light, the latter having a constant intensity. SBI is in-pixel circuitry that monitors the number of photoelectrons generated by ambient light during the exposure and, if they exceed a certain threshold, removes them. ‘The ambient light suppression is not a feature of post-processing, but occurs within the pixel during the measurement,’ says Penne at Pmd. ‘Standard pixels collect photoelectrons and output an RGB value, but our pixels constantly monitor this process.’ SBI ensures that only the photoelectrons from the intensity-modulated light are detected by the pixels.
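With intensity-modulated light, distance is encoded as the phase shift of the returning modulation. A common continuous-wave scheme samples the correlation between emitted and received signals at four phase offsets (0°, 90°, 180° and 270°) and recovers the phase with an arctangent. The sketch below is an idealised, noise-free illustration of that generic scheme, not Pmd’s documented processing. SBI acts in the pixel hardware during the exposure, which this arithmetic does not model; the sketch only shows why a constant ambient offset drops out of the phase calculation. The amplitude and ambient values are arbitrary.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def four_phase_samples(distance_m, mod_freq_hz, amplitude, ambient):
    """Idealised correlation samples at 0, 90, 180 and 270 degrees.
    'ambient' is a constant offset that is identical in every sample."""
    phi = 4.0 * math.pi * mod_freq_hz * distance_m / C  # round-trip phase shift
    return [ambient + amplitude * math.cos(phi + k * math.pi / 2) for k in range(4)]

def cw_distance(samples, mod_freq_hz):
    """Distance from four phase samples; wraps beyond the unambiguous range."""
    a0, a1, a2, a3 = samples
    # The constant ambient offset cancels in both differences.
    phi = math.atan2(a3 - a1, a0 - a2) % (2.0 * math.pi)
    return C * phi / (4.0 * math.pi * mod_freq_hz)

def unambiguous_range(mod_freq_hz):
    """Distance at which the measured phase wraps back to zero."""
    return C / (2.0 * mod_freq_hz)

for ambient in (0.0, 1000.0):
    samples = four_phase_samples(2.0, 30e6, amplitude=10.0, ambient=ambient)
    print(f"ambient={ambient:g}  distance={cw_distance(samples, 30e6):.4f} m")
print(f"unambiguous range at 30 MHz: {unambiguous_range(30e6):.3f} m")
```

With 30MHz modulation the phase wraps every c / (2f), about 5 m, so a target at 7 m would be reported at roughly 2 m. Higher modulation frequencies improve depth precision for a given phase noise but shorten this unambiguous range, which is one reason a system may combine several frequencies.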

Penne says that the SBI feature of Pmd’s TOF sensors means the chips can genuinely address the automotive sector, where robustness to sunlight is hugely important. Pmd is jointly developing and marketing its chip products with Infineon Technologies. Penne also states that the company’s depth-sensing reference designs are small enough for integration into consumer devices.

‘In the consumer space, there are a lot of external devices like Microsoft’s Kinect or Leap Motion, which are standalone products, but Pmd-based depth sensors are small enough to be integrated into electronic equipment,’ Penne says, adding that laptops or tablets could have built-in touchless gesture control in the future. Pmd’s smallest reference module, the CamBoard Pico S, has a height of 6.6mm, which, Penne says, ‘is thin enough to be integrated into laptops’.

Penne comments that Pmd’s chips will be ready for mass-volume production by Infineon in 2014. The sensors for the consumer market will have resolutions of 160 x 120 pixels or 352 x 288 pixels.

In contrast to other gesture control technologies, such as the structured light used by Microsoft’s Kinect, TOF measures depth information directly, with no additional post-processing required. This means the technique has very low latency. ‘The chip data is accurate enough for a user to control a mouse pointer on an HD screen with gestures,’ comments Penne.

Instant depth measurements

The advantage of time-of-flight technology is its ease of use. ‘Time-of-flight produces a full 3D image in one snapshot,’ comments Mats Wedell, sales director at Swedish company Fotonic. Fotonic manufactures TOF cameras based on TOF technology from Panasonic Eco Solutions. ‘The image is taken within approximately 2 to 6ms and contains 20,000 measurement points,’ Wedell adds.

Pmd Technologies’ CamBoard time-of-flight sensor is small enough to be integrated into laptops, the company claims, for built-in gesture control. Credit: Pmd Technologies

Fotonic’s TOF imager has resolutions of 160 x 120 pixels or 320 x 120 pixels and is equipped with Panasonic’s background subtraction technology, enabling it to operate in bright sunlight of up to 100k lux. Spanish company Robotnik has installed Fotonic’s TOF cameras on some of its mobile robots for collision avoidance and navigation.

‘Time-of-flight is effectively a plug-and-play installation, and requires no post-processing,’ comments Logan, adding that the system simply outputs a 3D depth map. This is in contrast to stereo imaging, which is extremely processing-intensive, and laser triangulation, which requires careful calibration. ‘As long as sufficient light is reflected back, a time-of-flight system provides a valid depth map,’ he says.
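A depth map becomes a set of 3D coordinates through the camera’s intrinsic parameters. The sketch below assumes an ideal pinhole camera without lens distortion and a sensor that reports radial distance along each pixel’s line of sight; some cameras report z-depth instead, which would skip the normalisation step. The focal lengths and principal point are toy values chosen for illustration, not those of any camera in this article.

```python
import math

def depth_map_to_points(depth, fx, fy, cx, cy):
    """Convert a radial-distance map (metres, list of rows) into 3D points
    (x, y, z) in the camera frame using an ideal pinhole model."""
    points = []
    for v, row in enumerate(depth):
        for u, r in enumerate(row):
            if r <= 0.0:  # treat non-positive values as pixels with no valid return
                continue
            # Direction of the pixel's line of sight, then scale to length r.
            dx = (u - cx) / fx
            dy = (v - cy) / fy
            norm = math.sqrt(dx * dx + dy * dy + 1.0)
            points.append((r * dx / norm, r * dy / norm, r / norm))
    return points

depth = [
    [2.0, 2.0, 2.0],
    [2.0, 2.0, 2.0],
    [2.0, 2.0, 0.0],  # 0.0 marks a pixel with no valid return
]
for p in depth_map_to_points(depth, fx=1.0, fy=1.0, cx=1.0, cy=1.0):
    print(p)
```

Every valid point lies 2 m from the camera centre, and the centre pixel maps straight down the optical axis to (0, 0, 2).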

Logan feels time-of-flight imaging has yet to mature fully as a technology. However, he says there is significant integration activity within the industrial automation sector.

The technique might not have the accuracy of other 3D imaging technologies like laser triangulation or pattern projection – LMI’s recently launched Gocator 3 series, which operates via pattern projection, provides accuracy in the micrometre range and is ideal for production line inspection – but it is a fast and easy way to capture 3D data.

‘The industrial market, and particularly the machine vision sector, is a natural home for time-of-flight technology, because the market is driven by increasing productivity, which is often achieved through greater automation,’ comments Logan. He adds that the availability of open libraries for Microsoft’s Kinect sensor has helped activity around the technology to proliferate, by providing a low-cost vehicle for experimenting with new applications and uses. ‘This is a great enabler for the industrial 3D imaging sector,’ he says. TOF cameras from both Odos and Mesa are supported by Spanish company Aqsense’s 3D imaging software.

The cost of the cameras will also need to come down for increased uptake of the technology, especially in industry. ‘I believe that, at higher volumes, time-of-flight cameras will cost less than €1,000 within a couple of years,’ says Wedell at Fotonic.

With interest from the automotive and consumer sectors, time-of-flight imaging technology looks set to develop a great deal in the coming years. In the industrial sector, it is already finding its place. Logan comments: ‘We are continuously surprised at the breadth and depth of variety of uses across all sectors for the technology. Time-of-flight imaging represents a huge opportunity for a big step forward in automated vision applications.’

A team from Heriot-Watt University has developed a time-of-flight system that can capture high-resolution 3D images, precise to the millimetre, from up to a kilometre away. The system can also detect laser pulses from ‘uncooperative’ objects that do not easily reflect the laser light, such as fabric.

The primary use of the system is likely to be scanning static, human-made objects, such as vehicles. With some modifications to the image-processing software, it could also determine their speed and direction.

The system uses photon-counting detectors to measure the returning light. Aongus McCarthy, a research fellow at Heriot-Watt University, says: ‘Our approach gives a low-power route to the depth imaging of ordinary, small targets at very long range.

‘While it is possible that other depth-ranging techniques will match or out-perform some characteristics of these measurements, this single-photon counting approach gives a unique trade-off between depth resolution, range, data-acquisition time and laser-power levels.’
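Photon-counting ranging of this kind is commonly done by time-correlated single-photon counting: many laser pulses are fired, the arrival time of each detected photon is recorded, and the peak of the resulting timing histogram gives the round-trip time. The simulation below is a generic illustration of that idea, not the Heriot-Watt team’s processing; the photon counts, 30 ps timing jitter, 10 ps bin width and 910 m target distance are all hypothetical.

```python
import random
from collections import Counter

C = 299_792_458.0  # speed of light, m/s

def simulate_arrivals(distance_m, n_signal, n_background, window_s, jitter_s, seed=1):
    """Photon arrival times (s) after each pulse, accumulated over many pulses:
    signal photons cluster around the round-trip time, background photons
    (ambient light, dark counts) are spread uniformly over the window."""
    rng = random.Random(seed)
    t0 = 2.0 * distance_m / C
    signal = [rng.gauss(t0, jitter_s) for _ in range(n_signal)]
    background = [rng.uniform(0.0, window_s) for _ in range(n_background)]
    return signal + background

def estimate_distance(arrivals, bin_s, half_width=5):
    """Histogram the arrival times, find the peak bin, then refine with the
    centroid of the bins around it."""
    counts = Counter(int(t // bin_s) for t in arrivals if t >= 0.0)
    peak_bin, _ = counts.most_common(1)[0]
    bins = range(peak_bin - half_width, peak_bin + half_width + 1)
    total = sum(counts[b] for b in bins)  # missing bins count as zero
    centroid = sum((b + 0.5) * counts[b] for b in bins) / total
    return C * centroid * bin_s / 2.0

arrivals = simulate_arrivals(910.0, n_signal=200, n_background=2000,
                             window_s=7e-6, jitter_s=30e-12)
print(f"estimated distance: {estimate_distance(arrivals, bin_s=10e-12):.3f} m")
```

Although each detected photon carries little information on its own, accumulating a few hundred of them sharpens the histogram peak enough to locate the target to within millimetres, while the scattered background photons barely register in any single bin.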

The work was led by Gerald Buller, and the findings were published in the Optical Society of America’s (OSA) open-access journal Optics Express.