Having an X-ray taken is one of the more standard medical examinations. Analogue X-ray films are generally what people think of, although there is a shift towards digital X-ray imaging, which provides higher quality images at lower X-ray doses. Hakan Sakman, CTO of Swiss vision company CMOSVision, explains that the higher image quality afforded by digital X-ray cameras gives doctors a better understanding of the changes in the bone structure of the patient. CMOSVision manufactures the CMV-XRE camera, a low-noise, 16-bit digital X-ray camera.

Measuring changes in bone density over time for diseases like osteoporosis benefits from higher resolution images, as does soft tissue radiography. ‘Soft tissue radiography is a very delicate process requiring low-noise images,’ says Sakman. ‘In addition, using less sensitive cameras means the X-ray dose needs to be higher, which is harmful for the patient. The CMV-XRE camera is sensitive to low dose X-rays and captures low-noise, accurate images.’

As well as higher quality images, digital images can be processed on a PC, as Sakman points out: ‘Instead of doctors making decisions simply by looking at the films, now quantitative data can be produced through running different algorithms on the images to see how a patient’s bone thickness or condition has changed over time.’

Radiotherapy makes use of the ionising property of X-ray radiation in order to kill cancer cells. Modern radiotherapy systems have a diaphragm that creates a very specific shape by adjusting a 2D array of pins. These pins are moved in and out to create the profile shape of the tumour, so that only that region is irradiated. Visible cameras within the machine are used to monitor the position of the pins and ensure the X-ray beam is shaped correctly.

Historically, radiotherapy systems have used a charge injection device (CID), often called a Cidtec, as the image sensor, because it is less susceptible to radiation. However, according to Mark Williamson, sales and marketing director at Stemmer Imaging, these imagers are limited in resolution (standard TV resolution), are an old technology, and are expensive, because they are really only used in these applications.

Networked systems

CMOS technology using five or six transistor (5T or 6T) CMOS pixel design can provide radiation-hardened cameras, comparable to CID technology in terms of robustness to radiation. ‘Standard CCD or CMOS cameras [with three or four transistors per pixel] do not survive in this environment,’ says Williamson.

‘There is now a trend in these applications that imaging can start to move to higher Megapixel resolutions using 5T devices as opposed to Cidtecs, which is low resolution,’ Williamson continues, adding that 5T cameras are also less expensive than CID technology – Cidtecs cost around £5,000-£6,000 whereas a 1.5 Megapixel 5T camera is around £1,000-£2,000. There is also the advantage of a digital interface, as opposed to the analogue signal Cidtecs use.

‘Tests have been carried out in radiotherapy applications indicating that 5T CMOS sensors are more immune to radiation than standard [CMOS/CCD] sensors,’ Williamson says. Dalsa’s CMOS technology, for instance, including its Genie HM range and Falcon range, is 5T.

One of the advantages with moving from analogue to digital in areas like X-ray imaging is that the images can be stored easily on PCs. George Chamberlain, president of Pleora Technology, comments that using Ethernet as a connectivity solution allows a network video architecture to be set up, which has recently been enabled in a standards-compliant way with the latest version of the GigE Vision standard (1.2).

In the medical industry, many of the applications using Pleora’s networked video connectivity solutions involve the digitisation of X-ray imaging systems. Real-time imaging networks can be implemented using Ethernet, which improves the efficiency of procedures like image-assisted surgery. ‘These networks simultaneously multicast patient scans in real-time to displays in operating rooms and observation areas, to processing PCs, and to storage facilities,’ says Chamberlain.

Pleora’s vDisplay IP engine allows video to be displayed directly on monitors, without using a PC to capture it first. This provides ultra-low latency images and, because the vDisplay can be built into the system, it means images can be shown at higher resolutions than with a standard DVI or HDMI monitor, which is important in a surgical procedure where subtle features must be differentiated.

According to Chamberlain, the medical industry requires high reliability of image transfer for two reasons: the first is legislative. ‘Many jurisdictions legislate that when a patient is exposed to an X-ray dose that each dose has to be used for imaging. You’re not allowed to discard an image if it wasn’t captured correctly,’ he says.

The second reason Chamberlain identifies is for applications like image-assisted surgery, in which the surgeon is relying on the imaging system during the operation – using X-rays to aid in the positioning of an implant, for example. ‘If the imaging system fails, the surgeon might have to revert to older techniques where imaging is performed in non-real-time in another part of the hospital,’ he says. Therefore, in these instances, reliability is paramount.

3D diagnostics

Moving away from X-ray imaging to 3D imaging as a diagnostic tool, researchers at a major medical centre have commissioned 3D scanner company threeRivers 3D to develop a custom 3D hand scanner for monitoring rheumatoid arthritis. The scanner provides a repeatable, fast and accurate way to measure the volume of the joints, and hence how inflamed they are, so that doctors can determine the efficacy of anti-inflammatory medication. The centre is currently conducting a study using thousands of individual scan sets.

3D scanners from threeRivers 3D are being used to measure the volume and hence how inflamed the hand joints are in rheumatoid arthritis patients.

Rheumatoid arthritis is typically monitored by a doctor physically examining the patient’s joints to determine the level of swelling. ‘The method is very subjective and not an ideal way to determine if inflammation is getting better or worse,’ comments Mike Formica, president of threeRivers 3D.

The device scans the patient’s hand in 3D and measures the volume of each joint. Scans can then be compared over time to monitor any changes. ‘Swelling is one diagnostic criteria of the disease; the other is heat,’ Formica says. The system also includes a thermal camera to capture a heat signature of the joint, so both volume and heat changes can be monitored over time.

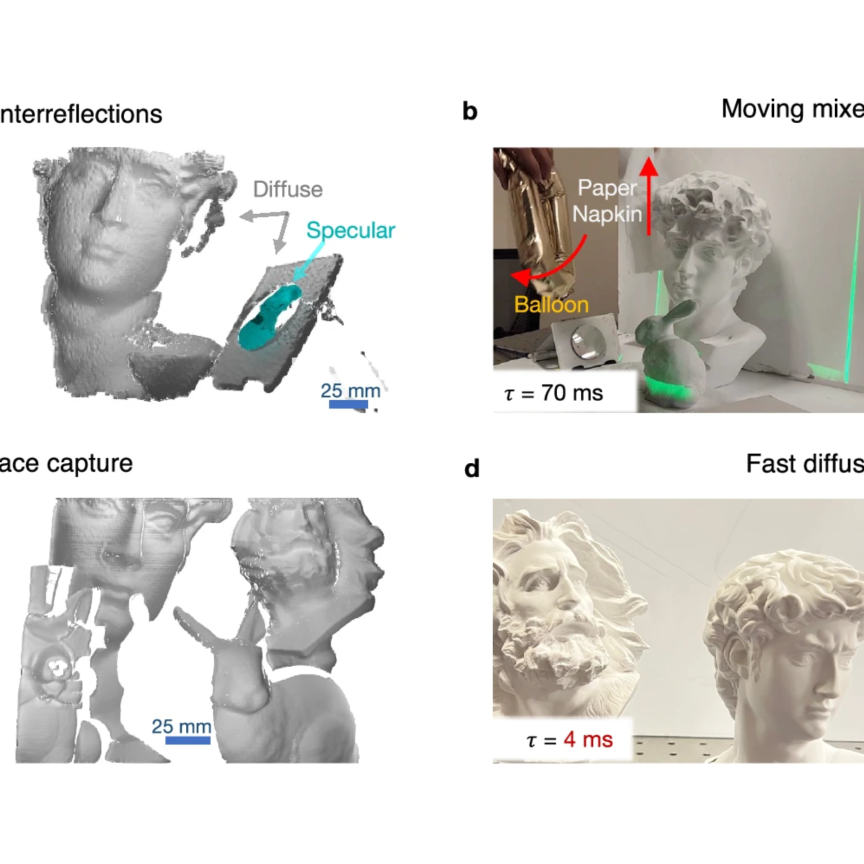

The system has to scan the patient’s hand quickly to reduce the potential for movement artefacts in the image. In addition, image processing has to be fast and as automated as possible to handle thousands of data sets. The system incorporates two 3D scanners, one mounted on the left and the other on the right, both scanning simultaneously to provide complete coverage. A complete scan takes around 10 seconds to acquire.

Formica explains that the system, in principle, would operate with the doctor establishing a baseline during the initial consultation and identifying where they want to measure by drawing a region of interest box around the different joints. For every successive consultation, these areas are automatically referenced within the software to measure the volume consistently.
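The baseline-and-follow-up comparison described above can be illustrated with a minimal Python sketch. It is not threeRivers 3D's software: it assumes, purely for illustration, that each scan has been reduced to a regular grid of surface heights in millimetres, and the names `Roi`, `roi_volume` and `percent_change` are hypothetical. The same region of interest is reused for every visit so that the volumes are comparable.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Roi:
    """Hypothetical region-of-interest box in grid cells (half-open ranges)."""
    row0: int
    row1: int
    col0: int
    col1: int


def roi_volume(height_map, roi, cell_area_mm2):
    """Approximate the volume (mm^3) under the surface inside the ROI.

    height_map is a list of rows of heights in mm; each grid cell is
    assumed to cover cell_area_mm2 square millimetres.
    """
    total_height = 0.0
    for row in height_map[roi.row0:roi.row1]:
        total_height += sum(row[roi.col0:roi.col1])
    return total_height * cell_area_mm2


def percent_change(baseline, follow_up):
    """Relative change of a follow-up measurement against the baseline."""
    return 100.0 * (follow_up - baseline) / baseline


# Toy data (made up): a flat 4x4 patch, then the same patch with the
# central 2x2 cells raised by 1 mm to mimic swelling.
baseline_scan = [[10.0] * 4 for _ in range(4)]
follow_up_scan = [[10.0] * 4 for _ in range(4)]
for r in (1, 2):
    for c in (1, 2):
        follow_up_scan[r][c] = 11.0

# The box drawn at the first consultation is stored and reused.
joint_roi = Roi(row0=1, row1=3, col0=1, col1=3)

v0 = roi_volume(baseline_scan, joint_roi, cell_area_mm2=0.25)
v1 = roi_volume(follow_up_scan, joint_roi, cell_area_mm2=0.25)
change = percent_change(v0, v1)
print(f"baseline {v0:.2f} mm^3, follow-up {v1:.2f} mm^3, change {change:.1f}%")
```

Storing the region once and applying it unchanged to later scans is what turns the comparison into the 'statistical problem' Formica describes; a real system would also need to register each new scan to the baseline before the box is applied.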

‘Automation was a big part of the system; 3D data can be extremely labour-intensive to manage and these are doctors, not 3D imaging specialists,’ says Formica. ‘From a skill-set perspective, it can be operated by a nurse or doctor and after the measurement area has been designated it simply becomes a statistical problem.’

ThreeRivers 3D builds its standard 3D scanners around Prosilica GC1290C cameras from Allied Vision Technologies (AVT). One of the advantages of the AVT camera Formica identifies for this application is that it has a high dynamic range. A laser line is swept over the hand and for safety reasons the power has to be as low as possible. With the high dynamic range, the camera can pick up very low levels of laser light regardless of variations in skin tones. The camera is sensitive enough to detect the laser passing over areas of the hand that are darker or lighter due to skin discolorations without increasing laser power. In addition, the camera is Gigabit Ethernet based. Each scanner has four cameras, which Formica says would be extremely challenging to implement over USB while maintaining high reliability.

With a standard 3D scanner, the object is scanned and then either the object or the scanner is moved to capture another image. ‘We really couldn’t afford to do that from a time standpoint,’ Formica says. ‘Scanners pick up micron-level detail, so it doesn’t take much movement to cause an artefact in the image. This would impact on the clinical accuracy of the system. Speed became a very important characteristic, which is why we used multiple scanners integrated with each other. By changing the way the algorithms generate the data, the machine can act as one large scanner as opposed to multiple individual scanners.’

Two 3D scanners are incorporated in the system from threeRivers 3D, which scan simultaneously to provide complete coverage of the hand in 10 seconds.

According to Formica, both multi-scanner integration and simultaneous scanning are very rare. ‘I don’t believe I’ve ever seen multiple 3D laser scanners capture an object from multiple views at the same time, because typically those laser lines would interfere with each other. Because we were able to synchronise the two scanners’ behaviour, we were able to scan simultaneously, which lowered the time it takes to capture the data.’

The trial testing the accuracy of the scanners is still ongoing. Formica states: ‘Even if the system works perfectly, it may or may not have medical relevance. The goal is to determine system efficacy as well as whether it is a good way to monitor the progression of the disease.’

Gamma-ray imaging

Scientists at the University of Arizona have developed high resolution CCD-based gamma ray detectors, which they’re using to study neurological pathologies, including Alzheimer’s and Parkinson’s disease, in rodent brains. The BazookaSPECT detector technology uses CCD Dragonfly Express cameras from Point Grey and has an imaging resolution of less than 100μm, an order of magnitude higher than conventional scintillation-based gamma ray cameras.

The detector technology holds much promise for small-animal SPECT (single photon emission computed tomography). It allows non-invasive methods of visualising the effect different drugs will have on a disease, for instance, through using radioactive markers. The higher resolution detectors can amplify low gamma ray levels and provide greater detail in the images, important for small-animal studies.

Researchers at Eindhoven University of Technology (TU/e) are using image processing tools based on the human visual system to quantify the alignment of heart tissue fibres in microscope images. The aim is to determine the suitability of tissue-engineered heart valves for transplant into children with heart defects. Currently, artificial heart valves are used, although research into using tissue-engineered heart valves as a superior alternative is ongoing.

Human visual system-based algorithms developed by Dutch company Inviso were used to quantify the level of coherence of engineered heart fibres. Engineered tissue has to have the same mechanical properties as normal heart tissue, which can be determined by looking at the collagen fibres within the tissue. Microscope images are typically only qualitatively analysed.

Dr Frans Kanters, founder of Inviso, comments: ‘In our group at TU/e we took these images of heart fibres and, using human visual system-based algorithms, which are very good at identifying orientations in crossing structures, we tried to quantify the coherence in the fibres. The data was compared with qualitative inspections carried out by experts in the field. The quantitative analysis showed a similar trend in the amount of coherence in the fibres to that conducted qualitatively, only with a quantitative number.’

One of the major problems in image analysis of line detection is crossing structures. The classic computational approach to measuring transecting lines is to enhance one line and suppress the other. ‘This would not provide a quantitative answer on the alignment of collagen fibres, as some lines would be suppressed,’ explains Kanters.

Inviso’s algorithm looks at the orientations of lines within a certain area of the image and creates a three-dimensional space, in which the line orientation is extracted as the third dimension. ‘The algorithm can identify that crossing structures are split into separate planes because they have different orientations – the lines can be enhanced without losing information about crossing structures,’ Kanters says. The program reports the level of coherence in a given region, based on the number of lines facing in the same direction.
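As a rough illustration of what a coherence number for a region might look like, the following Python sketch scores a set of line orientations between 0 (no preferred direction) and 1 (all lines parallel). It is not Inviso's algorithm: it assumes the orientations have already been extracted, and it swaps in a standard axial statistic (the mean resultant length of doubled angles) as the measure. The function name `orientation_coherence` is hypothetical.

```python
import math


def orientation_coherence(angles_rad, weights=None):
    """Return (coherence, dominant_orientation) for a set of line angles.

    Line orientations are axial: an angle of 0 and an angle of pi describe
    the same line. Doubling each angle before averaging makes those two
    cases coincide. Coherence is 1.0 when all lines are parallel and
    close to 0.0 when orientations cancel out.
    """
    if weights is None:
        weights = [1.0] * len(angles_rad)
    total = sum(weights)
    if total <= 0:
        raise ValueError("need at least one orientation with positive weight")
    c = sum(w * math.cos(2.0 * a) for a, w in zip(angles_rad, weights)) / total
    s = sum(w * math.sin(2.0 * a) for a, w in zip(angles_rad, weights)) / total
    coherence = math.hypot(c, s)
    dominant = (0.5 * math.atan2(s, c)) % math.pi
    return coherence, dominant


# Made-up examples: well-aligned fibres versus two perpendicular families.
aligned, aligned_dir = orientation_coherence([0.30, 0.30, 0.30, 0.30])
crossing, _ = orientation_coherence([0.0, math.pi / 2, 0.0, math.pi / 2])
print(f"aligned: {aligned:.3f}, crossing: {crossing:.3f}")
```

Because both orientations in a crossing contribute to the score rather than one being suppressed, a region with two perpendicular fibre families scores near zero instead of being reported as well aligned.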