Relentlessly, robots are replacing humans as factories around the world step up their investment in automation. Yet, as delegates to the International Conference for Vision Guided Robotics (ICVGR) heard in November, one application – bin-picking – remains far more difficult for robots than for people.

International agreement to standardise the protocols used for 3D vision data will help (see page 10), as will very-high-bandwidth standards for wireless data transmission; both should be achieved within the next 12 months. However, although bin-picking technology has become more sophisticated in recent years, it is not yet widely deployed. ‘Currently there aren’t a lot of robotic bin-picking applications installed in industry,’ explained David Bruce, senior engineer at robot manufacturer Fanuc.

The difficulty is that each bin-picking application poses its own challenges. Recalling a demonstration at the ICVGR conference in Arizona, Heiko Eisele, president of MVTec Software, said that for bin-picking there is no ‘one solution to fit all’. He recounted: ‘One of the presenters was holding up a clothing hanger and it became tangled very easily – this is an example of an application that would represent a difficult task in terms of bin-picking.’

According to Bruce, the main challenge in integrating imaging technology into a bin-picking system is generating a high-quality 3D point cloud that can be analysed to locate parts. He pointed out: ‘It’s more of a challenge than a 2D image. You have to analyse a 3D scene, which is more intensive than analysing a 2D scene, to find what you’re looking for.’

MVTec produces machine vision software, and Eisele explained: ‘We provide the algorithms that allow you to recognise objects in 3D space, based on images that have been taken by either one standard machine vision camera, multiple cameras, or a 3D sensor. This technology would be integrated into a bin-picking application; the software interprets the data from the sensor and provides the robot with coordinates so it knows where the part is in space that needs to be picked.’
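The step Eisele describes, turning sensor data into coordinates for the robot, can be illustrated with a deliberately simple sketch. It is not MVTec’s method. It assumes the point cloud is already expressed in the robot’s coordinate frame with z pointing up, takes the highest point in the bin (on the assumption that it belongs to the least-obstructed part) and averages its neighbours to smooth out sensor noise. The function name `pick_point` and its `radius` parameter are hypothetical, and real systems generally also need the part’s orientation, which this sketch does not estimate.

```python
from math import dist  # Euclidean distance, Python 3.8+


def pick_point(cloud, radius=5.0):
    """Return (x, y, z) pick coordinates from a 3D point cloud.

    Hypothetical illustration only. Assumptions:
    - cloud is a list of (x, y, z) points in the robot's frame, z pointing up;
    - radius is a neighbourhood size in the same units as the cloud.
    """
    if not cloud:
        raise ValueError("empty point cloud")
    # Assume the highest point lies on the part least covered by others.
    top = max(cloud, key=lambda p: p[2])
    # Average nearby points to reduce the effect of sensor noise.
    patch = [p for p in cloud if dist(p, top) <= radius]
    n = len(patch)
    return tuple(sum(p[i] for p in patch) / n for i in range(3))
```

For example, `pick_point([(0, 0, 0), (1, 0, 10), (1, 1, 10), (50, 50, 2)])` returns `(1.0, 0.5, 10.0)`: the two points at height 10 are averaged and the lower points are ignored. A tangled or overlapping bin, as the hanger example shows, is exactly where such a naive rule breaks down.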

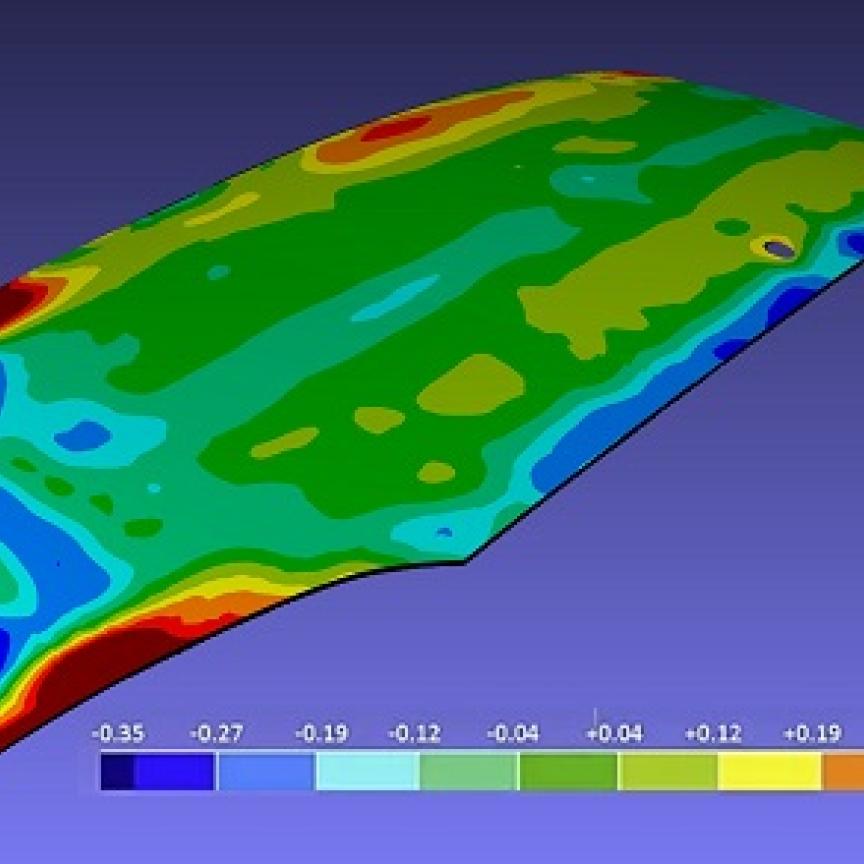

The variation in parts makes bin-picking particularly challenging; each application often has to be looked at individually to design a robotic system for the task. Credit: Fanuc

But for bin-picking to find a wider range of applications, it is not the software that is the challenge, Eisele believes. He continued: ‘I do not think the difficulty lies so much on the sensor or algorithm side. I believe that a lot is possible with the current sensor technology and software to extract the 3D positions of objects in space.’ For Eisele, the challenge is exemplified by that tangle of clothes hangers held up at the ICVGR conference: ‘I think the main challenge lies in the overall application, and that every application needs to be looked at one-at-a-time. If you take the hanger example, this is probably a task that would be very hard to achieve.’

The geometry of the part brings its own challenges. ‘There is a difference between bin-picking in the automotive industry, where you are dealing with fairly large components, as compared to an application using an assembly line,’ said Sam Lopez, director of sales and marketing for Matrox Imaging. Eisele agreed that simple shapes are easier for machine vision software to process: ‘If you have smooth surfaces, it is easier for the software because it has good data and coordinates to work with.’ If bin-picking is to be used in more applications, the technology needs to recognise parts of many different shapes. ‘Today, this technology works well and is very robust for simple geometries. But there needs to be more intelligence in the algorithms so that you can deal with more complex geometries,’ said Lopez. Adil Shafi, president of Advenovation, which specialises in robotic bin-picking solutions and vision guidance, agreed that recognising parts with more difficult shapes is an important area of focus for pushing bin-picking further into industry: ‘A lot of the initial successes have been with some easier bin-picking applications. Advenovation are focusing on tougher geometries now.’

Moreover, the environment in which bin-picking technology operates, and the materials involved, can cause their own problems. Oil used in the automotive industry, for example, can make parts highly reflective and disrupt the imaging equipment. ‘So, you need to have very robust algorithms that are capable of dealing with dirt, reflections, and obscurities. In our software algorithms, we’ve built in a certain amount of robustness that is immune to lighting and scale variations,’ Lopez continued. Ambient lighting in a factory can also affect the technology.

Picking randomly orientated parts requires robust 3D imaging to guide the robot gripper. Credit: Fanuc

‘At certain times of the day, the sun could be shining through a window at exactly the right angle that reflects off of all of the objects in the bin, which throws off the camera completely,’ he said. As software developers learn to overcome these difficulties, bin-picking will be employed in a wider range of industrial settings. ‘As we were developing more and more applications, there were challenges that we learned from. We included improvements in the next revision of the software that made it more robust than the previous release,’ said Lopez. ‘So, with every release, you’re building up on that experience and making improvements to the algorithms.’

Because no single solution fits every case, it is necessary to understand the challenges of each bin-picking application and to combine approaches to push the technology forward, explained Shafi. ‘One of the key points that I made [at the ICVGR conference] is to ensure that people understand that there are several levels of bin-picking: there are overlapping parts; parts that change shape; parts that have features; and changing features. There isn’t one magic solution that can solve everything. You have a collection of algorithms and techniques that need to come together.’

Standards for 3D vision

Standardising 3D data transmission will make bin-picking technology easier to apply in industry. Among the 3D cameras currently on the market, each manufacturer uses its own proprietary protocol to send 3D data. ‘This poses a technical barrier in adopting 3D cameras because a different code has to be written to handle each manufacturer,’ said John Phillips, marketing manager at Pleora Technologies. According to Phillips, a standard protocol for transmitting 3D data is in sight: ‘This is a problem that has been solved in the 2D world with GigE Vision, USB3 Vision and GenICam standards. 3D was a topic of discussion during the International Machine Vision Standards Meeting that occurred in Germany in October (see page 10), and the technical committees are now taking action to come up with data formats that can be used with GigE Vision, USB3 Vision and GenICam that will standardise the 3D data transmission for all manufacturers.’

Standardisation brings flexibility and interoperability. ‘If you are involved in the system integration of a robotic arm for a bin-picking application, you will be able to choose the 3D camera from a range of manufacturers, without worrying about whether it’s going to be compatible with the software package you have chosen,’ said Phillips.

Demand for highly flexible bin-picking systems is growing as mass production becomes ever more efficient. ‘What we have had our customers demanding of us is to create systems that are more easily adaptable and changeable on a given production line,’ stated Lopez. Eisele agreed that manufacturers want more adaptability: ‘I think overall there is a desire from the user side to have a more generic bin-picking solution that works for every part.’ Future production lines will be able to switch products without re-tooling, and bin-picking technology will need to keep pace. ‘The robot and the pickers – “the flexible robotic cell” – will become easily adaptable and will be capable of handling many different products on the same production line,’ said Lopez.

To make this possible, robotics, imaging and software companies are developing products that can be adapted more easily to different purposes. ‘In terms of the vision system, Matrox are leveraging what we know about CPUs, FPGA technology, and about miniaturisation and integration of these technologies into compact, light and intelligent camera systems that are capable of being installed on the robot arm itself,’ said Lopez. Such a device would serve as a multi-purpose system, combining a camera, a processing engine and a scanning device in one unit.

‘It will make the system lighter and smaller, so you are widening the range of applications that the same device can be used in,’ explained Lopez. ‘The same system could be used for picking in a micro-electronic business that uses small electronic components, and also in an automotive factory that uses large engine parts.’ This will also let companies develop bin-picking technology for new or different applications more quickly. ‘It means that there is less development time because an application that was developed for a certain type of hardware can be taken and used in a different application, without having to be re-developed. So, you are shrinking the time to market,’ Lopez remarked.

A further advance is wireless data transmission. ‘Coming this year is 802.11ac [5G Wi-Fi], which allows for a very high bandwidth for wireless applications. You will start to see this being used in commercial equipment this year,’ said Phillips.

According to Phillips, adopting wireless technology in bin-picking is an exciting prospect: ‘In a bin-picking application, if the camera has no data transmission cable, the robot will be more flexible, lighter, and clearer in its movements. You will certainly see a lot more adoption of high-speed wireless applications in 2014.’