The most common form of aircraft maintenance, repair and overhaul is inspection, typically carried out by an inspector performing visual checks. Sometimes, though, inspectors do miss things. In 2015 an inadequate general visual inspection caused one of the left engine fan cowl doors of an Airbus A320 operated by Tiger Air to drop towards the runway during takeoff. Debris lodged in the left-side landing gear door, damaging a proximity sensor. As the aircraft returned to the airport, the damaged sensor produced a false indication that the landing gear was unlocked, resulting in a mayday call.

Thankfully no one was hurt, but the incident illustrates the importance of failsafe inspection procedures. The checks are normally lengthy and draw on many different sources of information: aircraft logbooks, checklists, aircraft maintenance manuals and airworthiness directives.

An aircraft with possible damage can take between 8 and 12 hours to be cleared for takeoff, if it is cleared at all. From the perspective of an airline, every minute an aircraft spends on the ground costs money.

Using a combination of drone, vision and machine learning technology, several firms are working to digitise and automate general visual aircraft inspections, with the aim of reducing their duration to less than one hour. The automated inspections are intended to be safer, more accurate and more cost-efficient, saving airlines time, money and resources. They are also expected to improve the overall quality of inspection reports, as well as damage localisation, traceability and repeatability.

With one click, a drone could be sent on a predetermined flight path around an aircraft, navigating using lidar and capturing images with a camera and zoom lens. The images are then processed by a machine learning algorithm that pinpoints the location of any anomalies or damage on the aircraft. Engineers can use this information to make fast, informed decisions about the maintenance or repair required.
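
The article does not describe how the flight path is planned. As a minimal sketch, assuming the simplest possible closed path, an ellipse around the aircraft at a fixed standoff distance and altitude, a predetermined route could be generated as below, with the camera yawed towards the aircraft at each stop. The `Waypoint` type, the `orbit_waypoints` function and all parameter values are hypothetical, not Mainblades' planner.

```python
import math
from dataclasses import dataclass


@dataclass
class Waypoint:
    x: float    # metres along the fuselage axis, origin at the aircraft centre
    y: float    # metres across the aircraft
    z: float    # flight altitude in metres
    yaw: float  # heading in radians, pointing the camera at the aircraft centre


def orbit_waypoints(length_m, width_m, standoff_m, altitude_m, count):
    """Hypothetical planner: `count` waypoints on an ellipse around the aircraft.

    The ellipse's semi-axes are the aircraft's half-length and half-width plus
    a fixed standoff distance; points are evenly spaced in the ellipse parameter.
    """
    if count < 3:
        raise ValueError("need at least three waypoints for a closed orbit")
    a = length_m / 2 + standoff_m
    b = width_m / 2 + standoff_m
    waypoints = []
    for i in range(count):
        t = 2 * math.pi * i / count
        x, y = a * math.cos(t), b * math.sin(t)
        # Heading of the vector from the drone back to the aircraft centre
        waypoints.append(Waypoint(x, y, altitude_m, math.atan2(-y, -x)))
    return waypoints


if __name__ == "__main__":
    # Illustrative dimensions only: 40m long, 36m wide, 3m standoff, 4m altitude
    for wp in orbit_waypoints(40.0, 36.0, 3.0, 4.0, 8):
        print(f"x={wp.x:7.2f} y={wp.y:7.2f} z={wp.z:.1f} yaw={math.degrees(wp.yaw):7.1f}")
```

A practical planner would also have to account for the wings, tail, ground equipment and camera coverage; the ellipse only shows how a repeatable, one-click route can be expressed as data.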

One of the companies developing drone inspection tools is Mainblades. Julian von der Goltz, a computer vision software engineer at the firm, said: ‘Image gathering and damage detection technology have progressed so far that, when applied correctly, they can outperform the old ways of manual inspection. The robotics solutions are not only immune to the fatigue experienced by humans, but are also able to perform tasks with sensitivities not possessed by the human eye.’

At the end of last year the firm conducted an aircraft drone inspection in an outdoor airport environment for the first time. This capability would allow drone inspections to be performed outdoors at the gate as soon as an aircraft lands, rather than only after it has been towed into a hangar. ‘We are convinced with this milestone being achieved, that the industry is getting closer to a point where drones inspect and evaluate an aircraft in as little as 60 minutes, right at the apron,’ said von der Goltz.

Powered by machine learning

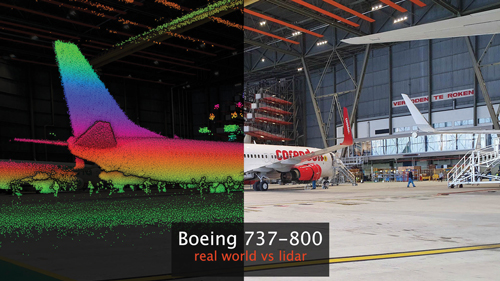

Mainblades’ drones navigate using an Ouster OS0-32 lidar module with a range of 55m, capable of capturing 655,360 points per second with a precision of ±1.5 to 5cm. With it, the drone can navigate around an aircraft and its surrounding environment, recording factors such as altitude, speed, weather and distance to nearby 3D structures. A simultaneous localisation and mapping (SLAM) algorithm processes the accumulated data to localise the drone in the hangar environment, enabling it to fly around the aircraft safely.
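
SLAM pipelines differ, and the article does not say which one Mainblades uses. One common building block of lidar localisation is scan matching: estimating the rigid transform that maps points in the drone's current scan onto the same features in a map, which gives the drone's pose. The sketch below is a simplified 2D illustration that assumes point correspondences are already known; the function name `align_2d` and the example points are hypothetical, and a full iterative closest point (ICP) loop would re-estimate correspondences and repeat this step in 3D.

```python
import math


def align_2d(src, dst):
    """Closed-form least-squares rigid transform mapping src points onto dst.

    src[i] and dst[i] must be the same physical feature. Returns
    (theta, tx, ty) such that dst ~= R(theta) * src + (tx, ty).
    """
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two corresponding point pairs")
    n = len(src)
    # Centroids of both point sets
    scx = sum(p[0] for p in src) / n
    scy = sum(p[1] for p in src) / n
    dcx = sum(q[0] for q in dst) / n
    dcy = sum(q[1] for q in dst) / n
    # Accumulate dot and cross products of the centred points
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - scx, py - scy
        bx, by = qx - dcx, qy - dcy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)  # rotation that best aligns the centred sets
    c, s = math.cos(theta), math.sin(theta)
    # Translation carries the rotated src centroid onto the dst centroid
    tx = dcx - (c * scx - s * scy)
    ty = dcy - (s * scx + c * scy)
    return theta, tx, ty


if __name__ == "__main__":
    # Hypothetical features seen in the drone's own frame (metres)
    scan = [(2.0, 0.0), (4.0, 1.0), (3.0, 3.0), (0.5, 2.0)]
    # The same features in the hangar map, generated from a known pose
    true_theta, true_tx, true_ty = 0.3, 1.5, -0.5
    c, s = math.cos(true_theta), math.sin(true_theta)
    mapped = [(c * x - s * y + true_tx, s * x + c * y + true_ty) for x, y in scan]
    print(align_2d(scan, mapped))  # recovers approximately (0.3, 1.5, -0.5)
```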

A digital twin of the fuselage is created during a drone inspection to help identify damage or defects. Credit: Mainblades

To capture images of the aircraft fuselage, the drones are equipped with a Zenmuse X5S HD camera. The camera uses a Micro Four Thirds sensor with 12.8 stops of dynamic range, a good signal-to-noise ratio and good colour sensitivity, and can record 5.2K video at 30fps and 4K video at 60fps or 30fps.

‘With the help of HD cameras and optical zoom, we are able to identify damage that is potentially very small – down to 2mm in size,’ said von der Goltz.
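
Whether a 2mm defect can be resolved depends on how much of the surface each pixel covers, known as the ground sample distance (GSD). The rough sketch below uses the pinhole camera model. The sensor width and image width are approximately those of a Micro Four Thirds sensor at the X5S's 5280-pixel still resolution; the 45mm focal length and 3m camera-to-fuselage distance are purely hypothetical values chosen for illustration, not Mainblades' settings.

```python
def ground_sample_distance_mm(sensor_width_mm, image_width_px, focal_length_mm, distance_mm):
    """Width of surface (mm) imaged by one pixel, from the pinhole camera model."""
    return sensor_width_mm * distance_mm / (focal_length_mm * image_width_px)


def pixels_across(feature_mm, gsd_mm):
    """How many pixels a feature of the given size spans."""
    return feature_mm / gsd_mm


# Assumed values: ~17.3mm-wide sensor, 5280px image width (approximate X5S stills),
# hypothetical 45mm focal length and 3m camera-to-fuselage distance.
gsd = ground_sample_distance_mm(17.3, 5280, 45.0, 3000.0)
print(f"GSD: {gsd:.3f} mm/pixel")
print(f"A 2mm defect spans about {pixels_across(2.0, gsd):.1f} pixels")
```

Under these assumptions a 2mm defect covers roughly nine pixels. Because GSD scales with distance divided by focal length, doubling the focal length with optical zoom, or halving the distance, halves the GSD and doubles the number of pixels on the defect.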

Asked how camera technologies other than 2D cameras could be used in drone aircraft inspection, he told Imaging and Machine Vision Europe: ‘We see big potential for 3D cameras that would allow us to detect dents more reliably than with 2D cameras, and even allow us to measure the dent depth automatically. As a prerequisite, the drone would have to fly extremely stably and accurately at a level that we have not reached just yet. So this is indeed a vision for one day in the future.’
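
Automatic dent-depth measurement is described as a future goal, so the following is only a sketch of one simple way it could work, under stated assumptions: fit a reference plane to 3D points on the undamaged skin around the dent (the "rim"), then take the largest perpendicular distance of points inside the dent below that plane. The function names and the rim/dent split are hypothetical, and z is assumed to point outward from the skin. A fuselage is curved, so a local plane is only a reasonable approximation over small patches.

```python
import math


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points):
    """Least-squares plane z = a*x + b*y + c through (x, y, z) points,
    solved from the 3x3 normal equations with Cramer's rule."""
    n = len(points)
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    A = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    det = _det3(A)
    if abs(det) < 1e-12:
        raise ValueError("rim points are degenerate (fewer than three, or collinear)")
    coeffs = []
    for col in range(3):
        Ai = [row[:] for row in A]
        for r in range(3):
            Ai[r][col] = rhs[r]  # replace one column with the right-hand side
        coeffs.append(_det3(Ai) / det)
    return tuple(coeffs)  # (a, b, c)


def dent_depth(rim_points, dent_points):
    """Largest perpendicular distance of dent points below the rim plane
    (same units as the input; zero or negative means nothing lies below)."""
    a, b, c = fit_plane(rim_points)
    norm = math.sqrt(a * a + b * b + 1.0)
    return max((a * x + b * y + c - z) / norm for x, y, z in dent_points)
```

Fitting the plane to the rim only, rather than to every point, keeps the dent itself from dragging the reference surface down and underestimating the depth.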

Visual inspections with the 2D HD cameras generate a large amount of data that needs to be processed. Mainblades is therefore turning to machine learning algorithms to interpret the recorded visual information.

‘We believe that using machine learning to identify and report on potential issues will help to address them in a much faster, easier and safer manner,’ remarked von der Goltz.

The machine learning models are developed by gathering all the images taken during aircraft inspections, together with their metadata (location, exposure, focal length, resolution, aircraft type, environmental conditions), and labelling them using the expertise of aircraft engineers. From these labels, the models learn to perform object detection, or in this case damage detection: predicting the location and type of any damage in an image. For the predictions to be reliable, the data must contain enough examples of each type of damage and cover the full range of situations the drone may encounter, including operating outdoors or in poor lighting.
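
To check whether predicted damage locations agree with the engineers' labels, detection outputs are commonly compared using the intersection over union (IoU) of bounding boxes. The sketch below is a generic evaluation routine, not Mainblades' pipeline; the `Box` type, the 0.5 threshold and the greedy matching order are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    x1: float   # left edge in pixels
    y1: float   # top edge in pixels
    x2: float   # right edge (x2 > x1)
    y2: float   # bottom edge (y2 > y1)
    label: str  # damage type, e.g. "dent" or "crack"


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    w = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    h = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = w * h
    union = (a.x2 - a.x1) * (a.y2 - a.y1) + (b.x2 - b.x1) * (b.y2 - b.y1) - inter
    return inter / union if union > 0 else 0.0


def match_detections(predictions, labels, threshold=0.5):
    """Greedily match predictions (assumed sorted by confidence) to labels.

    A prediction is a true positive if it overlaps an unmatched label of the
    same damage type with IoU >= threshold. Returns (tp, fp, fn).
    """
    unmatched = list(labels)
    tp = fp = 0
    for pred in predictions:
        candidates = [g for g in unmatched if g.label == pred.label]
        best = max(candidates, key=lambda g: iou(pred, g), default=None)
        if best is not None and iou(pred, best) >= threshold:
            unmatched.remove(best)
            tp += 1
        else:
            fp += 1
    return tp, fp, len(unmatched)
```

From the returned counts, precision is tp / (tp + fp) and recall is tp / (tp + fn). Requiring the predicted type to match the label means that a correctly located dent reported as a crack counts as an error.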

‘We are in the process of gathering data for a collection of damage types that are consistent with the definitions in the maintenance manuals of the OEMs,’ von der Goltz explained. ‘Examples of those damage types are lightning strikes, dents, cracks and paint chippings.’
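
Since the training data must contain enough examples of each damage type under each condition, a simple coverage check over the labelled set can reveal gaps before training. This is a hypothetical sketch: the `LabelledImage` fields, the condition categories and the minimum count are illustrative assumptions, not Mainblades' data schema.

```python
from collections import Counter
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class LabelledImage:
    damage_type: str  # e.g. "dent", following the OEM maintenance-manual definitions
    environment: str  # hypothetical category: "hangar" or "outdoor"
    lighting: str     # hypothetical category: "good" or "poor"


def coverage_gaps(records, damage_types, environments, lightings, min_examples):
    """Return ((damage_type, environment, lighting), count) for every combination
    with fewer than min_examples labelled images, sorted alphabetically."""
    counts = Counter((r.damage_type, r.environment, r.lighting) for r in records)
    return sorted(
        (combo, counts[combo])  # Counter returns 0 for unseen combinations
        for combo in product(damage_types, environments, lightings)
        if counts[combo] < min_examples
    )


if __name__ == "__main__":
    records = [LabelledImage("dent", "hangar", "good")] * 40 + [
        LabelledImage("crack", "outdoor", "poor")
    ] * 3
    types = ["lightning strike", "dent", "crack", "paint chipping"]
    for combo, n in coverage_gaps(records, types, ["hangar", "outdoor"], ["good", "poor"], 20):
        print(combo, n)
```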

From the data that drones collect during a complete inspection, it is possible to create a digital 3D copy of the aircraft, known as a digital twin, containing visual information from the complete fuselage, wings and tail section. Any damage the algorithms identify can be mapped onto the digital twin and brought to the attention of aircraft engineers, who can then assess it in more detail if necessary. The digital twin can also be compared with the twin from an earlier inspection to reveal any changes that have since taken place, highlighting anomalies that may require further inspection.
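
Comparing two inspections largely comes down to matching findings by position on the digital twin. A minimal sketch, assuming each finding has already been mapped to 3D coordinates in a common aircraft-fixed frame; the `Finding` type, the `compare_inspections` function and the 5cm tolerance are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    x: float  # metres, in an aircraft-fixed frame shared by all inspections
    y: float
    z: float
    damage_type: str


def _pos(f):
    return (f.x, f.y, f.z)


def compare_inspections(previous, current, tolerance_m=0.05):
    """Classify findings as new, persisting or no longer seen.

    Each current finding is paired with the nearest unmatched previous finding
    of the same type within tolerance_m. Returns (new, persisting, not_seen),
    where persisting holds (previous, current) pairs.
    """
    unmatched_prev = list(previous)
    new, persisting = [], []
    for f in current:
        near = [p for p in unmatched_prev
                if p.damage_type == f.damage_type
                and math.dist(_pos(p), _pos(f)) <= tolerance_m]
        if not near:
            new.append(f)
            continue
        best = min(near, key=lambda p: math.dist(_pos(p), _pos(f)))
        unmatched_prev.remove(best)
        persisting.append((best, f))
    return new, persisting, unmatched_prev
```

Findings that are "no longer seen" may have been repaired or simply missed by the latest flight, so they are candidates for an engineer's review rather than conclusions.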

Mainblades’ drone navigates using lidar and captures images of an aircraft’s fuselage using an HD camera and zoom lens

Once an airline adopts drone technology and gathers more data through automated inspection, it should gradually be able to identify maintenance patterns and predict when repairs will be needed, increasing overall efficiency.
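
As a toy illustration of this idea, and not a method the article attributes to any airline or to Mainblades, repeated measurements of the same finding could be fitted with a linear trend to estimate when it would reach an allowable limit. The function, the linear-growth assumption and the numbers are hypothetical; real allowable limits come from the OEM maintenance manuals, and real damage growth is often not linear.

```python
def predict_limit_crossing(times, sizes, limit):
    """Fit size = slope * time + intercept by least squares and return the
    time at which the fitted size reaches `limit`, or None if it is not growing."""
    n = len(times)
    if n != len(sizes) or n < 2:
        raise ValueError("need at least two (time, size) measurements")
    mean_t = sum(times) / n
    mean_s = sum(sizes) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("measurements must span more than one point in time")
    slope = sum((t - mean_t) * (s - mean_s) for t, s in zip(times, sizes)) / sxx
    if slope <= 0:
        return None  # no growth trend, so no predicted crossing
    intercept = mean_s - slope * mean_t
    return (limit - intercept) / slope


if __name__ == "__main__":
    # Hypothetical: one finding measured at four inspections (flight hours, mm)
    hours = [0, 100, 200, 300]
    size_mm = [2.0, 2.5, 3.0, 3.5]
    print(predict_limit_crossing(hours, size_mm, limit=5.0))
```

With these made-up measurements the fitted trend reaches 5mm at roughly 600 flight hours.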

Cutting red tape

Drone visual inspections have already attracted interest from major aerospace firms and airlines: Airbus, EasyJet, American Airlines, Austrian Airlines and Air New Zealand have all trialled the technology.

Mainblades is now working on getting its drone technology validated by maintenance, repair and overhaul organisations for use in aircraft inspection, and on certifying it with the European Union Aviation Safety Agency (EASA), in co-operation with the Dutch civil aviation authority, for outdoor flights at airports. The firm envisages that within one to two years its drones will be fully certified to perform maintenance operations in controlled airspace, allowing them to work hand in hand with aircraft engineers on visual aircraft inspection.