Computer vision (CV) has been widely adopted in many Internet of Things (IoT) devices across various use cases, ranging from smart cameras and smart home appliances to smart retail, industrial applications, access control and smart doorbells. As these devices are constrained by size and are often battery powered, they need highly efficient compute platforms.

One such platform is the MCU (microcontroller unit), which has low-power and low-cost characteristics, alongside CV and machine learning (ML) compute capabilities.

However, running CV on the MCU will undoubtedly increase its design complexity due to the hardware and software resource constraints of the platform.

Therefore, IoT developers need to determine how to achieve the required performance, while keeping power consumption low. In addition, they need to integrate the image signal processor (ISP) into the MCU platform, while balancing the ISP configuration and image quality.

One processor that fulfills these requirements is Arm’s Cortex-M85, which is Arm’s most powerful Cortex-M CPU to date. With a vector extension that provides 128-bit SIMD (single instruction, multiple data) processing, Cortex-M85 accelerates CV workloads as well as overall MCU performance. IoT developers can leverage Arm’s software ecosystem, the ML embedded evaluation kit and guidance on how to integrate the ISP with the MCU to unlock CV and ML quickly and easily on a highly efficient MCU platform.

Arm brings advanced computing to MCUs

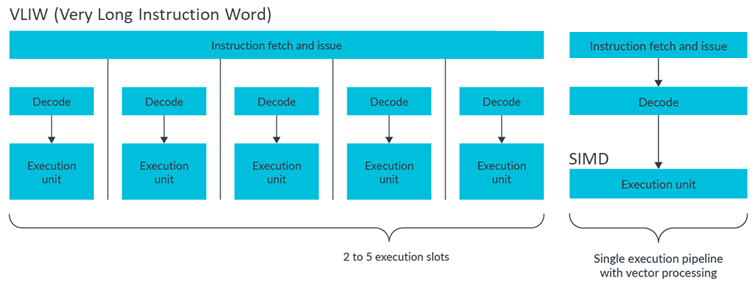

As a first step, running CV workloads requires more performance from the MCU. At the CPU architecture level, there are several ways to enhance the MCU’s performance, including superscalar execution, VLIW (very long instruction word), and SIMD. For the Cortex-M85, Arm chose to adopt SIMD, in which a single instruction operates on multiple data elements at once, as it’s the best option for balancing performance and power consumption.

Figure 1: The comparison between VLIW and SIMD

Arm’s Helium technology, which is the M-Profile Vector Extension (MVE) for the Cortex-M processor series, brings vector processing to the MCU. Helium is an extension to the Armv8.1-M architecture that significantly enhances performance for CV and ML applications on small, low-power IoT devices. It also utilises the largest software ecosystem available to IoT developers, including optimised sample code and neural networks.
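To make the 128-bit vector width concrete, the short Python sketch below works out how many elements fit in one vector for the 8-, 16- and 32-bit integer types common in CV and quantised ML, and how many vector-sized steps a buffer needs compared with an element-at-a-time loop. It is only arithmetic, not a performance model: real speed-ups depend on the core's pipeline, the memory system and the compiler. The function names and the 96 x 96 example buffer are illustrative assumptions, not part of any Arm API.

```python
import math

VECTOR_BITS = 128  # width of a Helium (MVE) vector register


def lanes_per_vector(element_bits: int) -> int:
    """Number of elements of the given width that fit in one 128-bit vector."""
    if element_bits <= 0 or VECTOR_BITS % element_bits != 0:
        raise ValueError("element width must divide the vector width")
    return VECTOR_BITS // element_bits


def vector_steps(num_elements: int, element_bits: int) -> int:
    """Vector-sized steps needed to cover a buffer (the last step may be partial)."""
    return math.ceil(num_elements / lanes_per_vector(element_bits))


if __name__ == "__main__":
    pixels = 96 * 96  # hypothetical 96 x 96 single-channel input image
    for bits in (8, 16, 32):
        print(
            f"{bits:>2}-bit elements: {lanes_per_vector(bits):>2} lanes per vector, "
            f"{vector_steps(pixels, bits)} vector steps vs {pixels} scalar steps"
        )
```

For 8-bit data, such as greyscale pixels or int8 network weights, one instruction can touch 16 elements, which is why narrow data types tend to benefit most from vector processing.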

Software ecosystem on MCUs to facilitate CV and ML

To support the Cortex-M CPUs, Arm has published various materials that make it easier to start running CV and ML. These include the Arm ML embedded evaluation kit.

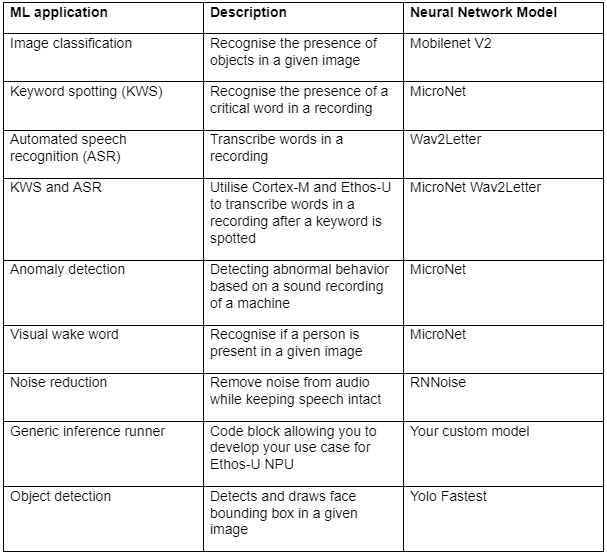

The evaluation kit provides ready-to-use ML applications for the embedded stack. As a result, IoT developers can experiment with the already-developed software use cases and then create their own applications. The example applications with ML networks are listed in the table below.

The Arm ML embedded evaluation kit

Integrating the ISP on the MCU

The ISP is an essential technology to unlock CV, as the image stream is the input source. However, there are certain points that we must consider when integrating the ISP on the MCU platform.

IoT edge devices typically use a lower image sensor resolution (below 1-2MP) and a lower frame rate (15-30fps or even less). Also, the image signal processing is not always active. A higher quality scaler within the ISP can therefore drop the resolution to sub-VGA (VGA being 640 x 480) to, for example, minimise the data ingress to the NPU. This means that the ISP only uses the full resolution when needed.
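As a rough illustration of how much data the scaler can remove, the sketch below compares the size of one full-resolution frame with one downscaled frame. The specific modes are assumptions chosen for the example: 1920 x 1080 stands in for a sensor of about 2MP, 320 x 240 for a sub-VGA output, and 3 bytes per pixel for RGB888. The helper names are hypothetical.

```python
def frame_bytes(width: int, height: int, bytes_per_pixel: int) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def reduction_factor(full: tuple, scaled: tuple, bytes_per_pixel: int) -> float:
    """How many times smaller the scaled frame is than the full-resolution one."""
    return frame_bytes(*full, bytes_per_pixel) / frame_bytes(*scaled, bytes_per_pixel)


if __name__ == "__main__":
    full_res = (1920, 1080)  # assumed sensor mode, about 2MP
    sub_vga = (320, 240)     # assumed sub-VGA scaler output
    bpp = 3                  # assumed RGB888

    print("full frame bytes  :", frame_bytes(*full_res, bpp))
    print("scaled frame bytes:", frame_bytes(*sub_vga, bpp))
    print("reduction factor  :", reduction_factor(full_res, sub_vga, bpp))
```

Under these assumptions each frame handed to the NPU is 27 times smaller than the full-resolution frame, which is the kind of saving that makes it worthwhile to keep full resolution for the moments when it is needed.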

ISP configurations can also affect power, area, and efficiency. Therefore, it is worth asking the following questions to save power and area.

- Is the output for human vision, computer vision, or both?

- What is the required memory bandwidth?

- How many ISP output channels will be needed?
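These questions interact: every extra output channel adds its own stream of frames to memory. The sketch below estimates the write bandwidth of the ISP outputs for two hypothetical configurations, one with a single small CV channel and one that adds a larger channel for human viewing. The channel sizes, pixel formats and frame rates are assumptions chosen for illustration, and the estimate covers only ISP output writes, not reads by the CPU or NPU.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputChannel:
    """One ISP output stream (all values are example assumptions)."""
    name: str
    width: int
    height: int
    bytes_per_pixel: int
    fps: int

    def bandwidth_bytes_per_s(self) -> int:
        # Bytes written to memory per second by this channel.
        return self.width * self.height * self.bytes_per_pixel * self.fps


def total_bandwidth(channels) -> int:
    """Sum of the write bandwidth of all configured output channels."""
    return sum(ch.bandwidth_bytes_per_s() for ch in channels)


# Hypothetical configurations: CV only, and CV plus a display channel.
CV_ONLY = [OutputChannel("cv", 320, 240, 3, 15)]
CV_AND_DISPLAY = CV_ONLY + [OutputChannel("display", 1280, 720, 2, 30)]

if __name__ == "__main__":
    for label, cfg in (("CV only", CV_ONLY), ("CV + display", CV_AND_DISPLAY)):
        print(f"{label}: {total_bandwidth(cfg) / 1e6:.1f} MB/s")
```

In this example the display channel dominates the total, so a design that only needs computer vision can drop it and save both bandwidth and the ISP area that serves it.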

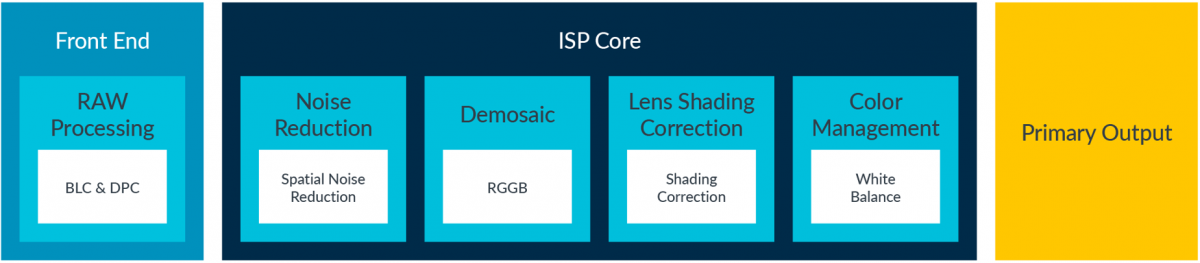

An MCU platform is usually resource-constrained, with limited memory. Integrating an ISP requires the MCU to run the ISP driver, which includes the ISP’s code, data, and control LUTs (look-up tables). Therefore, once the ISP configuration has been decided, developers need to tailor the driver firmware accordingly, removing unused code and data to fit within the memory limits of the MCU platform.

Figure 2: An example of concise ISP configuration

Another consideration when integrating the ISP with the MCU is lowering the frame rate and resolution. In many cases, it is best to consider the convergence speed of the ‘3As’: auto-exposure, auto-white balance and auto-focus. These will likely require a minimum of five to ten frames before settling. If the frame rate is too slow, this might be problematic for your use case. For example, it could mean a two to five second delay before a meaningful output can be captured and, given the short power-on window, there is a risk of missing critical events. Moreover, if the clock frequency of the image sensor is dropped too low, it is likely to introduce nasty rolling shutter artifacts.
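The relationship between frame rate and 3A settling delay is simple division, sketched below. The five to ten frame range comes from the text; the 2fps and 30fps frame rates and the 0.5 second target are assumptions for the example. A rate of about 2fps is one value consistent with the two to five second delay mentioned above.

```python
def settle_time_s(frames_to_converge: int, fps: float) -> float:
    """Seconds before the 3A loops settle, given frames needed and frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return frames_to_converge / fps


def min_fps_for(frames_to_converge: int, max_delay_s: float) -> float:
    """Lowest frame rate that still settles within the allowed delay."""
    if max_delay_s <= 0:
        raise ValueError("max_delay_s must be positive")
    return frames_to_converge / max_delay_s


if __name__ == "__main__":
    for fps in (2, 30):  # assumed example frame rates
        low = settle_time_s(5, fps)
        high = settle_time_s(10, fps)
        print(f"{fps:>2} fps: 3A settles in {low:.2f} to {high:.2f} s")
    # Frame rate needed if ten frames must settle within an assumed 0.5 s window.
    print("min fps for 10 frames in 0.5 s:", min_fps_for(10, 0.5))
```

Working backwards from the power-on window in this way gives a floor for the frame rate before any power optimisation is applied.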

Summary

Enabling CV and ML on MCU platforms is part of the next wave of the IoT evolution. However, the constraints of the MCU platform can increase the design complexity and difficulty. Enabling vector processing on the MCU through the Cortex-M85 and leveraging Arm’s software ecosystem can provide enough compute performance and reduce this design complexity. In addition, integrating a concise ISP is a sensible way for IoT devices to speed up and unlock CV and ML tasks on low-power, highly efficient MCU platforms.