Dr Konstantin Schauwecker, CEO of Nerian Vision, describes the firm’s stereo vision sensor for fast depth perception with FPGAs

Fast and accurate three-dimensional perception is a common requirement in robotics, industrial automation, quality assurance, logistics and many other fields. Today, active 3D camera systems, which emit light in the visible or invisible spectral range, are widely used for such applications.

Under controlled conditions, these systems can provide very accurate measurements. In difficult lighting situations, however, they reach their limits. Depth perception using active camera systems is only possible if the emitted light clearly outshines the ambient light, which is difficult to achieve in bright environments such as daylight. For applications such as automated logistics and mobile service robotics, where the prevailing lighting conditions often cannot be controlled, other sensors must be used. Another problem for active sensors is measuring over distance: the greater the distance, the larger the area to be illuminated.

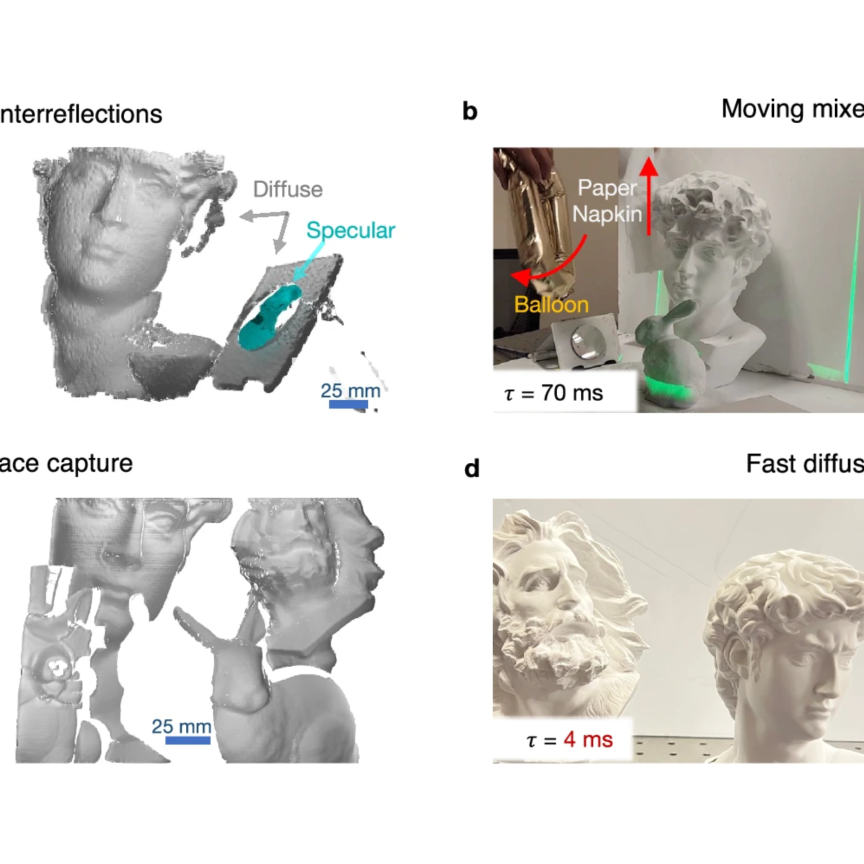

A possible alternative is passive stereo vision. The environment is captured by two or more cameras with different observation positions. Intelligent image processing can then reconstruct the spatial depth and thus the three-dimensional structure of the imaged environment. Since no light is emitted during stereo vision, the brightness of the environment is of no importance, and there is no fixed upper limit for the maximum measurable distance. Furthermore, only one image per camera is required, making stereo vision particularly suitable for dynamic applications.
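As a rough illustration of how depth follows from two views: for a rectified camera pair, the standard pinhole triangulation relation is Z = f · B / d, where d is the disparity (the horizontal shift of a point between the two images, in pixels), f the focal length in pixels and B the distance between the cameras. The sketch below is generic and is not taken from Nerian's implementation; the function name and the example values for focal length and baseline are assumptions chosen only for illustration.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth in metres of a point seen by a rectified stereo pair.

    Uses the pinhole triangulation relation Z = f * B / d.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical setup: 1000 px focal length, 25 cm baseline.
for d in (100, 50, 10, 1):
    z = depth_from_disparity(d, focal_length_px=1000, baseline_m=0.25)
    print(f"disparity {d:3d} px -> depth {z:6.1f} m")
```

Depth is inversely proportional to disparity, so distant objects simply produce small disparities. There is no hard cut-off in range, although the depth resolution becomes coarser as the disparity approaches zero.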

Despite these advantages, stereo vision is currently rarely used in industrial applications. One of the main reasons for this is the enormous computing power required for image processing. Take two cameras with a resolution of 720 × 480 pixels and a frame rate of 30Hz. If the maximum disparity, that is, the difference between the positions of two matching pixels in the two camera images, is limited to 100 pixels, more than one billion pixel comparisons have to be performed per second.
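The figure of one billion can be checked with a few lines of arithmetic. This is a minimal sketch that assumes an exhaustive search, where every pixel of one image is compared against every candidate offset up to the maximum in the other image; the function name is hypothetical.

```python
def comparisons_per_second(width, height, fps, max_disparity):
    """Pixel comparisons per second for an exhaustive search over all offsets."""
    return width * height * fps * max_disparity


rate = comparisons_per_second(width=720, height=480, fps=30, max_disparity=100)
print(f"{rate:,} comparisons per second")  # 1,036,800,000
```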

To make things worse, pure image comparison is not enough if high-quality results are to be achieved. Modern methods of stereo image processing rely on optimisation methods that try to find an optimal assignment of matching pixels from both camera images. This drastically improves quality, but it also increases the computing load many times over.
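The "pure image comparison" mentioned above can be sketched as a winner-takes-all search: for each pixel, try every allowed offset and keep the one with the smallest intensity difference. The example below is a deliberately simple illustration on a single image row, using a hypothetical function name and made-up pixel values; it is not the method used in Nerian's hardware. Optimisation-based methods go further by also taking the assignments of neighbouring pixels into account, which is where the extra computing load comes from.

```python
def match_scanline(left, right, max_disparity):
    """Winner-takes-all matching of one image row by absolute difference.

    Returns, for each pixel of the left row, the offset d (0..max_disparity)
    whose right-row pixel at column x - d is most similar.
    """
    disparities = []
    for x, value in enumerate(left):
        best_d, best_cost = 0, float("inf")
        # Offsets are capped so that x - d never leaves the row.
        for d in range(min(max_disparity, x) + 1):
            cost = abs(value - right[x - d])
            if cost < best_cost:
                best_d, best_cost = d, cost
        disparities.append(best_d)
    return disparities


# Made-up rows: the right row shows the same scene shifted by 2 pixels.
left_row = [5, 9, 10, 20, 30, 40, 50, 60]
right_row = [10, 20, 30, 40, 50, 60, 70, 80]
print(match_scanline(left_row, right_row, max_disparity=3))
```

Away from the left border, where not every offset can be tried, the search recovers the 2-pixel shift. Real images contain noise and repetitive or uniform regions, where such a per-pixel decision becomes unreliable.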

If the image processing is left to ordinary software, one must inevitably choose between fast processing and exact results. This can be remedied by offloading image processing to particularly powerful high-end graphics cards. However, these have a high power consumption, which prevents them from being used in mobile systems in particular.

Nerian Vision has developed a special hardware solution for stereo image processing based on an FPGA. Mapping the image processing algorithms directly into hardware allows massive parallelisation, which leads to a large increase in performance compared to a purely software-based solution. FPGAs are also energy-efficient, which allows them to be used on mobile systems.

Nerian’s SceneScan 3D sensor can calculate depth data for 30 million pixels per second using the FPGA. This corresponds roughly to a resolution of 2 megapixels at 15fps, 0.5 megapixels at 65fps, or 0.3 megapixels at 100fps. Power consumption remains less than 10W. This makes SceneScan particularly suitable for battery-powered mobile systems such as mobile service or logistics robots.
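The quoted operating points can be related to the overall pixel rate by multiplying resolution by frame rate. The short sketch below uses only the figures given in the text; the function and variable names are hypothetical.

```python
# Operating points quoted in the text: (resolution in megapixels, frames per second).
operating_points = [(2.0, 15), (0.5, 65), (0.3, 100)]


def throughput_mpx_per_s(megapixels, fps):
    """Pixel rate in millions of pixels per second."""
    return megapixels * fps


for megapixels, fps in operating_points:
    rate = throughput_mpx_per_s(megapixels, fps)
    print(f"{megapixels} MP at {fps} fps: {rate:.1f} million pixels/s")
```

The three combinations come to about 30, 32.5 and 30 million pixels per second respectively, so resolution can be traded against frame rate at a roughly constant pixel rate.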

Nerian hopes that with this technology, passive stereo vision will become more widely used in industrial applications. It makes stereo vision a very promising sensor technology for applications that require fast and robust 3D measurements.

Dr Konstantin Schauwecker spoke at the Embedded Vision Europe event in Stuttgart, Germany in October.
