A team of scientists, led by the University of Glasgow’s Optics Group, has built a 3D imager able to capture video at 5Hz through a single optical fibre.

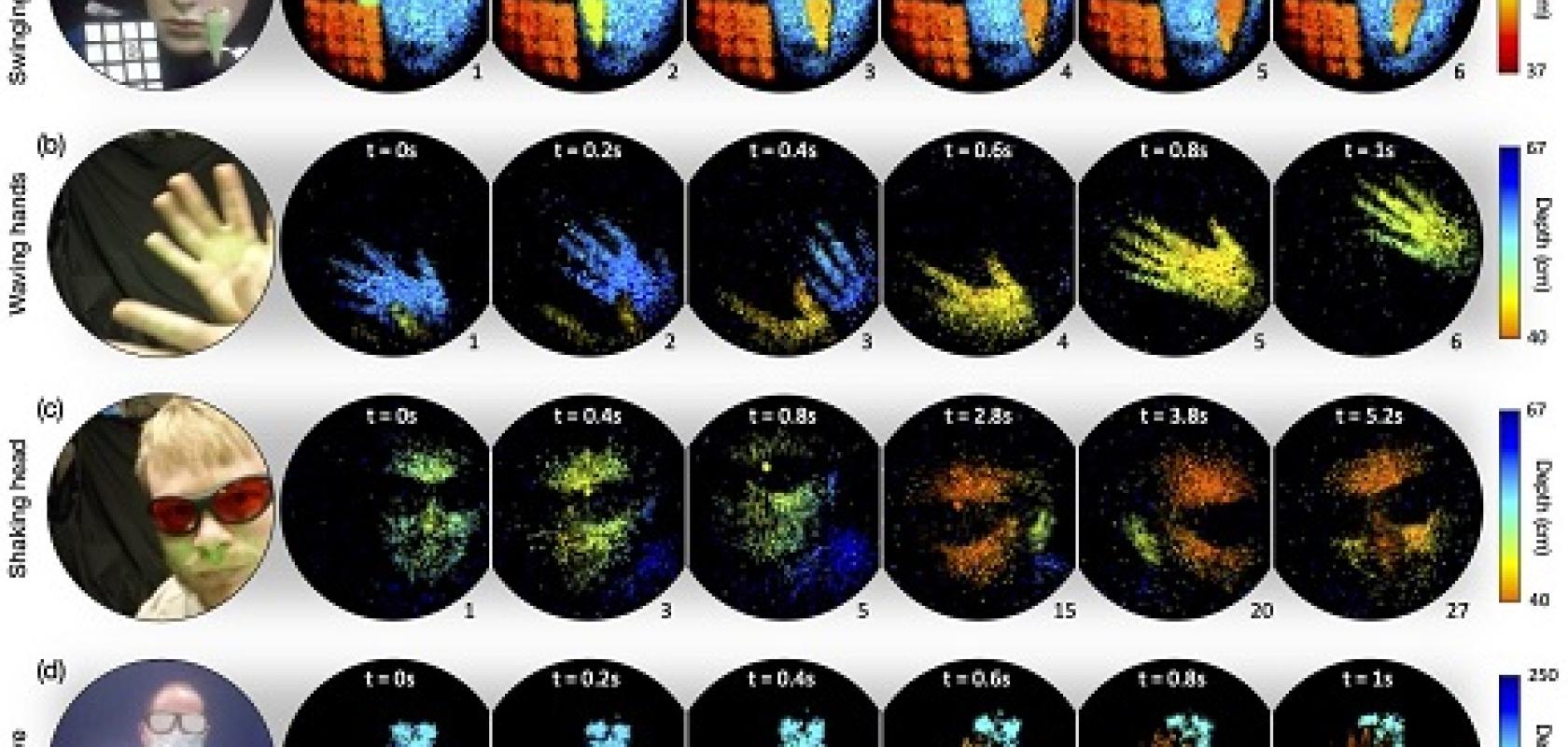

The prototype system delivers images through a 40cm long optical fibre, each frame containing up to approximately 4,000 independently resolvable features, with a depth resolution of around 5mm.

The work, which has been published in the journal Science, has applications in industrial inspection, environmental monitoring and medical imaging.

Normally, when light shines through an optical fibre, crosstalk between modes scrambles the light, making the image unrecognisable. To overcome this, the team used advanced beam-shaping techniques to pattern the laser light entering the fibre so that it forms a single spot at the output. That spot is then scanned over the scene, and the system measures the intensity of the light backscattered into a second fibre, which gives the brightness of each pixel in the image.
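The scanning step can be pictured as a simple loop: visit each spot position, record one intensity reading, and store it as a pixel. The sketch below is only an illustration of that idea, not the team's software. The function `scan_image`, the callback `measure_backscatter` and the tiny `scene` grid are hypothetical stand-ins for the real beam-shaping hardware and detector.

```python
def scan_image(measure_backscatter, rows, cols):
    """Build an image one pixel at a time from single-detector readings.

    measure_backscatter(r, c) is a hypothetical callback standing in for
    "steer the spot to position (r, c) and read the returned intensity".
    """
    image = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            image[r][c] = measure_backscatter(r, c)
    return image


# Assumed toy scene: reflectivity values the detector would see at each spot.
scene = [
    [0.1, 0.9],
    [0.5, 0.3],
]

image = scan_image(lambda r, c: scene[r][c], rows=2, cols=2)
```

Because only one intensity is measured per spot position, the number of pixels in each frame is set by how many positions the spot visits.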

By using a pulsed laser, the device also measures the time of flight of the light and hence the range of every pixel in the image. These 3D images can be recorded at distances from a few tens of millimetres to several metres away from the fibre end, with millimetric distance resolution and frame rates high enough to perceive motion at close to video quality.
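The link between timing and range is the standard time-of-flight relation: the pulse travels to the object and back, so the range is half the round-trip distance. The sketch below shows that arithmetic under simplifying assumptions. It treats the light as travelling in air at the vacuum speed of light and ignores the fixed delay inside the fibre. The function names are illustrative and not taken from the paper.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # defined value, in vacuum


def range_from_round_trip(round_trip_s):
    """Range in metres for a measured round-trip time in seconds."""
    # The pulse covers the distance twice (out and back), hence the factor 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_range(distance_m):
    """Round-trip time in seconds for an object at the given range."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


# Timing difference that corresponds to the quoted ~5mm depth resolution.
timing_for_5mm_s = round_trip_for_range(0.005)  # roughly 33 picoseconds
```

On these assumptions, telling apart two surfaces 5mm apart in depth means resolving arrival times that differ by a few tens of picoseconds.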

Professor Miles Padgett, Royal Society Research Professor at the University of Glasgow and principal investigator for Quantic, the UK Hub for Quantum Enhanced Imaging, said: ‘In applications like endoscopy and borescopy, imaging is traditionally achieved by using a bundle of optical fibres, one fibre for every pixel in the image, resulting in devices the thickness of a finger.

‘As an alternative, we are developing a new technique for imaging through a single fibre the width of a human hair. Our ambition is to create a new generation of single-fibre imaging devices that can produce 3D images of remote scenes.’

Currently, the multimode fibre must remain in a fixed position after calibration. Future research will look at reducing the calibration time and managing the dynamic nature of bending fibres. The team aim to work with industry to develop this research into functional technology within the next ten years.

The project is a collaboration between physicists at the University of Glasgow, University of Exeter, Fraunhofer Centre for Applied Photonics Glasgow, Leibniz Institute of Photonic Technology, Germany, and Brno University of Technology, Czech Republic.

Grain of salt

Sticking with micro-sized cameras, researchers at Princeton University and the University of Washington have developed a camera the size of a coarse grain of salt.

The device, half a millimetre wide, uses an optical system called a metasurface. The surface is studded with 1.6 million cylindrical posts, each with a unique geometry and functioning like an optical antenna.

Varying the design of each post shapes the optical wavefront, and machine learning algorithms, which model how the posts interact with light, then produce the image.

The metasurface camera can produce full-colour images on par with a conventional compound camera lens 500,000 times larger in volume, the researchers reported in a paper in Nature Communications.

Like the fibre optic imager, the metasurface camera could be used for minimally invasive endoscopy, as well as for sensors for robots.

The researchers also say that arrays of thousands of such cameras could be used for full-scene sensing, turning surfaces into cameras.

Other metasurface lenses have suffered from image distortions, small fields of view, and limited ability to capture RGB images.

In an article for Princeton University, Ethan Tseng, a computer science PhD student at Princeton who co-led the study, said: ‘It’s been a challenge to design and configure these little nano-structures to do what you want. For this specific task of capturing large field of view RGB images, it was previously unclear how to co-design the millions of nano-structures together with post-processing algorithms.’

Joseph Mait, a consultant at Mait-Optik, added: ‘Although the approach to optical design is not new, this is the first system that uses a surface optical technology in the front end and neural-based processing in the back.’

The imaging performance is achieved through a combination of the design of the metasurface features together with the post-detection processing.

The metasurface is made from silicon nitride in a process compatible with standard semiconductor manufacturing, which means it can be mass-produced at lower cost than the lenses in conventional cameras.

The group is now working to add more computational abilities to the camera, such as object detection and other sensing modalities relevant for medicine and robotics.

--

The University of Glasgow’s paper is titled ‘Time-of-flight 3D imaging through multimode optical fibres’. The research was supported by funding from the Engineering and Physical Sciences Research Council (EPSRC), the UK National Quantum Technology Programme (UKNQTP), the European Research Council (ERC), the Royal Academy of Engineering, and the Royal Society.

The Princeton University work was supported in part by the National Science Foundation, the US Department of Defense, the UW Reality Lab, Facebook, Google, Futurewei Technologies, and Amazon.