Self-driving cars and electric vehicles are where automotive companies are investing for the future. Both involve imaging, directly in the case of autonomous vehicles, and indirectly for quality control in the factories being built to produce electric motors. At the beginning of July, Volkswagen and Ford announced a joint venture, whereby VW is investing in Ford’s autonomous driving company Argo AI, valued at $7bn, while Ford will get access to VW’s electric vehicle technology. Ford says it will design and build at least one high-volume, fully electric vehicle in Europe starting in 2023 using VW’s Modular Electric Toolkit. VW started developing the electric vehicle platform in 2016, investing $7bn; it plans to build 15 million electric cars in the next decade.

The investment in e-mobility opens up opportunities for factory automation and machine vision. Thibault Bautze, sales manager at Blackbird Robotersysteme, a provider of scanning systems for laser welding, said installations of its technology are rising now that the e-mobility market is ramping up. He also noted many laser system integrators and suppliers were showing copper hairpin laser welding at the recent Laser World of Photonics trade fair – the hairpins form part of electric motors.

Vision is used in many of these types of laser welding machines, according to Bautze, especially for laser welding where the scanner remains static. Here, cameras are used to detect a part’s position and align the weld seam to the geometry of the workpiece before welding. ‘This technology has been around for 10 years, but we see a huge market demand, especially for e-mobility applications,’ Bautze said, citing the welding of copper hairpins as an example.

The workpiece doesn’t move when making a hairpin weld. It’s therefore efficient to image the entire workpiece before welding, to pre-program the path of the laser beam.
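As a rough illustration of aligning a pre-programmed weld path to a detected part position, the sketch below applies a 2D rotation and shift to a nominal seam. It is not Blackbird Robotersysteme's software: the function name `align_weld_path`, the pose parameters and the example coordinates are all assumptions made for illustration.

```python
import math


def align_weld_path(nominal_path, dx, dy, theta_deg):
    """Rotate nominal (x, y) points by theta_deg about the origin, then shift by (dx, dy).

    The pose (dx, dy, theta_deg) is assumed to come from a camera measurement
    of the workpiece taken before welding starts.
    """
    t = math.radians(theta_deg)
    c, s = math.cos(t), math.sin(t)
    return [(c * x - s * y + dx, s * x + c * y + dy) for x, y in nominal_path]


# Hypothetical nominal seam in mm, expressed in the scanner's x-y frame
nominal = [(0.0, 0.0), (10.0, 0.0)]

# Hypothetical pose offset found by image processing: 1.5mm, -0.5mm, 90 degrees
aligned = align_weld_path(nominal, dx=1.5, dy=-0.5, theta_deg=90.0)
```

Because the workpiece is stationary, a correction of this kind only needs to be computed once per part, before the laser is switched on.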

A Blackbird Robotersysteme scanner has its own camera; image processing is via the same user interface and software that takes care of scanner control. The user can directly assign a camera task to map the path of the beam.

The scanners use standard industrial cameras, working in the visible or near infrared wavelength range – imaging at any wavelength less than 1µm normally works, because the scanner optics transmit light at these wavelengths.

‘Image quality is important,’ said Bautze. ‘We have to consider all kinds of optical distortion generated by the scanner, especially when moving around in the scanner envelope. The camera is calibrated to operate across the entire working envelope of the scanner, to make sure that wherever the camera focuses in the x-y envelope of the scanner, the picture is sharp and we know exactly where in the image the laser will hit the workpiece.’

Blackbird Robotersysteme uses its own illumination for its imaging system: four LED strips mounted close to the welding head, with a filter on the lens to cut out ambient light.

‘Customers want one machine for welding different workpieces and parts from different suppliers,’ Bautze continued. ‘The conditions of the welding process might change over time. If this is a fully automated machine, it’s important that the image processing takes care of detecting the workpiece and aligning the welding process, or even skipping the welding process if the machine finds the workpiece is not what it’s supposed to be. It’s a last line of defence, because once you switch on a laser you’ve got a lot of heat in the laser cabin, and if the workpiece is incorrect, the beam could end up welding into the jigs, or generating back reflections that could damage the machine.’
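The ‘last line of defence’ Bautze describes could be pictured as a simple gate that releases the laser only when the vision result is plausible. This is a minimal sketch under assumptions: the function `should_weld`, the match score and the threshold values are hypothetical, and a production system would apply its own criteria.

```python
def should_weld(match_score, offset_mm, min_score=0.8, max_offset_mm=2.0):
    """Return True only if the part was recognised and lies within tolerance.

    match_score: hypothetical 0..1 confidence that the imaged part is the expected one.
    offset_mm:   hypothetical deviation of the detected part from its nominal position.
    """
    recognised = match_score >= min_score
    in_tolerance = abs(offset_mm) <= max_offset_mm
    return recognised and in_tolerance


# Expected part, slightly displaced: welding may proceed
ok = should_weld(match_score=0.95, offset_mm=0.4)

# Unrecognised part: the welding step is skipped
skipped = not should_weld(match_score=0.30, offset_mm=0.4)
```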

Questions of quality

Quality assurance is a big part of automotive production. The first step is to document the process – the images of the workpiece, the coordinates of the weld seam, the laser power that was applied, the welding program, etc, all need to be recorded, Bautze said.

Inspecting the seam after welding depends on the application. Some welding processes are very robust, and so it makes no sense to inspect the finished part, according to Bautze. Alternatively, manufacturers will use other process monitoring methods, such as photodiode-based in-process monitoring.

Blackbird Robotersysteme has developed an optical coherence tomography (OCT) monitoring system for its welding head. Here, OCT is used for seam tracking during welding, which is especially useful for on-the-fly welding where the scanner is moving above the workpiece.

‘Seam tracking requires an edge or geometric feature, which, for fillet welds, is a feature that is seen in the z-direction, a change in the height of the workpiece,’ explained Bautze. The laser scanner can weld in all directions and over a large volume. Triangulation wouldn’t work as it records a height profile in one direction.

OCT is used to scan the workpiece and pinpoint the edge as the weld is made. In this way, the OCT images feed back to the welding head to steer the laser beam.
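One simple way to picture locating a fillet edge is to look for the largest height jump in a profile measured across the seam. The sketch below assumes the OCT data has already been reduced to a list of heights at evenly spaced lateral positions; the function name, sample spacing and threshold are illustrative assumptions, not the method used in Blackbird Robotersysteme's system.

```python
def find_step_edge(heights, min_step):
    """Return index i of the largest jump between samples i and i + 1.

    Returns None if no jump reaches min_step, i.e. no edge is visible.
    """
    best_i, best_jump = None, 0.0
    for i in range(len(heights) - 1):
        jump = abs(heights[i + 1] - heights[i])
        if jump > best_jump:
            best_i, best_jump = i, jump
    return best_i if best_jump >= min_step else None


# Hypothetical height profile in mm, sampled every 0.05mm across the seam
profile = [0.00, 0.01, 0.00, 0.02, 1.00, 1.01, 0.99]
spacing_mm = 0.05

edge_index = find_step_edge(profile, min_step=0.5)

# Lateral position of the edge, taken midway between the two samples
edge_position_mm = (edge_index + 0.5) * spacing_mm
```

The difference between this edge position and the position the beam was expected to follow is the kind of correction that could be fed back to steer the laser.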

‘OCT was developed for remote laser welding, the most prominent application of which is welding car doors,’ Bautze said. ‘Car doors are huge, which is why you need a robot and scanner to get a good duty cycle.’

Car doors used to be made with overlap welds, where it doesn’t matter too much if the position of the seam experiences a small amount of jitter – there’s enough space to position the weld. But with the new generation of vehicles, aluminium is often used for car doors, and fillet welds instead of overlap welds – Bautze said switching from overlap to fillet welds could save a couple of hundred grams per door, which improves fuel consumption.

‘If you use fillet welds you need to hit the edge of the workpiece with the laser exactly,’ Bautze continued. ‘This is why you need seam tracking for the 3D scanner, because 3D scanners are needed to weld a door in a short time.’

OCT works in the kilohertz range between 50kHz and 200kHz. Blackbird Robotersysteme has sensors that can track seams at welding speeds of up to 8m/min.
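To put these figures in proportion, the short calculation below estimates how far the weld advances between two consecutive OCT measurements. It assumes the 50kHz to 200kHz figures are measurement rates and that the head moves at the full 8m/min; in practice the measurements are spread across scan lines rather than taken at a single point, so this is only an order-of-magnitude sketch.

```python
def travel_per_measurement_um(speed_m_per_min, rate_hz):
    """Distance in micrometres that the weld advances between two measurements."""
    speed_um_per_s = speed_m_per_min * 1e6 / 60.0
    return speed_um_per_s / rate_hz


# Welding speed and rates quoted in the text
at_50khz = travel_per_measurement_um(8.0, 50_000)
at_200khz = travel_per_measurement_um(8.0, 200_000)

print(f"{at_50khz:.2f} um per measurement at 50kHz")
print(f"{at_200khz:.2f} um per measurement at 200kHz")
```

Under these assumptions the weld moves about 2.7µm per measurement at 50kHz and about 0.7µm at 200kHz, which suggests why many measurements are available for each scan line across the seam.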

The system images through the scanner optics coaxially. It is also able to scan the workpiece after welding to get a height profile of the weld seam for a quality assessment and documentation.

‘It’s very complex; we’re talking about on-the-fly welding with a six-axis robot, 3D scanner plus the OCT. It’s not a turnkey product yet; we’ve got customers working on it,’ Bautze said.

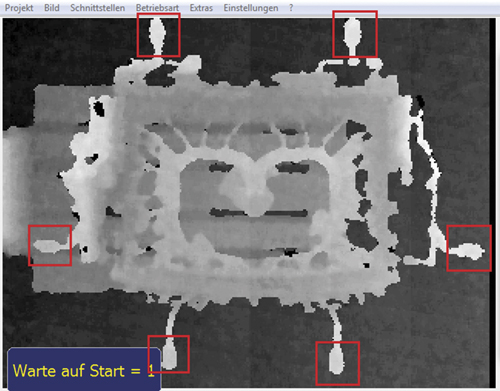

Machine terminal with direct inspection results of 3D data of a die-cast cylinder head. Credit: VisionTools Bildanalyse Systeme and IDS

Blackbird Robotersysteme first showed OCT for laser welding in 2015. ‘There are not too many people able to work with remote laser welding, because it requires a lot of resources,’ Bautze added.

‘These companies have been working on this for four years and the first real business cases will be shown to the customers in one or two years – real applications and not just lab applications. It’s not just the OCT technology; it’s integrating OCT into the machine, the welding process, the customer, the workpiece – it’s a big deal for everyone involved in implementing it,’ he said.

Worth its weight

Aluminium is now a much more common material in modern vehicles, not just in car doors, but in many other die-cast parts designed to reduce weight, such as engine components, transmission housings, and chassis parts.

German system builder VisionTools Bildanalyse Systeme has developed a 3D stereo camera system to measure the geometry of die-cast components at the Mercedes-Benz plant in Esslingen-Mettingen. The system is mounted directly in the production line, with a robot picking up complex parts, such as cylinder heads, and positioning them in front of the camera to make the measurements.

The system uses a 3D stereo camera from IDS, the Ensenso N35, along with a built-in light pattern projector to increase imaging accuracy. Image acquisition and processing take between 0.3 and 1.2 seconds per component position.

Since the distance between the two lenses and their viewing angles, as well as the focal length of the optics, are known, the Ensenso software can convert the differences between the two camera images into known lengths by triangulation. It can determine the 3D coordinates of the object point for each individual image pixel and merge them into a 3D point cloud of the component.
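The textbook form of stereo triangulation can be sketched as follows. It assumes an idealised, rectified pinhole camera pair, and it is not the Ensenso software or its API; the function name and all numbers are hypothetical.

```python
def triangulate(u, v, disparity_px, focal_px, baseline_mm, cx, cy):
    """Return (X, Y, Z) in mm for pixel (u, v) of a rectified stereo pair.

    disparity_px: horizontal shift of the same object point between the two images.
    focal_px:     focal length expressed in pixels.
    baseline_mm:  distance between the two lenses.
    cx, cy:       principal point (image centre) in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_mm / disparity_px
    x = (u - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return (x, y, z)


# Hypothetical values: 1000px focal length, 100mm baseline, 50px disparity
point = triangulate(u=740, v=380, disparity_px=50.0,
                    focal_px=1000.0, baseline_mm=100.0, cx=640.0, cy=480.0)
```

Repeating this for every pixel that has a valid disparity gives the kind of 3D point cloud described above; a larger disparity corresponds to a point closer to the camera.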

Die-cast runner beans in 3D grey value levels. Credit: VisionTools Bildanalyse Systeme and IDS

The Flex View projection technology integrated in the N35 model increases the accuracy of the measurement result. The position of the projector mask in the light beam can be shifted linearly in very small steps. Consequently, the projected texture on the surface of the component also moves and creates an additional structure to help with the reconstruction. Acquiring multiple image pairs with different textures of the same object scene produces a lot more image points, resulting in increased resolution. In addition to the resolution, the robustness of the data on difficult surfaces also increases, as the shifted pattern structures apply additional information to shiny, dark or reflective surfaces.

Another big use of vision technology in highly automated car production lines is robot guidance. An automobile manufacturing plant in China was able to improve robot handling of large parts by installing Teledyne Dalsa Genie cameras to guide the robot gripper – with the imaging system, the robot was able to grip a part every two minutes, as opposed to every five or six minutes without vision.

New production lines for electric vehicles will require new automation solutions, which will involve robotics and machine vision. With the big auto makers ramping up for large-scale e-mobility production, the automotive market continues to be a leading user of vision technology.