The car industry, with its high-volume production, fast-moving machinery and need to keep lines running, is a natural fit for vision systems, but challenges remain in combining man and machine.

‘We do have vision systems we have implemented in the automotive sector,’ says Robert Pounder, Olmec’s technical director. ‘One example is colour inspection – where the camera is used to replace manual inspection, which has a tendency for error.’ In one particular application Olmec was involved with, a vision system was set up to inspect the position of coloured cables and their connectors on a PCB, to be used as part of a car’s electronic system. The cables had to be in the correct colour sequence and placed to within 1mm of where the connectors are soldered.

‘The operator positions the cables over the PCB and we have to image it constantly and track the cable movement. When the cable is in the correct position we then close some clamps that the operator’s finger is over. Then we can check colours are in the right sequence and let the part go onto the next station,’ explains Pounder.
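
The acceptance logic Pounder describes can be sketched in a few lines. The following Python is a minimal illustration, not Olmec's implementation: the `Cable` record, the `check_assembly` function, the millimetre coordinates and the use of straight-line distance for the 1mm tolerance are all assumptions made for the example. A real system would obtain the cable positions and colours from image processing that is not shown here.

```python
import math
from dataclasses import dataclass


@dataclass
class Cable:
    """One detected cable end (hypothetical record, not from the source)."""
    colour: str   # colour label assigned by the vision system
    x_mm: float   # measured position on the PCB, in millimetres
    y_mm: float


def check_assembly(cables, targets, expected_colours, tol_mm=1.0):
    """Return (ok, reason) for one set of cables, listed in sequence order."""
    if not (len(cables) == len(targets) == len(expected_colours)):
        return False, "wrong number of cables"
    # Step 1: every cable must sit within tol_mm of its solder point.
    for i, (cable, (tx, ty)) in enumerate(zip(cables, targets)):
        if math.hypot(cable.x_mm - tx, cable.y_mm - ty) > tol_mm:
            return False, f"cable {i} out of position"
    # Step 2: the colours must appear in the expected order.
    for i, (cable, colour) in enumerate(zip(cables, expected_colours)):
        if cable.colour != colour:
            return False, f"cable {i} is {cable.colour}, expected {colour}"
    return True, "ok"
```

In this sketch the position check runs before the colour check, mirroring the order in the quote: the clamps close once the cables are in place, and only then is the colour sequence verified before the part is released.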

In automotive, combining man and machine does not only aid the worker; it can also control how they do their job. ‘Ordinarily, you have to check without the operator there, but here the cameras are part of the workflow and the operator can’t move onto the next stage unless the camera says they have everything in the right position,’ Pounder says.

To give the worker the go-ahead, the vision system has to check the cables’ colours, which in this application are reds, browns, blacks and greens. These are distinct colours, but according to Pounder the cables are very reflective, which for a vision system would normally call for a high level of lighting. ‘From a lighting perspective, it is a bit of a challenge as machine vision illumination is quite different to what the operator wants,’ explains Pounder. Workers don’t want the high-intensity lighting that is preferable for some vision systems. Pounder adds: ‘It requires quite a carefully considered approach to integrate machine vision with operators that are working in that area.’

Other examples of vision system applications for the automotive industry that Pounder gives include checking that the correct components are being used, quality control for engine assembly, and camshaft installation inspection. One such system uses machine vision to ensure the pistons used are the correct type before the engine is started for the first time. The wrong pistons can mean catastrophic failure for the engine. ‘It is so they don’t destroy the engine when they turn it over for the first time. It is all about error-proofing, so when an operator has the components they know they have the correct components. A lot of the automotive industry still has, for the areas we’ve worked in, a lot of manual assembly,’ says Pounder.

Track and trace

At Continental’s factory in the Czech Republic, electronic assemblies are produced and the industry mandates that each part is labelled with identifying data to track it through the supply chain. One of Continental’s end customers, an unnamed global automobile manufacturer, has specified a new requirement: verification of the position and print quality of each label on its subassemblies. To meet this requirement, Continental replaced its existing laser barcode scanner with a miniaturised smart camera that can verify both.

The camera Continental chose was Microscan’s Vision Mini, which measures 26 x 46 x 54mm, weighs 57 grams and has an RS-232 serial connection. Implementation of the system took place in three phases. First, feasibility studies identified the key parameters such as the software settings, hardware specifications, and lighting considerations. The smart camera has integrated lighting, but an external lighting source was also used to enhance the mark contrast on the monochromatic parts.

The system was installed on Continental’s assembly line for evaluation, which covered its mounting configuration and communication protocols. Because a previous scanner system had been in use, the camera’s communication protocols needed to emulate those of the former system, allowing a smooth migration for the station’s programmable logic controller (PLC). On the production line, once the label has been printed, the PLC sends a signal to the camera, which reads the barcode and locates the label’s position.

Another example of vision system use in the automotive industry in the Czech Republic is at Bosch Diesel in Jihlava, a city in the south of the country. Bosch Diesel produces components for diesel technology and the company wanted to use machine vision systems to check product quality and to track the parts it produces. In one part of the Bosch factory, 80 barcode readers and more than 25 vision systems are used. Bosch wanted to improve the detection rates of its vision systems and turned to Cognex. Petr Koten, a systems division technologist at Bosch Diesel, says: ‘Ambient lighting is a factor that may have an impact on the functionality of the machine vision system. Therefore, it is necessary in some cases to use special external lights, such as infrared lighting.’

According to Cognex, the error detection rate improved from the original system’s 85 per cent to 99 and even 100 per cent. To maintain this detection rate, operators can wipe the cover of the camera lens two or three times per shift and according to Cognex no other maintenance is needed. The machine operators do not set up the vision systems, but with the vision system’s help their job is to identify the source of production errors on diesel pump bodies and other products. The images taken by the vision systems are recorded and this allows engineers to review this historical data to solve production problems.

Automotive components inspected at Bosch Diesel. Credit: Cognex

Another use of Cognex technology is at TRCZ, a subsidiary of the Japanese car parts maker Tokai Rika. Based in Lovosice in the north of the Czech Republic, TRCZ operates from a factory built in 2003. Its product range includes multi-drivers, safety belts and other components used to ensure active and passive safety in vehicles. The main customers for TRCZ’s components are the European factories of Toyota, Suzuki, Ford and Volvo. TRCZ uses the work-in-progress movement system known as Kanban, where a production station only receives parts from the preceding station when it is ready for them. The Czech subsidiary chose Cognex for an ID code reading system to enable Kanban.

Four fixed-mount readers have been installed in the factory to monitor the electronic traceability system for Kanban. The company can now keep records of the relevant processes in its operations.

One of the processes that can be used in automotive manufacturing and the supply chain is robotic bin picking, which uses vision to guide a robot arm in picking components. The PLB500 from Sick uses a 3D camera with pre-programmed software for part localisation and robot integration.

With its 3D robot eye, the PLB500 is suited to automotive robotic bin-picking tasks. The vision system is designed for parts measuring 150 x 150 x 150mm or larger, and is able to locate parts of different shapes and sizes in a bin under varying environmental conditions.

The software for the 3D part localisation uses the part’s CAD data to understand the likely position and orientation of the object that is to be picked out by the robot gripper. This data enables precise and flexible visual guidance for the robots. Once the part has been identified and gripped, the robot can feed it from the bin to the next operation.

Vision systems have played, and will continue to play, an important role in the automotive industry. From inventory systems such as Kanban to labelling quality control and component selection during assembly, cameras and computers can aid workers in their goal to have zero defects on every shift.

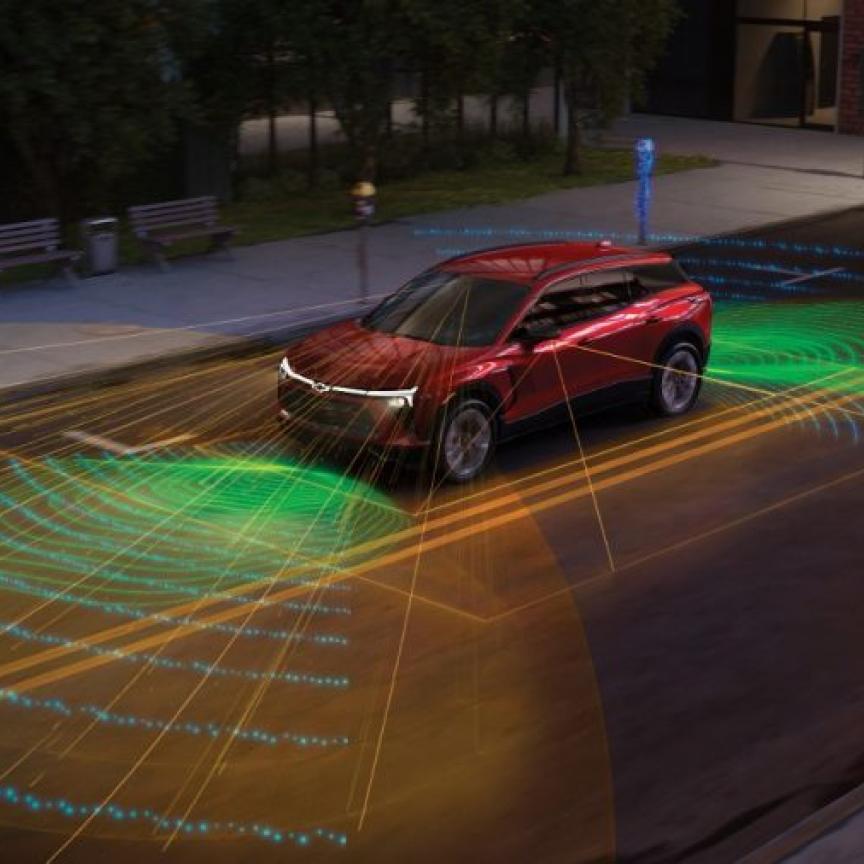

Modern vehicles incorporate a battery of sensors to make the car easier and safer to drive, from those that aid parking to automatic braking sensors. Toyota’s Central R&D Labs in Japan has developed a prototype high-definition imaging lidar sensor for its Advanced Driver Assistance Systems (ADAS). The time-of-flight 3D sensor, which has a 100m range, could be used in future Toyota vehicles for aspects like obstacle avoidance, pedestrian detection and lane detection.

Speaking at the Image Sensors 2013 conference in London in March, Cristiano Niclass of Toyota said that the imaging lidar sensor provides more comprehensive coverage of the road for driver assistance than the alternative methods of millimetre-wave radar or stereovision, both of which have drawbacks – millimetre-wave radar has good range, but low angular resolution and field of view, whereas stereovision has a better field of view, but only operates over a shorter distance.

The lidar sensor has a range of 100m and operates in bright sunlight, as well as in rain and adverse weather conditions, Dr Niclass said. The system uses a polygon mirror to direct the laser beam in six different directions and a time-of-flight sensor running at 202 x 96 pixels at 10fps. It is eye-safe, with a laser output of 21mW.

The system was tested under a background illuminance of 70klux and a sky illuminance of greater than 100klux, and recorded an error of less than 15cm at 100m distance at 10fps.
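
To put the quoted figures in context, the short Python sketch below works through the basic time-of-flight relation, in which range is the speed of light multiplied by the round-trip time and divided by two. It is generic physics rather than a description of Toyota's sensor design; the function names are illustrative, and treating every pixel as one range sample per frame is an assumption.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def round_trip_time_s(distance_m):
    """Time for a laser pulse to reach a target and come back."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_S


def range_from_round_trip_m(time_s):
    """Inverse relation: target range from a measured round-trip time."""
    return SPEED_OF_LIGHT_M_S * time_s / 2.0


# Round trip to a target at the sensor's stated 100m range.
round_trip_100m_ns = round_trip_time_s(100.0) * 1e9

# Round-trip timing difference that corresponds to 15cm of range.
timing_for_15cm_ns = round_trip_time_s(0.15) * 1e9

# Range samples per second, assuming one sample per pixel per frame.
samples_per_second = 202 * 96 * 10

print(f"Round trip at 100m: {round_trip_100m_ns:.1f} ns")
print(f"Timing equivalent of 15cm: {timing_for_15cm_ns:.2f} ns")
print(f"Samples per second: {samples_per_second}")
```

Under these assumptions a pulse takes roughly 667 nanoseconds to travel to a target at 100m and back, and a 15cm range error corresponds to about one nanosecond of round-trip timing, which gives a sense of the timing precision such a sensor needs.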

Dr Niclass said the sensor would recognise most objects based on the 3D data. He said that, at 10fps, there would be some motion artefacts when driving at speed on a motorway, for instance, but added that the system is designed for driver assistance rather than autonomous driving, which is a lot more challenging.
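
A rough calculation shows why motion artefacts appear at 10fps. The Python sketch below estimates how far a vehicle travels between consecutive frames; the 120km/h motorway speed is an assumed figure for illustration and does not come from the talk, and the function name is hypothetical.

```python
def travel_per_frame_m(speed_kmh, frames_per_second):
    """Distance a vehicle covers between two consecutive frames."""
    speed_m_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_s / frames_per_second


# Assumed motorway speed of 120km/h at the sensor's 10fps frame rate.
motorway_travel_m = travel_per_frame_m(120.0, 10.0)
print(f"Travel per frame: {motorway_travel_m:.2f} m")
```

At that assumed speed the car moves a little over 3m between frames, so the scene shifts noticeably from one capture to the next.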